out2getyou

VMWARE - Microsoft SQL Clustering best practice (SAN, vDisks & vSwitch)

Hi All,

Need some assistance with the configuration of SQL clustering for our SharePoint farm. It's actually already set up, but I'm revisiting its configuration and I've read that my predecessor set it up incorrectly.

SAN - 3PAR via Fibre Switch, all LUNs are thin provisioned.

vDisks - all VM disks are thin provisioned (I have read that they need to be eager zeroed thick - why is this?)

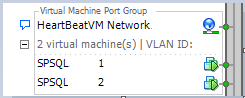

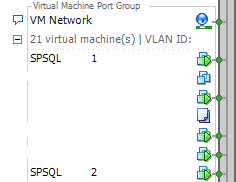

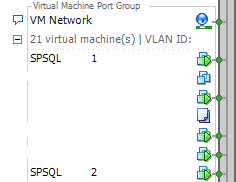

vSwitch - currently both nodes are sitting within the same virtual machine port group. A DRS rule has been set up so the two nodes move together all the time. Within the nodes, two vNICs have been set up: one for the heartbeat network and one for the normal server network.

Here are some screen shots:

ASKER

Interesting about eager zeroed thick disks... do you know why they perform best? Are all the disks in your environment eager zeroed? Is your SAN thin provisioned and your VM disks thick?

Well, we have 2 ESXi hosts in a vCenter cluster, and both VMs move together across the ESXi hosts. So if the SQL nodes are on one ESXi host and that host failed, both VMs would move across to the other ESXi host "together", as set in DRS. Both ESXi hosts have the same vSwitch and VM port group settings, so automatic vMotion with DRS would be fine. So the SQL cluster wouldn't be down like you say.

The standard production network is on one VLAN, and the heartbeat network is on another VLAN.

So my main question (aside from the vDisk question above) is: do the SQL nodes need this DRS rule to move together, or can all the hosts have the same vSwitch and VM port groups, with a standard production VLAN and a heartbeat VLAN, and let DRS move the nodes around to separate hosts?

All our disks in our production environment are Eager Zero.

Our SAN does Thin Provision and Thick Provision.

Eager zeroed means the full disk is zeroed out during provisioning, so the VM does not have to spend time zeroing a block the first time that block is accessed.
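To make the difference concrete, here is a minimal toy model in Python. It is only a sketch: `ToyDisk` and `first_write_penalty` are made-up names, the "blocks" are abstract, and it does not reproduce real VMFS or 3PAR behaviour. It simply counts how many zeroing operations land on the VM's write path for each provisioning style.

```python
class ToyDisk:
    """Toy model of a virtual disk split into blocks (hypothetical, not real VMFS)."""

    def __init__(self, blocks: int, eager: bool):
        # Eager zeroing pays the zeroing cost for every block at provisioning time.
        self.zeroed = [eager] * blocks
        self.provision_zero_ops = blocks if eager else 0
        self.runtime_zero_ops = 0

    def write(self, block: int) -> None:
        # A block that was not zeroed up front must be zeroed on its first write.
        if not self.zeroed[block]:
            self.zeroed[block] = True
            self.runtime_zero_ops += 1


def first_write_penalty(blocks: int, eager: bool) -> int:
    """Write every block twice and return how many zeroing ops hit the write path."""
    disk = ToyDisk(blocks, eager)
    for block in range(blocks):
        disk.write(block)
        disk.write(block)  # a second write to the same block never zeroes again
    return disk.runtime_zero_ops


if __name__ == "__main__":
    print("eager zeroed:", first_write_penalty(8, eager=True))
    print("zero on first write:", first_write_penalty(8, eager=False))
```

In this model the eager disk does all of its zeroing before the VM ever writes, while the other disk pays once per block during normal I/O, which is the first-access cost described above.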

I'm sorry, but if a host fails, both your SQL nodes would also FAIL, and then 1-2 minutes later both would be restarted automatically by VMware HA.

VMware vSphere cannot possibly know in advance that a host is going to fail and move your VMs before it does - impossible!

Delete the rules and create another rule which keeps the SQL nodes apart, so they are on different hosts.

In the event a Host fails, your SQL Cluster will still be up.
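The effect of the two kinds of rule can be sketched in a few lines of Python. This is an illustration only: the host names (`esxi-a`, `esxi-b`), VM names, and the `surviving_nodes` helper are hypothetical and have nothing to do with the vSphere API. It just shows which cluster nodes are still running at the moment a host dies.

```python
from typing import Dict, List


def surviving_nodes(placement: Dict[str, str], failed_host: str) -> List[str]:
    """Return the VMs that are still running immediately after failed_host dies."""
    return sorted(vm for vm, host in placement.items() if host != failed_host)


def cluster_stays_up(placement: Dict[str, str], failed_host: str) -> bool:
    """A failover cluster stays online if at least one node survives to take over."""
    return len(surviving_nodes(placement, failed_host)) > 0


# "Keep together" rule: both SQL nodes share one host.
keep_together = {"sql-node-1": "esxi-a", "sql-node-2": "esxi-a"}
# "Keep apart" (anti-affinity) rule: one SQL node per host.
keep_apart = {"sql-node-1": "esxi-a", "sql-node-2": "esxi-b"}

if __name__ == "__main__":
    print(cluster_stays_up(keep_together, "esxi-a"))  # both nodes lost until HA restarts them
    print(cluster_stays_up(keep_apart, "esxi-a"))     # the other node can take over
```

With the keep-together placement, losing that one host leaves no node to fail over to; with the keep-apart placement, one node is always left standing.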

All hosts can use the same Virtual Machine Port Group name on their vSwitch.
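If you want to sanity-check that the port groups really do match on every host before letting DRS move the nodes, something like the following Python sketch could be run against an inventory you have typed in or exported yourself. The data layout (host name mapped to port group name and VLAN ID), the names `Production` and `Heartbeat`, and the VLAN numbers are all assumptions for illustration; nothing here talks to vCenter.

```python
from typing import Dict, List


def portgroup_mismatches(hosts: Dict[str, Dict[str, int]]) -> List[str]:
    """Compare every host's port groups (name -> VLAN ID) against the first host."""
    reference_host = sorted(hosts)[0]
    reference = hosts[reference_host]
    problems = []
    for host in sorted(hosts):
        groups = hosts[host]
        for name in sorted(set(reference) | set(groups)):
            if name not in groups:
                problems.append(f"{host}: missing port group {name!r}")
            elif name not in reference:
                problems.append(f"{reference_host}: missing port group {name!r}")
            elif groups[name] != reference[name]:
                problems.append(
                    f"{host}: {name!r} is VLAN {groups[name]}, expected {reference[name]}"
                )
    return problems


if __name__ == "__main__":
    inventory = {
        "esxi-a": {"Production": 10, "Heartbeat": 20},
        "esxi-b": {"Production": 10, "Heartbeat": 20},
    }
    print(portgroup_mismatches(inventory) or "port groups match on all hosts")
```

An empty result means every host carries the same port group names with the same VLAN IDs, which is what the VMs need in order to land on any host without losing either network.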

ASKER

What about RDM LUNs? Do we need these to put the SQL nodes on separate hosts?

RDM LUNs are presented directly to the hosts and passed "through" to the VMs for use by the Failover Cluster - a MUST!

Cannot do Failover Clustering across Hosts without RDMs.

ASKER

I'm not too sure if we have RDM LUNs. How do we know? And I'm not sure what they are for.

Look at the VM Settings, and check your disks, e.g. Raw Mapped Disks.

ASKER

Are there any risks in using raw mapped disks? I spoke to my manager about it and he is a bit hesitant about raw mapped disks, as they used them 3-4 years ago and they caused heaps of problems.

It makes me wonder if your SQL Server cluster has been created as what we call a "Cluster in a Box", which would explain why both nodes are kept on one host: a Cluster in a Box breaks unless both nodes are on the same host.

Can you upload screenshots of the VM settings?

As for Raw Mapped Disks, they are a requirement for Failover Clustering.

ASKER

Yeah sure.... what screen shots did you want exactly?

Right-click > Edit Settings > Disk Settings on both VMs.

I'd like screenshots showing how the virtual disks are currently set.

ASKER

Just check the properties on the SCSI Controllers

also check to see if you have any RAW disks, e.g. disk 1 to disk 6 ?
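What those two checks tell you can be written down as a small decision rule. The Python below is only a sketch: the dictionary layout and the `classify_cluster_layout` function are hypothetical, and the strings `noSharing`, `virtualSharing` and `physicalSharing` are assumed labels for the None / Virtual / Physical bus sharing options shown on the SCSI controller in Edit Settings. You would fill in the values by hand from what you see on each VM.

```python
from typing import Dict, List


def classify_cluster_layout(
    scsi_controllers: List[Dict[str, str]], disks: List[Dict[str, str]]
) -> str:
    """Guess the clustering layout from SCSI bus sharing mode and disk types."""
    sharing = {controller["shared_bus"] for controller in scsi_controllers}
    has_rdm = any(disk["kind"] == "rdm" for disk in disks)

    if "physicalSharing" in sharing:
        # Physical bus sharing is the mode used when nodes sit on different hosts.
        if has_rdm:
            return "cluster-across-boxes"
        return "unexpected: physical bus sharing without RDM disks"
    if "virtualSharing" in sharing:
        # Virtual bus sharing only works while both nodes are on the same host.
        return "cluster-in-a-box"
    return "no-shared-disks"


if __name__ == "__main__":
    node = {
        "scsi_controllers": [{"shared_bus": "noSharing"}, {"shared_bus": "virtualSharing"}],
        "disks": [{"kind": "vmdk"} for _ in range(6)],
    }
    print(classify_cluster_layout(node["scsi_controllers"], node["disks"]))
```

If every shared controller shows virtual sharing and none of the disks are raw mapped, you are almost certainly looking at a Cluster in a Box.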

ASKER

Hi Andrew, any updates on this? cheers

ASKER

ah okay.... I think I understand.

So right now we have a "Cluster in a box" configuration that's using SCSI bus sharing, which doesn't support DRS vMotion. This is why we have that rule applied to those VMs, to keep them on the same box.

Is this correct?

Now what if we want to use RDM?

1. What are the advantages?

2. what are the risks?

3. Would the change or transition be a big job?

4. Why would my predecessor have chosen to set up the cluster this way?

Thank you very much for your assistance and for helping me understand, Andrew. Much appreciated. If I knew your address, I would send you a bottle of wine as a gift :p

ah okay.... I think I understand.

So right now we have a "Cluster in a box" configuration that's using SCSI bus sharing, which doesn't support DRS vMotion. This is why we have that rule applied to those VMs, to keep them on the same box.

Is this correct?

That's correct. It's the only way Cluster in a Box can work, because virtual SCSI bus sharing only works on a single ESXi host!

1. What are the advantages?

Supported by Microsoft.

vMotion will operate correctly.

DRS will operate correctly.

You will be able to perform ESXi maintenance without having to shut down the cluster, as you must with the Cluster in a Box solution.

2. what are the risks?

I cannot foresee any risks, other than a lack of storage knowledge and training.

3. Would the change or transition be a big job?

It's a substantial piece of work to perform a conversion: it requires setting up new LUNs and a new cluster, and it requires downtime.

4. Why would my predecessor have chosen to set up the cluster this way?

Many reasons: lack of training or skills, did not understand RDM... Management wanted it that way! Who knows!

Thank you very much for your assistance and for helping me understand, Andrew. Much appreciated. If I knew your address, I would send you a bottle of wine as a gift :p

Yes, we like gifts - see my Experts Exchange Profile

You can find my company website there!

Follow the bread crumbs....

ASKER

that's great! thanks for the help.

In terms of performance, would RDM affect storage performance?

Any disadvantages?

Also would you help me set it up? in a new question of course :)

Performance will certainly remain the same, or be better, it will not get any worse.

I would love to help you set this up... BUT

To be honest, it is probably beyond the scope of a forum question to set up a complete new two-node RDM Failover Cluster for Windows 2008/2012, including SQL clustering, and to transfer the databases from the old server to the new server.

Consider our time zone differences and the complex nature of what is required. It would be difficult enough if I were sat at the console of the equipment, and it would take us 3-4 man-days.

check this

A Guide to installing Microsoft Failover Clustering and SQL Failover Clustering on VMware vSphere on 2 Windows VMs across 2 VMware hosts

https://www.experts-exchange.com/Database/MS-SQL-Server/SQL_Server_2008/A_15039-A-Guide-to-installing-Microsoft-Failover-Clustering-and-SQL-Failover-Clustering-on-VMware-vSphere-on-2-Windows-VMs-across-2-VMware-hosts.html

ASKER

Thanks Andrew... and I understand the job is beyond scope.

Do you think you would be able to write the instructions as briefly as possible? Like one-liners?

Would I need to rebuild the nodes from scratch?

You could modify existing nodes.

What I think you could do is practice setting this up in the lab environment you have built, and I can offer you some assistance.

Once you have mastered it, you can then think about what you want to do about production.

What versions of Windows and SQL?

ASKER

OS is 2012 Datacenter on both nodes and SQL version is 2012.

How could I modify the existing nodes? Is it worth the headache, or should I just create new nodes and a new cluster and then migrate the database across?

I would recommend creating a new Cluster, VMs, Nodes, LUNs, and then migrate the DB across.

This will give you time, and will not affect production.

You could post questions in phases, and I could help you out. But it's not going to be quick.

ASKER

OK, I think starting from scratch is a good idea as well! I just need to make sure we have the storage for it.

What do you think of the instructions in the article posted by EugeneZ? Thanks EugeneZ, by the way! :)

Also, do you want me to post another question regarding the assistance, or would this question suffice?

That article, although recently published, is out of date: it uses an old version of vSphere, and it is written for Windows 2008 and SQL 2008!

You really need to post a new question, and I would suggest several questions rather than one large question.

First question, description: Set up two VMware virtual nodes for use with a SQL cluster.

In this we can discuss and set up the two nodes with their RDM LUNs.

You also need to work out how many drive letters you currently have in your current install.

I think there were at least 5-6 virtual disks, excluding the OS.

ASKER

Ok sounds great!

I will discuss with my manager and then post the question on what we agree on.

thanks very much Andrew! you have been a life saver ....

ASKER

fantastic!

The DRS rule seems a little weird: it would mean that if the host they were both on failed, your SQL cluster would be down. This is ridiculous.

Delete the rules and let DRS move the VMs around the cluster. Use the standard production network, or use a VLAN as the private network for the heartbeat, as long as it's the same network.