pzozulka

asked on

NetApp: Setting up separate IP for each datastore

It has been recommended that we create a separate IP for each datastore. Since our ifgrp is set up for link aggregation, this should allow us to create multiple sessions per ESXi host and improve throughput.

How do we create separate IPs for each datastore? For more details, our /etc/rc file is below. Our NetApp is a FAS2240, and we use NFS volumes.
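For context, one common way to give a 7-Mode filer additional addresses on an existing interface group is to add alias lines to /etc/rc after the `ifconfig ifgrp3` line. The following is only a sketch: the two addresses are hypothetical placeholders, and it assumes they are free and fall inside the ifgrp3 subnet (netmask 255.255.255.224).

```
# Hypothetical alias addresses; substitute free IPs from the ifgrp3 subnet
ifconfig ifgrp3 alias 10.0.128.40 netmask 255.255.255.224
ifconfig ifgrp3 alias 10.0.128.41 netmask 255.255.255.224
```

Each NFS datastore would then be mounted on the ESXi hosts against a different one of these addresses, so that an IP-based load-balancing policy (`-b ip`) has more than one address pair to distribute.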


#Auto-generated by setup Tue Aug 7 17:53:15 PDT 2012

hostname i1whlnetapp01

ifgrp create lacp ifgrp1 -b ip e0a e0b

ifgrp create lacp ifgrp2 -b ip e0c e0d

ifgrp create single ifgrp3 ifgrp1 ifgrp2

ifconfig ifgrp3 `hostname`-ifgrp3 mediatype auto netmask 255.255.255.224 partner ifgrp6 mtusize 1500

ifconfig e0M `hostname`-e0M netmask 255.255.255.240 mtusize 1500

route add default 10.0.128.61 1

routed on

options dns.domainname domain.local

options dns.enable on

options nis.enable off

savecore

ASKER CERTIFIED SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.


ASKER

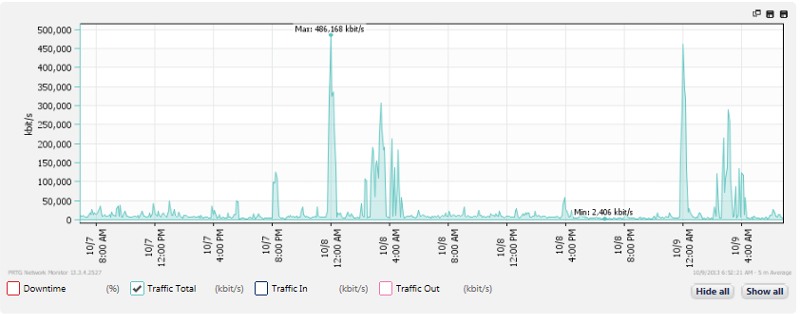

The graphic shows the traffic (for the past 2 days) on the switch port directly connected to vmnic2 on our ESXi host. This is the NIC handling the NFS traffic. Even though we have 2 NICs in this team, esxtop shows vmnic2 handling almost all of the storage traffic.

Having said that, it doesn't seem like we're using much of the pipe, so would there be much improvement, if any, if we set up multiple IPs for different datastores?


SOLUTION


This is exactly what we see in most environments.

It seems that your highest traffic is during early morning hours (backups?). During normal working hours you use less than 10% of capacity.

Using more links will add nothing but complexity.


paulsolov:

I noticed that your rc listing has only a single subnet for the vif.

Are you using ifgrp3 for all storage protocols, such as CIFS, iSCSI, and NFS, or just for NFS?

Can you please post the listing if you have a filer config for multi-protocol storage support?


This is not my filer. If you have a specific question, open a new question; the configuration depends on the environment. Personally, I set up an interface with a trunk and configure VLANs depending on the environment.
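As a rough illustration of the trunk-plus-VLAN approach on 7-Mode, the /etc/rc entries could look something like the sketch below. The VLAN IDs (10 and 20) and all addresses are hypothetical, and it assumes the switch ports facing the ifgrp are configured as trunks carrying those VLANs.

```
# Hypothetical VLAN IDs and addresses, for illustration only
vlan create ifgrp3 10 20
ifconfig ifgrp3-10 192.168.10.5 netmask 255.255.255.0 mtusize 1500
ifconfig ifgrp3-20 192.168.20.5 netmask 255.255.255.0 mtusize 1500
```

Each VLAN interface can then carry a different protocol or datastore network; in an HA pair, a matching `partner` setting per VLAN interface would also be needed.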

ASKER

As for ESXi, our NIC teaming policy is based on port ID. One of the NICs goes to switch A and the other goes to switch B, so we are not able to use a LAG such as EtherChannel or HP trunks.