Improve the Performance of Your Virtual Environment

I have over 30 years in the IT industry. During this time I have worked with a variety of products in a variety of industries.


Virtualization has become very popular over the last decade. In many cases, a company that has not virtualized its entire data center has probably virtualized 90% or more of it by now. However, bottlenecks can still exist within virtualized infrastructures, especially in storage.

Current State of Virtual Environments

Virtualized environments rely heavily on shared storage or storage area networks (SANs). These are made by a wide range of vendors such as EMC, HP, and NetApp. Generally, the problem with these types of storage solutions is that the entire amount of available space cannot be consumed, because configuring a SAN requires a certain number of disks, or spindle count, to ensure performance. This problem has been offset over the years by adding faster resources such as SSDs and memory. Combining drive types allows storage pools and tiers to be created that provide faster access to extremely active (hot) data and push less active (cold) data onto slower disk. While these types of configurations have been very effective, they come at a high cost. SLC-based, enterprise-grade SSDs for SAN arrays can cost as much as $10,000 per drive, with memory modules possibly exceeding that cost.
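The hot/cold placement described above can be sketched in a few lines of Python. This is a simplified illustration and not any vendor's tiering algorithm: the function name `assign_tiers`, the sample access log, and the `hot_capacity` value are all hypothetical, and a real array would also weigh recency and move data on its own schedule.

```python
from collections import Counter


def assign_tiers(access_log, hot_capacity):
    """Place the most frequently accessed blocks on the fast tier.

    access_log: iterable of block IDs, one entry per I/O request.
    hot_capacity: number of blocks that fit on the fast tier (hypothetical).
    """
    counts = Counter(access_log)
    # Rank by access count (descending); break ties by block ID so the
    # result is deterministic.
    ranked = sorted(counts, key=lambda block: (-counts[block], block))
    hot = set(ranked[:hot_capacity])
    cold = set(ranked[hot_capacity:])
    return hot, cold


# Hypothetical access log: block "a" is read most often, then "b".
log = ["a", "b", "a", "c", "a", "b", "d"]
hot, cold = assign_tiers(log, hot_capacity=2)
print("fast tier:", sorted(hot))
print("slow tier:", sorted(cold))
```

The point of the sketch is the cost trade-off: only the small, busy fraction of the data needs to live on the expensive drives.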

All-Flash Arrays as a Solution

Within the past few years, all-flash arrays have become an option for driving better storage performance. Products such as Pure Storage and XtremIO, to name a few, tend to use enterprise MLC (eMLC) flash to drive better performance from the traditional architecture used in virtualized environments. These can typically be expanded by adding nodes and allowing the system to “rebalance” without any need for scheduled downtime. Adding nodes not only provides a simpler path to space and performance expansion, but to newer product upgrades as well.

Hyper-Converged Systems as a Solution

Now, hyper-converged systems such as Nutanix, SimpliVity, and VMware EVO:RAIL are starting to become more common. These systems combine compute and storage into a single unit or array to simplify configuration and reduce complexity and cost while improving performance. The idea is that everything has been pre-packaged and designed to provide the best performance possible, with little or no external configuration needed by an administrator. Unfortunately, these types of systems assume that compute and storage require expansion at the same rate. As most administrators know, storage capacity needs typically grow at a much greater rate. Expanding both at the same time may mean not only increased hardware acquisition costs, but increased licensing costs as well.
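A quick worked example makes the scaling mismatch concrete. The sketch below assumes a made-up node size (20 TB and 32 cores per node) and a made-up requirement (200 TB and 64 cores); none of these figures come from a real product, and `nodes_required` is a hypothetical helper.

```python
def nodes_required(storage_tb, cores, tb_per_node, cores_per_node):
    """Return (nodes to buy, nodes needed for storage, nodes needed for compute)."""
    # Ceiling division on integers: -(-a // b)
    for_storage = -(-storage_tb // tb_per_node)
    for_compute = -(-cores // cores_per_node)
    # Compute and storage come bundled, so the larger requirement wins.
    return max(for_storage, for_compute), for_storage, for_compute


# Hypothetical requirement and node size.
nodes, for_storage, for_compute = nodes_required(
    storage_tb=200, cores=64, tb_per_node=20, cores_per_node=32
)
idle_cores = nodes * 32 - 64

print(f"nodes for storage: {for_storage}, nodes for compute: {for_compute}")
print(f"nodes purchased: {nodes}, cores bought but not needed: {idle_cores}")
```

With these assumed numbers, storage drives the purchase of ten nodes even though two would satisfy the compute requirement, and each of the extra nodes may carry its own hypervisor licensing.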

However, even with all of the improvements in compute and storage performance, there can still be bottlenecks. What many people do not realize is that while the compute end has gotten faster and more powerful, and storage has gotten faster, the bus speeds connecting them have not improved much. This is now starting to create a bottleneck on the bus itself. So, what is the best way to address this problem? The answer is PernixData!

On-board Memory or Solid State Drives as a Solution

PernixData FVP is a unique product, which is currently available only for VMware environments. It is a kernel-based solution that aggregates the flash or RAM that exists on the physical hosts to accelerate both reads and writes in a clustered, fault-tolerant way. You can use host memory in addition to server-side flash and target your most demanding workloads with RAM. However, due to the cost of memory, that configuration is probably used only when absolutely necessary. Once installed, the product allows the administrator to create an FVP cluster. This is a container of sorts with which you associate acceleration resources (RAM or flash) and to which you then assign the VMs that you want accelerated. This pool does not present any new persistent storage to the vSphere cluster; it is used simply to accelerate I/O. With FVP you can accelerate reads, writes, or both, on a per-VM basis. This places the storage response to the host within the hypervisor itself, rather than waiting on a response from the backend storage system. In addition, since the space is pooled across all hosts, the data is safe in the event that a host goes offline before a write acknowledgement is received from the SAN.

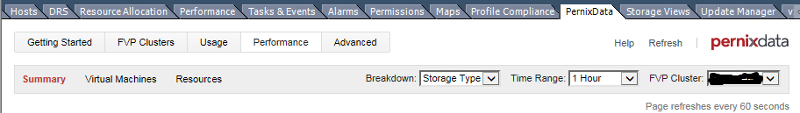
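The fault-tolerance idea described above, where a write is acknowledged from host-side resources but survives the loss of that host, can be illustrated with a generic write-back cache that keeps a replica on a peer. This is a minimal sketch of that general technique only; it is not PernixData's implementation, and the `Host` and `AcceleratedCluster` classes, the host names, and the dictionary standing in for the SAN are all hypothetical.

```python
class Host:
    """A hypervisor host with local flash or RAM used as an acceleration tier."""

    def __init__(self, name):
        self.name = name
        self.cache = {}      # block ID -> data held on local flash/RAM
        self.online = True


class AcceleratedCluster:
    """Generic write-back cache with one peer replica per write (illustrative)."""

    def __init__(self, hosts):
        self.hosts = hosts
        self.backend = {}    # stands in for the SAN
        self.dirty = {}      # block ID -> hosts holding an unflushed copy

    def write(self, primary, block, data):
        # Pick any other online host to hold the replica.
        peer = next((h for h in self.hosts if h is not primary and h.online), None)
        if peer is None:
            raise RuntimeError("no online peer available for the replica")
        primary.cache[block] = data
        peer.cache[block] = data
        self.dirty[block] = (primary, peer)
        # The VM gets its acknowledgement here, before the SAN has the data.
        return "ack"

    def flush(self):
        # Destage dirty blocks to the backend from any surviving copy.
        for block, holders in list(self.dirty.items()):
            source = next((h for h in holders if h.online), None)
            if source is None:
                continue  # no surviving copy; nothing can be destaged
            self.backend[block] = source.cache[block]
            del self.dirty[block]


h1, h2 = Host("esx01"), Host("esx02")
cluster = AcceleratedCluster([h1, h2])
cluster.write(h1, block=7, data=b"payload")
h1.online = False            # the primary host fails before destaging
cluster.flush()              # the replica on esx02 is written to the backend
print(cluster.backend[7])
```

In this sketch the acknowledgement is returned as soon as both host-side copies exist, which is why the backend array's latency no longer sits in the write path.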

Once installed in the environment, a tab called PernixData is added to vCenter. From this tab, there are several options for observing how the product is performing, including overall summary, usage, and performance tabs, as shown below.

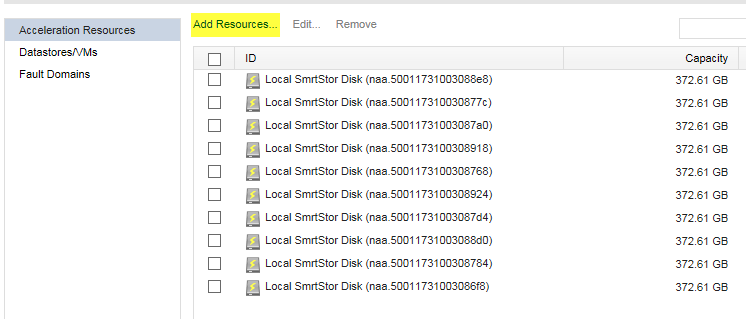

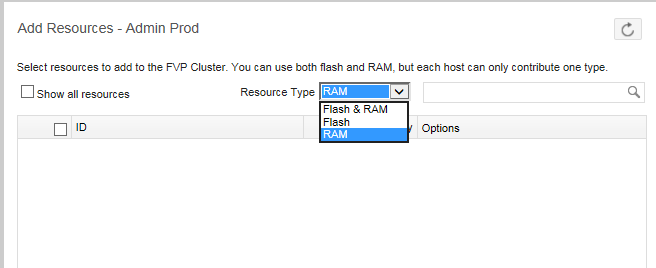

Configuring the resources takes only a few clicks. Under the FVP cluster, select “Add Resources”, then select flash, RAM, or both. Keep in mind, though, that a host can only use one type of resource. The drop-down presents both options only for the purpose of configuring the FVP cluster.


Once configured, the virtual environment will begin to monitor the data and “learn” which data should reside on the FVP to provide a faster response to the virtual machines. The performance can then be monitored. As you can see below, the bulk of the responses come from the FVP, pushing the IOPS of the actual datastore to a more stable, consistent pattern. This not only improves performance, it also reduces the load on the storage arrays, the storage fabric, and the HBAs on the hosts. In my experience with an EMC storage array, the FAST Cache usage was reduced to only 9% utilization.

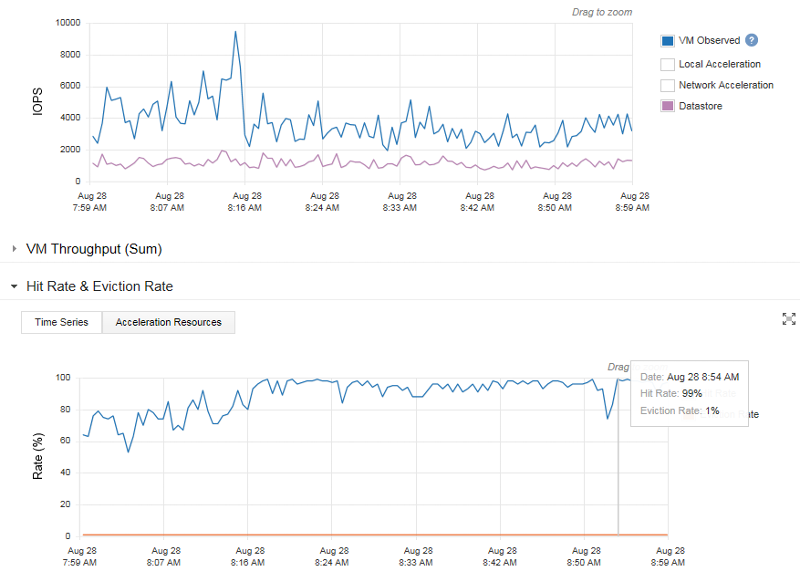
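The offloading effect on the backend array can be approximated with simple arithmetic: every read served from host-side flash or RAM is a read the array never sees. The sketch below is an illustration only. The function name `backend_read_iops`, the 10,000 IOPS workload, and the hit percentages are hypothetical, and it covers reads only, since accelerated writes still have to be destaged to the array eventually.

```python
def backend_read_iops(total_read_iops, hit_percent):
    """Estimate the read IOPS that still reach the array for a given hit rate."""
    if not 0 <= hit_percent <= 100:
        raise ValueError("hit_percent must be between 0 and 100")
    # Integer arithmetic avoids floating-point rounding in the result.
    return total_read_iops * (100 - hit_percent) // 100


# Hypothetical workload of 10,000 read IOPS at several hit rates.
for hit in (0, 50, 80, 95):
    remaining = backend_read_iops(10_000, hit)
    print(f"{hit:>3}% served from the host -> {remaining} read IOPS reach the array")
```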


In conclusion, if you are looking at purchasing a new storage solution to address your storage performance issues, my suggestion would be to consider implementing PernixData. Faster storage arrays often just mask the underlying problem. PernixData FVP, with what the company refers to as a ‘decoupled’ architecture, addresses the problems where they originate: at the host layer. Even if the expansion requirement is due to a need for additional space, you would be well served by implementing this product. It will allow the purchase to consist more of slower, less expensive disks rather than faster, higher-cost ones. In the end, you will see a higher-performing virtual environment with much lower storage cost.


Thank you for reading my article. I hope you find it useful, especially if you are experiencing storage bottlenecks. If you found it helpful, please indicate so with the button below this article. Any feedback is appreciated.

Thank you,

Rodney Barnhardt

