Introduction

Big Data refers to data collections that are so large and complex that traditional database tools struggle to manage them. It is widely regarded as a foundation for the future of Information Technology (IT). Organizations increasingly depend on the data they collect, which is why their interest in Big Data analytics keeps growing. The key to working with Big Data is organizing it for quick reference, so that summaries and indexes lead back to the source data. The large cloud providers illustrate the scale involved: Amazon AWS uses DDN with Lustre, Microsoft has been using Cray with Lustre, and Google uses FUSE or its own storage [1][2][3][4][5].

Knowledge of Big Data helps an organization craft the right strategy and compete in its industry. As with any field, newcomers run into challenges. This article covers the typical Big Data challenges organizations face, along with their solutions.

Understanding

Many organizations adopt or dismiss Big Data without learning its advantages and disadvantages as a new technology. They also fail to see why it matters to their business. Lacking reliable information, they form assumptions instead, for example that it is risky for the project or that it is too expensive.

Do proper research to understand the benefits and drawbacks of Big Data, and never accept or reject a technology without understanding the underlying concepts. To see how Big Data is applied at different levels, attend workshops and other Big Data events. You can also talk to partners who already use the technology and benefit from it.

Big Data is a given, and it is a requirement for training in Artificial Intelligence and Deep Learning [6]. Deep Learning training needs as much data as possible, because part of its purpose is to find patterns you may not see yourself. If you are not doing Deep Learning, you need to process the data with other algorithms and try to keep up with the information as it comes in. Big Data itself is not processed in real time. Instead, we train on Big Data and use the results to find algorithms that we then apply in real time, as in self-driving cars.
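The split between training on stored data and applying the result in real time can be sketched in a few lines. This is only an illustration under simple assumptions: the "model" is a mean and standard deviation rather than a deep network, and the readings, the function names `train_baseline` and `is_unusual`, and the `z_limit` parameter are all hypothetical.

```python
import statistics


def train_baseline(history):
    """Offline step: learn the mean and standard deviation from historical data."""
    return statistics.mean(history), statistics.pstdev(history)


def is_unusual(reading, baseline, z_limit=3.0):
    """Real-time step: flag one reading that lies far from the learned baseline."""
    mean, stdev = baseline
    if stdev == 0:
        return reading != mean
    return abs(reading - mean) / stdev > z_limit


# Hypothetical historical readings (stands in for the large training set).
history = [20.0, 21.0, 19.0, 20.0, 22.0, 18.0, 20.0, 21.0, 19.0, 20.0]
baseline = train_baseline(history)

# New readings arrive one at a time and are checked against the stored baseline.
for reading in [20.5, 35.0]:
    print(reading, is_unusual(reading, baseline))
```

The expensive pass over the historical data happens once, while each incoming reading only needs a cheap comparison against the stored result.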

Concepts

Data structures should be established to manage Big Data well, because they allow large data sets to be organized and indexed effectively. In this context, data is generally classed as either structured or unstructured [7].

Structured

- Definition: Data that is generally held in a relational database management system (RDBMS).

- Examples: Tabular data such as names, phone numbers, addresses, social security numbers, and other items found in client records.

- Database: Relational databases, queried with Structured Query Language (SQL).

Unstructured

- Definition: Everything that does not fall under structured data.

- Examples: Text files, email, social media, websites, text messages, phone calls, location data, media files, imagery, and sensory data, to name a few.

- Database: The most common databases of this type are "not only SQL" (NoSQL) databases.
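The difference between the two categories shows up as soon as you try to query them. The sketch below uses Python's built-in `sqlite3` and `re` modules; the table layout, the sample client rows, and the email text are made up for illustration.

```python
import re
import sqlite3

# Structured: rows with a fixed schema in a relational table, queried with SQL.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE clients (name TEXT, phone TEXT, city TEXT)")
conn.executemany(
    "INSERT INTO clients VALUES (?, ?, ?)",
    [("Ada", "555-0100", "London"), ("Grace", "555-0101", "New York")],
)
london_clients = [
    row[0]
    for row in conn.execute("SELECT name FROM clients WHERE city = ?", ("London",))
]

# Unstructured: free text has no schema, so fields must be extracted first.
email_body = "Hi, this is Ada. Please call me back on 555-0100 after lunch."
phones_in_text = re.findall(r"\b\d{3}-\d{4}\b", email_body)

print(london_clients)
print(phones_in_text)
```

With structured data the schema already says where the phone number lives. With unstructured data the same fact has to be pulled out of the text before it can be indexed or joined with anything else.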

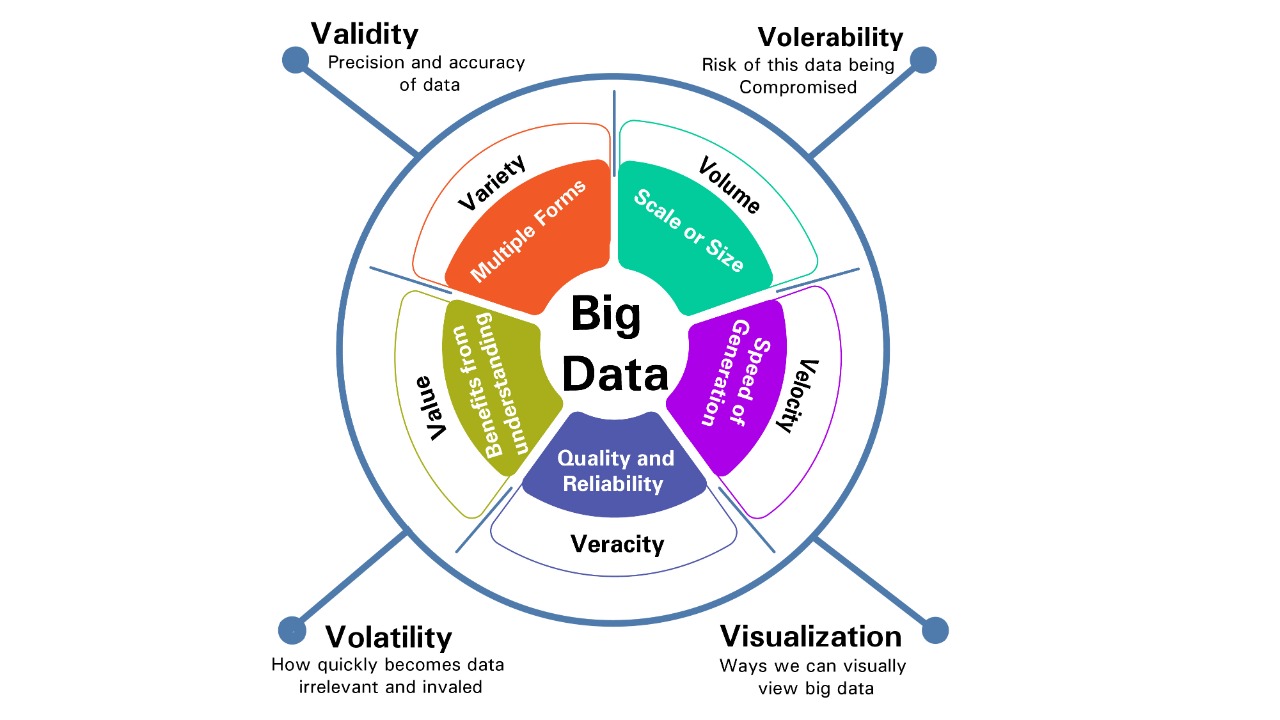

By definition, the attributes of Big Data are commonly summarized as the "5 Vs": Volume, Variety, Velocity, Value and Veracity. This is a growing field, so the list continues to evolve [8][9].

The base definition rests on three Vs: Variety, Volume and Velocity.

- Variety: Multiple forms of the data – Variety refers to the many types of data that come from many sources.

- Volume: The scale or size of data – Volume is the amount of data being generated.

- Velocity: Analysis of moving or streaming data – Velocity refers to the speed of data generation and the rate at which it is being processed.

The importance of Big Data lies in the value added by measurable, reliable data. The modern definition still describes very large, complex data, but it has recently been expanded to include two more Vs: Value and Veracity.

- Value: The benefits of understanding data.

- Veracity: Uncertainty of data – Veracity refers to the quality of the data: is it accurate and reliable?
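Four of these attributes can be approximated with simple measurements on a batch of records. The sketch below is one possible way to do that, not a standard definition: the function name `profile_batch`, the `type` and `value` fields, and the choice of metric for each V are assumptions made for illustration. Value is left out because it depends on what the business does with the data.

```python
import json


def profile_batch(records, seconds_elapsed):
    """Summarize a batch of records along four of the Vs (illustrative metrics only)."""
    # Volume: total size of the records when serialized as JSON.
    volume_bytes = sum(len(json.dumps(r).encode("utf-8")) for r in records)
    # Variety: the distinct record types seen in the batch.
    variety = sorted({r.get("type", "unknown") for r in records})
    # Velocity: records received per second.
    velocity = len(records) / seconds_elapsed
    # Veracity: share of records that actually carry a value.
    usable = [r for r in records if r.get("value") is not None]
    veracity = len(usable) / len(records) if records else 0.0
    return {
        "volume_bytes": volume_bytes,
        "variety": variety,
        "velocity_per_sec": velocity,
        "veracity": veracity,
    }


# Hypothetical batch collected over two seconds.
records = [
    {"type": "sensor", "value": 21.5},
    {"type": "sensor", "value": None},
    {"type": "log", "value": "disk full"},
    {"type": "sensor", "value": 22.1},
]
profile = profile_batch(records, seconds_elapsed=2.0)
print(profile)
```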

Because Big Data keeps evolving, so do its main concepts. Our understanding will likely grow beyond the 5 Vs as we further define what Big Data means. Some possible additions to the Vs are the following:

- Validity – refers more specifically to the precision and accuracy of data.

- Vulnerability – relates to cybersecurity risk.

- Volatility – refers to how quickly the data becomes irrelevant and invalid.

- Visualization – represents the many ways we can view Big Data.

Security

Big Data involves integrating data across the various divisions of a business. Many organizations see Big Data as a threat because they share information with third-party software to make data visible to other departments. Big Data typically relies on plenty of dispersed back-end data storage, which different platforms do not support locally. The third-party software may be intended only to view the data, but it may also be able to access the data for its own use.

As new technologies are introduced and Big Data is used in more ways, its security and confidentiality have become a concern. The main issues in Big Data Security (BDS) are protecting and verifying data [10][11].

Due to the large volume, speed and diversity of Big Data, processing it is challenging for conventional security models. This presents a challenge to security professionals, who must adapt to the massive scope of Big Data. The following table lists common threats to Big Data:

| Threats | Description |
| --- | --- |
| Breach of privacy | Big Data is a solution often used to store great volumes of personal information. Such a large store of data may make it easier for an attacker to steal sensitive personal information in one comprehensive attack. |
| Privilege escalation | Because Big Data can represent wide swaths of information, some users may be able to view data that they are not authorized to view. This is especially true if systems are not in place to restrict how users can view and edit database entries. Multiple users with unrestricted visibility to data can threaten its confidentiality. |
| Repudiation | The size of Big Data may make event monitoring difficult or infeasible. Without proper controls for non-repudiation, an attacker may be able to change data and then plausibly deny having done so. |
| Forensic | Complications include accurately securing, collecting, and evaluating Big Data sets, which is especially difficult because Big Data implementations often lack a consistent structure and draw on a variety of different sources. |
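One building block that addresses the repudiation threat is a tamper-evident audit log, in which each entry's hash also covers the previous entry. The sketch below is a minimal illustration using Python's `hashlib`. The function names, the entry fields, and the sample users are hypothetical, and a hash chain alone only shows that a record was changed. Proving who made a change would additionally require something like per-user digital signatures.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(user, action, prev_hash):
    """Hash the entry content together with the previous entry's hash."""
    body = {"user": user, "action": action, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, user, action):
    """Append an entry that is chained to the one before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "user": user,
        "action": action,
        "prev_hash": prev_hash,
        "hash": _digest(user, action, prev_hash),
    })


def verify_log(log):
    """Return True if every entry matches its hash and links to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        expected = _digest(entry["user"], entry["action"], entry["prev_hash"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "alice", "UPDATE clients SET phone = '555-0199'")
append_entry(audit_log, "bob", "DELETE FROM clients WHERE name = 'Grace'")
print(verify_log(audit_log))

# Editing an earlier entry after the fact breaks the chain.
audit_log[0]["action"] = "SELECT 1"
print(verify_log(audit_log))
```

This check does not notice entries removed from the end of the log, so in practice the latest hash would also need to be stored somewhere the attacker cannot reach.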

Cloud

Big Data is often kept in a data warehouse where organizations can save huge amounts of data, and in many cases that warehouse is cloud-based storage. There the data can be handled, cleaned, processed and used for various other activities. Today’s business organizations have massive amounts of data, and many of them save it in the cloud.

Big Data is not the cloud, however. Big Data is large, fast and diverse data; the cloud is one tool that offers a solution for it. In-house computing, set up correctly, is effectively an internal cloud in which the data is accessible only to the people you directly grant access to. Truly sensitive data in a public cloud (such as AWS or Azure) raises a major security concern: a foreign government, another company or their contractors all have potential access to your data, and you have limited control [12].

Another challenge organizations face is the cost of storing Big Data. Most companies think that Big Data will cost them far more than traditional data storage methods, but this is a myth: the cost depends on your needs and requirements. Setting up internally requires hardware, software, maintenance and highly skilled people to set up and maintain the internal cloud. Cloud providers benefit from economies of scale, which they can use to their advantage in cost, scale, co-location and speed.

Example Use Cases

Organizations can quickly get lost in the wide range of Big Data technologies on the market, and the variety can make it hard to choose one for a business or project. If you explore the ocean with only partial knowledge, you will never have a clear view of what to expect from an application or a technology. For example, Big Data tools such as Google BigQuery and Apache Hadoop can be useful platforms for developing your own analysis tools. Third-party cloud-based apps also provide log analysis services.

Big Data in itself has no value; however, it has great potential. It is used in every aspect of modern life, because we use information in everything. Since information is now easily accessed and shared, each person should be aware of what their connection to Big Data looks like. Big Data can also be used to solve efficiency problems by looking at how people and processes affect an organization's overall workflow [13][14][15][16][17].

- CCTV: Governments are using camera surveillance to control populations, track terrorism, and catch criminals with facial recognition. It also helps in understanding traffic patterns to make roadways safer or transportation more efficient. Camera data can even assist in deciding where to place access controls, like card readers, to make them more secure. This area is boundless and will continue to shape security in new ways.

- Phones: We use Big Data in phones every day. A notification that you parked your car in a certain location and a map that knows your home address are both examples of Big Data analytics at work. These are just some of the many ways mobile devices are shaping Big Data and cybersecurity.

- Network Anomalies: The amount of logged data on organizations’ networks has grown to the point that, without Big Data, it would be impossible to detect attackers. This is why security information and event management systems (SIEMs) have become a standard component in almost any mid-size to enterprise network architecture. These tools allow for advanced correlation on large data sets. On the engineering side, these systems end up being limited by how they handle the Big Data problem: if they cannot deal with the massive amounts of data logged, the security benefits can be limited. Many cybersecurity professionals do Big Data analysis before the data even gets to the SIEM, because there is so much data on networks that it is almost impossible to handle, even with Big Data. Network problems almost always come down to latency and throughput, which is a reason to handle this work on internal resources rather than on an external cloud, where denial-of-service (DoS) attacks, networking issues and server load can block it.

- Intrusion Detection: Big Data architectures are replacing traditional intrusion detection systems (IDS) because of the massive amounts of data, the high throughput requirements, and the need to understand events as close to real time as possible. Intrusion detection is one area where Big Data is relatively new in application and is just starting to be heavily researched. There are now a significant number of white papers published on this topic, especially on the reduction of “false positives”. If the current hypothesis is correct, we will reach a point where we can trust that a security event is a threat, and eliminate the false-positive fatigue that analysts currently face.

- Internet of Things (IoT): IoT devices are everywhere, generating huge data footprints, yet they have minimal amounts of storage or logging capabilities. Since these devices interconnect to other systems, they can report a lot of data, and Big Data can handle this unstructured data in valuable ways. This data may allow us to detect a health issue from a smart watch before the wearer recognizes it (we are already seeing this happen), to know when a device needs repairing before it breaks (think vibration monitoring systems in manufacturing), to understand inefficiencies in a process, or to predict when a person is walking up to a store in order to have what they are going to buy ready at the cash register. The applications for IoT with Big Data are boundless and will likely reshape how we live.

- Compliance: Big Data and risk scoring are reshaping compliance. In many industries, you must meet specific government requirements for compliance, and Big Data is allowing organizations to define their compliance levels and derive risk scores from them. There are even tools that let someone upload full network diagrams and then develop a risk score from them. This score is aggregated with all the other data required for compliance to give a more accurate understanding of risk, so that the organization can meet its compliance requirements. In many cases, such Big Data analysis allows for a better risk score, which leads to a more secure environment. Risk scoring will become more valuable to industry as it looks to secure networks and data from attackers.
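The pre-SIEM analysis mentioned under Network Anomalies often amounts to reducing raw events to a handful of summaries before they are forwarded. The following is a minimal sketch of that idea, assuming login events arrive as dictionaries. The field names `src_ip` and `outcome`, the function name, and the threshold of three failures are all hypothetical.

```python
from collections import Counter


def summarize_failed_logins(events, threshold=3):
    """Collapse raw login events into one alert per source IP that meets the threshold."""
    failures = Counter(e["src_ip"] for e in events if e["outcome"] == "failure")
    return [
        {"src_ip": ip, "failed_logins": count}
        for ip, count in sorted(failures.items())
        if count >= threshold
    ]


# Hypothetical raw events; a real network would produce millions of these.
events = [
    {"src_ip": "10.0.0.5", "outcome": "failure"},
    {"src_ip": "10.0.0.5", "outcome": "failure"},
    {"src_ip": "10.0.0.7", "outcome": "success"},
    {"src_ip": "10.0.0.5", "outcome": "failure"},
    {"src_ip": "10.0.0.9", "outcome": "failure"},
    {"src_ip": "10.0.0.5", "outcome": "failure"},
]
alerts = summarize_failed_logins(events)
print(alerts)
```

Only the summarized alerts would be sent on, so the SIEM receives one record for the noisy source instead of every individual event.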

Conclusion

Big Data is considered the foundation of the future of Information Technology. Its goal is to automate multiple processes to assist in finding value. It has turned out to be one of the most encouraging and successful innovations for anticipating future patterns. It is advisable to do proper research and explore the technology as much as you can.

References:

[1]https://aws.amazon.com/big-data/what-is-big-data/

[2]https://www.oracle.com/big-data/what-is-big-data.html

[3]https://aws.amazon.com/fsx/lustre/

[4]https://www.cray.com/solutions/supercomputing-as-a-service/cray-clusterstor-in-azure

[5]https://cloud.google.com/storage/docs/gcs-fuse

[6]https://www.ibm.com/blogs/systems/ai-machine-learning-and-deep-learning-whats-the-difference/

[7]https://blogs.oracle.com/bigdata/structured-vs-unstructured-data

[8]https://tdwi.org/articles/2017/02/08/10-vs-of-big-data.aspx

[9]https://thesai.org/Downloads/Volume7No3/Paper_37-Extract_Five_Categories_CPIVW.pdf

[10]https://journalofbigdata.springeropen.com/articles/10.1186/s40537-016-0059-y

[11]https://www.sciencedirect.com/science/article/pii/S1877050916322864

[12]https://www.hindawi.com/journals/sp/2018/5418679/

[13]https://intellipaat.com/blog/7-big-data-examples-application-of-big-data-in-real-life/

[14]https://arxiv.org/ftp/arxiv/papers/1905/1905.00490.pdf

[15]https://insidebigdata.com/white-paper/risk-scoring-big-data-and-data-analytics/

[16]https://medium.com/xnewdata/iot-big-data-success-case-1646291b55cb

[17]https://hadoop.apache.org/
