Browse All Articles > Who filled transaction log of SQL Server database?

This article guides you in finding the user, process and host, which produced too many transactions filling the transaction log and preventing you from shrinking it.

Transaction log (the .ldf file of a database) of SQL Server database can grow not only in the FULL RECOVERY or BULK_LOGGED model but even in SIMPLE. You may face with the issue when you already performed some full backups and/or log backups but you cannot shrink that big transaction log.

However backup should truncate transaction log, right? Well, not always.

The following example is presented in a standard Microsoft sample database AdventureWorks on an on-premise SQL Server Standard (or any) Edition.

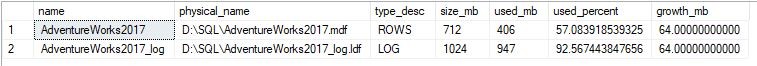

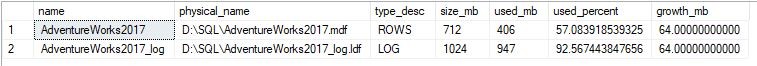

If you want to know the characteristics of the log and data file then use this query:![]()

You can extract the following information from the result above:

If we use the FULL recovery model and we already made a log backup, then we should expect that used_percent of the log is decreased. But the same if we have a SIMPLE recovery model because in that case, we should not do any backup to truncate the used portion of the transaction log. In SIMPLE recovery the transaction log should be truncated automatically.

So what's going on?

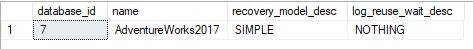

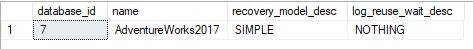

To check the log truncation problem, let's see the result of the following query:![]()

You can see that the recovery model is SIMPLE. Ok, so log should be really truncated automatically. But it is not. And the log_resue_wait_desc is NOTHING. Why? Because we saw that it is filled to 92%.

So we have to know that log_reuse_wait and log_reuse_wait_desc shows a status after the latest log truncation operation like:

If we have run any of these commands, then log_reuse_wait will show the latest status when there was no problem with the transaction log.

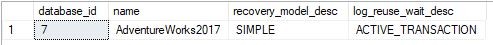

To trigger out refreshing of that status run a manual checkpoint:

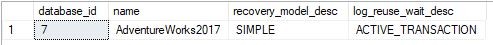

Let's check now the status:![]() Aha, here we are. The reason why we cannot shrink the log is an ACTIVE_TRANSACTION. There can be many reasons what you can read here or in Books Online.

Aha, here we are. The reason why we cannot shrink the log is an ACTIVE_TRANSACTION. There can be many reasons what you can read here or in Books Online.

In case of ACTIVE_TRANSACTION you can find that transaction by the following query:

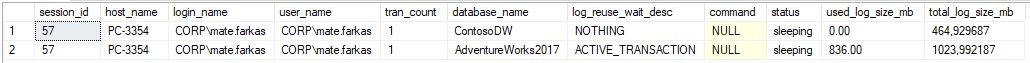

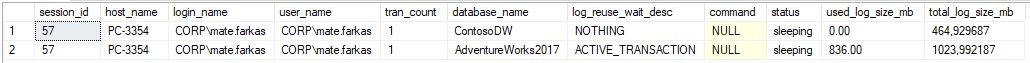

![]()

This query lists only users who are currently running any data modification transaction. The result above shows us the followings:

So here you can see that user CORP\mate.farkas already does not run his query but keeps open the transaction. The query keeps opened a transaction in another database as well (ContosoDW) but there it does not create any transaction log. So he forgot to run a ROLLBACK or COMMIT TRANSACTION command.

Now you can call that user and ask him/her to finish that transaction. As a final solution, you can KILL the session_id, but this must be the latest possibility.

However backup should truncate transaction log, right? Well, not always.

The following example is presented in a standard Microsoft sample database AdventureWorks on an on-premise SQL Server Standard (or any) Edition.

If you want to know the characteristics of the log and data file then use this query:

select name,

physical_name,

type_desc,

size * 8 / 1024 as size_mb,

fileproperty(name, 'SpaceUsed') * 8 / 1024 as used_mb,

100.0 * fileproperty(name, 'SpaceUsed') / size as used_percent,

case is_percent_growth

when 0 then growth * 8 / 1024

else size * growth / 100.0 * 8 / 1024

end as growth_mb

from AdventureWorks2017.sys.database_files

You can extract the following information from the result above:

- This database has only 1 transaction log. This query would show all of them.

- Size of the transaction log (1024 MB) is greater than data file (712 MB)

- We check only transaction logs, where type_desc = LOG and the most important is used_percent

- Fullness of transaction is over 92%

- Soon the transaction log will allocate more 64 MB space on disk drive D:

If we use the FULL recovery model and we already made a log backup, then we should expect that used_percent of the log is decreased. But the same if we have a SIMPLE recovery model because in that case, we should not do any backup to truncate the used portion of the transaction log. In SIMPLE recovery the transaction log should be truncated automatically.

So what's going on?

To check the log truncation problem, let's see the result of the following query:

select database_id, [name], recovery_model, log_reuse_wait_desc

from sys.databases

where [name] = 'AdventureWorks2017'

You can see that the recovery model is SIMPLE. Ok, so log should be really truncated automatically. But it is not. And the log_resue_wait_desc is NOTHING. Why? Because we saw that it is filled to 92%.

So we have to know that log_reuse_wait and log_reuse_wait_desc shows a status after the latest log truncation operation like:

- DATABASE or LOG BACKUP

- CHECKPOINT

- LOG SHRINK

If we have run any of these commands, then log_reuse_wait will show the latest status when there was no problem with the transaction log.

To trigger out refreshing of that status run a manual checkpoint:

checkpoint;

Let's check now the status:

select database_id, [name], recovery_model, log_reuse_wait_desc

from sys.databases

where [name] = 'AdventureWorks2017'In case of ACTIVE_TRANSACTION you can find that transaction by the following query:

select s.session_id,

s.host_name,

s.login_name,

s.nt_domain + '\' + s.nt_user_name as [user_name],

s.open_transaction_count as [tran_count],

d.name as [database_name],

d.log_reuse_wait_desc,

r.command,

s.status,

cast((

dt.database_transaction_log_bytes_reserved +

dt.database_transaction_log_bytes_reserved_system +

dt.database_transaction_log_bytes_used +

dt.database_transaction_log_bytes_used_system) / 1048576 as decimal(20, 2)) as used_log_size_mb,

ls.total_log_size_mb

from sys.dm_exec_sessions s

inner join sys.dm_tran_session_transactions st on s.session_id = st.session_id

inner join sys.dm_tran_database_transactions dt on st.transaction_id = dt.transaction_id

inner join sys.databases d with (nolock) on dt.database_id = d.database_id

cross apply sys.dm_db_log_stats(dt.database_id) ls

left join sys.dm_exec_requests r on s.session_id = r.session_id

where s.open_transaction_count > 0

This query lists only users who are currently running any data modification transaction. The result above shows us the followings:

- session_id: (or better known as SPID), this can be useful

- host_name: this shows the name of the client machine

- login_name, user_name: this is the "guilty" user who filled the transaction log

- log_reuse_wait_desc: you already know that is this

- tran_count: number of nested transactions opened by the session

- database_name: here you can see how many other databases are affected by this session

- command: if the query is still running then you can see here that command

- status: if you see here sleeping then user does not really run a query just keeps open a transaction

- used_log_size_mb: transaction log amount generated by this session

- total_log_size_mb: total available size of the transaction log of the database

So here you can see that user CORP\mate.farkas already does not run his query but keeps open the transaction. The query keeps opened a transaction in another database as well (ContosoDW) but there it does not create any transaction log. So he forgot to run a ROLLBACK or COMMIT TRANSACTION command.

Now you can call that user and ask him/her to finish that transaction. As a final solution, you can KILL the session_id, but this must be the latest possibility.

Have a question about something in this article? You can receive help directly from the article author. Sign up for a free trial to get started.

Comments (0)