Why is my SAN/Restore so slow?

Hi all

Last night I made a massive mistake and managed to delete the 2 VMDKs containing my Exchange mailboxes and logs.

Never fear, for I had done a full backup of the VMDKs earlier that day with VMware Data Recovery (vDR).

At 2:30am the restore had completed 20%. It's now 13:45 the next day and it's still only on 75%.

Monitoring the SAN using Resource Monitor, I can see Disk I/O is sitting at around 5-10MB/sec. Network Utilisation is around 100-120Mbps.

This was done on a bit of a budget as detailed below but it still should not be this slow in my opinion. Where is my bottleneck?

The iSCSI SAN disks are NL SATA disks. Yes, I know, but it still should not be this slow?

There are 5 1Gb links going into the SAN switch (separate network to Data), but only 1 of these is operational as I messed up the link teaming (chose the wrong type).

Could it be this? Surely if this was maxing out, it'd be at 90%+ utilisation?

The disks are 4 NL SAS (SATA) disks in RAID 5 or 6 (can't remember which, and I can't access the console right now).

Using DiskSpeed, the max read speed is clocked at 229.82MB/sec, which is miles from the 5-10MB/sec it's operating at for this restore.

The Data network (which is the VM Management and Client Access network) is only 100Mbps (this will change to a Gb switch later this week), but I don't think the restore even needs to touch the Data network?

Where is my bottleneck?

I'll see if I can knock up a quick diagram.

I think it just goes through the VM management network, so it will work better with a GbE SAN switch.

The vDR restore would be performed through the Management Interface to the ESX host Server.

If this is the slow point, it could be the issue. I would have a look at the Network Performance Charts in vCenter and see what peaks you've got in the history.

ASKER

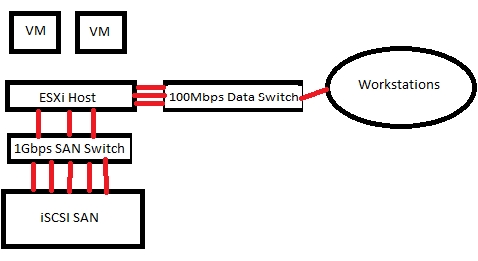

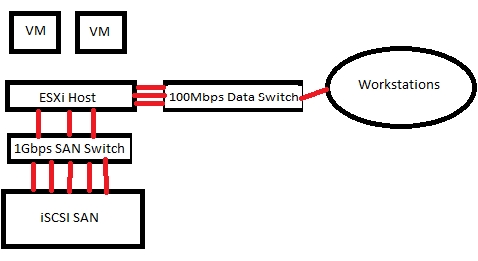

Excuse the quick diagram, serves the purpose.

3 links from ESXi Host to Data Network are obviously stuck at 100Mbps each (soon to change). Teamed within VMware.

3 links from ESXi Host to SAN Switch are 1000Mbps, again teamed in VMware.

5 links from SAN Switch to SAN are 1Gbps links, although I messed up and only 1 is active; the rest are on standby.

Were you restoring a backup from the SAN to the SAN?

ASKER

vDR and the restore target are on the same array of the slower disks... but surely this should not be why?

If the vDR is operating on the Management Network (which, yes, makes sense as it only has 1 interface) then it is stuck at 100Mbps. There is my bottleneck, I assume.

So your vDR appliance repository is on the same array as the VM you are restoring?

ASKER

The backup destination for vDR was created just as a test, while we wait for the NFS drive to arrive (which will be the "proper" backup destination).

It is a coincidence in this case that the backup on the SAN was the only backup of Exchange I had ready to hand, which is why I'm using it.

ASKER

vDR is a Virtual Appliance on the 100Mbps switch, and the ESXi host is also connected to the same switch!

Network communication between the vDR appliance and the ESXi host is 100Mbps!

So your vDR appliance repository is on the same array as the VM you are restoring?

Correct. I guess that answers my question.
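
To put the 100Mbps figure in context, here is a rough Python sketch that converts the link speeds mentioned in this thread into theoretical MB/s ceilings and compares them with the 5-10MB/sec seen during the restore. It ignores protocol overhead, and the function names are made up for illustration only.

```python
# Convert link speeds (megabits per second) to theoretical ceilings in
# megabytes per second, ignoring protocol overhead.

def mbps_to_mb_per_s(mbps: float) -> float:
    """Megabits/s to megabytes/s: 8 bits per byte."""
    return mbps / 8.0

def utilisation(observed_mb_per_s: float, link_mbps: float) -> float:
    """Fraction of the link's raw capacity used by the observed throughput."""
    return observed_mb_per_s / mbps_to_mb_per_s(link_mbps)

# Link speeds taken from the thread.
links = {
    "management network (100 Mbps)": 100.0,
    "SAN, one active link (1 Gbps)": 1000.0,
}
observed = (5.0, 10.0)  # MB/s, the disk I/O range reported during the restore

for name, speed in links.items():
    ceiling = mbps_to_mb_per_s(speed)
    low, high = (utilisation(o, speed) for o in observed)
    print(f"{name}: ceiling {ceiling:.1f} MB/s, restore uses {low:.0%} to {high:.0%}")
```

On these numbers the restore is using a large share of what a 100Mbps link can carry and only a few percent of a single gigabit link, which fits the picture of the management network (and reading and writing on the same array) being the limit rather than the one active SAN link.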

ASKER

Guess I'll just have to sit tight and wait for it to finish. Later this week I should have the hardware available to get the Data network on GbE and also have a separate NFS drive to do backups to.

I will rehearse this restore again and see what performance is like.
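
For anyone wanting a rough idea of how long "sit tight" means, the two progress readings from the original post (20% at 2:30am, 75% at 13:45) can be extrapolated linearly. This is only a sketch: it assumes both readings fall on the same calendar day and that the restore keeps its average rate, which it may not. The date used below is a placeholder.

```python
from datetime import datetime, timedelta

# Progress readings from the original post. The calendar date is a placeholder;
# only the elapsed time between the two readings matters.
t1, p1 = datetime(2000, 1, 1, 2, 30), 20.0    # 02:30, 20% complete
t2, p2 = datetime(2000, 1, 1, 13, 45), 75.0   # 13:45, 75% complete

def estimate_finish(t1, p1, t2, p2):
    """Linear extrapolation: assumes the restore keeps its average rate."""
    elapsed_hours = (t2 - t1).total_seconds() / 3600
    rate_per_hour = (p2 - p1) / elapsed_hours
    hours_left = (100.0 - p2) / rate_per_hour
    return rate_per_hour, hours_left, t2 + timedelta(hours=hours_left)

rate, hours_left, eta = estimate_finish(t1, p1, t2, p2)
print(f"{rate:.1f}% per hour, about {hours_left:.1f} h left, finishing near {eta:%H:%M}")
```

That works out to a little under 5% per hour, so roughly another five hours for the remaining 25% if nothing changes.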

If you are reading from and writing to the same destination, this will be a bottleneck for sure.

We recommend placing the vDR repository on one of the following:

1. Local ESX datastore (remember you can have more than one repository on many different platforms: NFS, CIFS and local ESX datastores).

2. SAN LUN (but not the same SAN LUN as other VMs).

3. NFS SAN (or backup NAS) using fast 1GbE/2GbE links, and Jumbo Frames.

4. CIFS share on SAN or Windows Servers using fast 1GbE/2GbE links.

Also set up the NIC teaming; that will help.

Also, if you can, use a NIC team for the management network. I use 2 GbE NICs in a team for my vclient machine.

ASKER

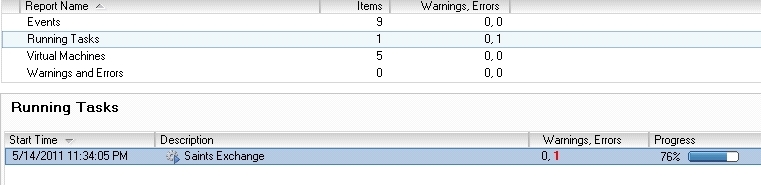

Although the restore is still going, I can see in the vDR view there is 1 error registered against the restore, but I can't find the error message anywhere.

Does it not show up until the restore has finished?

We've done a lot of work in the vDR area, so any questions, just ask.

If possible, split your vDR repository, e.g. so that each one is no larger than 500GB.

And make sure you have fast links.

And TEST, TEST, TEST and TEST some more.

Local ESX disk is fast.

Check the main vDR log.

ASKER

After this restore I will set up the links between the SAN and the SAN switch to use Adaptive Load Balancing, to make use of all 5 links.

I shall be using number 3 on that list later this week.

Just make sure you get those Jumbo Frames enabled!

ASKER

The current switch does not support Jumbo Frames, but the replacement switch will :)

How much % increase would you expect to see?

Screenshot of restore: you can see the error, but I can't work out where to see what it says?
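
On the Jumbo Frames percentage question, a back-of-envelope sketch of the framing overhead alone looks like this. It assumes plain TCP over IPv4 with no header options, a jumbo MTU of 9000 (a common value, not one stated in this thread), and full-size frames. It ignores VLAN tags and iSCSI/NFS protocol headers, so treat it as an upper-level estimate of wire efficiency only.

```python
# Per-frame overhead on the wire for TCP/IPv4 over Ethernet, in bytes.
ETHERNET_OVERHEAD = 8 + 14 + 4 + 12   # preamble+SFD, header, FCS, inter-frame gap
IP_TCP_HEADERS = 20 + 20              # IPv4 + TCP headers, no options (assumption)

def payload_efficiency(mtu: int) -> float:
    """Fraction of wire time that carries TCP payload for full-size frames."""
    payload = mtu - IP_TCP_HEADERS
    on_wire = mtu + ETHERNET_OVERHEAD
    return payload / on_wire

standard = payload_efficiency(1500)   # standard Ethernet MTU
jumbo = payload_efficiency(9000)      # assumed jumbo MTU
gain = jumbo / standard - 1
print(f"MTU 1500: {standard:.2%}, MTU 9000: {jumbo:.2%}, relative gain {gain:.1%}")
```

So the framing overhead by itself accounts for only a few percent. Any larger improvement in practice would come from fewer packets for hosts and switches to process, which depends on the hardware, and it will not help while a 100Mbps hop or a shared array is the limit.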

Have a look at Warnings and Errors, otherwise the main vDR log.

To view the basic data recovery logs:

1. In the vSphere Client, click Home > Solutions and Applications > VMware Data Recovery.

2. Enter the virtual machine name or IP address of the backup appliance and click Connect.

3. Click the Configuration tab.

4. Click Log under Configure.

Where is the vDR repository stored?

And where is the target destination?