ncomper

asked on

VMware VDR backup times

Hi All,

I have started using the free VDR (VMware Data Recovery) backup software from VMware. We have the following configuration in place:

3 Host Cluster

10 Windows servers (2008)

20 Red Hat servers

Each host has its own VDR appliance installed to prevent any access issues, and each VDR has 6-10 VMs in individual jobs. The VDR appliances are configured as follows:

VDR - OS installed on the production SAN

iSCSI - 2x 500 GB drives linked from a QNAP device

Backup timescales:

Red Hat server - approx. 1-2 hours for a complete initial backup (80 GB)

Windows server - approx. 6-14 hours for a complete backup (120 GB)

I know that the Windows machines are somewhat larger and will have more applications running than the Red Hat ones, but is there a way to check the speed of the backups to ensure that they are running correctly and have no underlying issues (I/O performance or otherwise)?

Many thanks,
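As a rough first check, the figures quoted above can be turned into an average throughput. This is only a sketch: it assumes the sizes are gigabytes with 1 GB = 1024 MB and that the whole volume was actually transferred (VDR deduplicates and may skip empty blocks, so real rates can differ). The function name `throughput_mb_per_s` is just illustrative.

```python
def throughput_mb_per_s(size_gb: float, hours: float) -> float:
    """Average transfer rate in MB/s, assuming 1 GB = 1024 MB."""
    return size_gb * 1024 / (hours * 3600)


# Sizes and durations are taken from the post: (size in GB, (fastest h, slowest h)).
jobs = {
    "Red Hat (80 GB)": (80, (1, 2)),
    "Windows (120 GB)": (120, (6, 14)),
}

for name, (size_gb, (fastest, slowest)) in jobs.items():
    low = throughput_mb_per_s(size_gb, slowest)
    high = throughput_mb_per_s(size_gb, fastest)
    print(f"{name}: {low:.1f} to {high:.1f} MB/s")
```

On these numbers the Red Hat backups average roughly 11 to 23 MB/s while the Windows backups average roughly 2.4 to 5.7 MB/s, so the gap is far larger than the size difference alone would explain.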

ASKER

I am only allowing a single backup to take place at a time until I am happy that the process is working, and to ensure that backup 1 will not impact backup 2.

How long are the backups taking after the initial backup?

ASKER

We are still working on the initial backup; we have not yet started any incremental jobs.

ASKER

I have completed additional checks and can add the following information that may help:

On the QNAP device I have Balance-rr configured on ports 1+2.

I have spoken with a few people and had a suggestion to drop this to a single port and test. I have also done a little research and found the following:

http://www.qnap.com/en/index.php?sn=7004

This points to potentially using Balance-alb or 802.3ad aggregation instead. Has anyone had experience of this, and do you think these are the correct steps moving forward?

ASKER

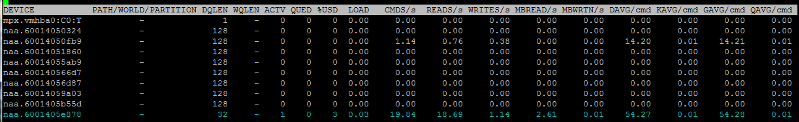

Running esxtop, I can see the following performance issues (this is while a VDR restore is in progress).

Can anyone review the image and let me know if there is anything I can look into to improve this?

ASKER CERTIFIED SOLUTION

ASKER

Afternoon all, and thanks for the feedback.

In essence the above was correct: this was mismanagement of IP addressing (a bad route). Once I moved the iSCSI drive onto the local ESX network, so that no routing was required, the speed improved massively.
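For anyone wanting to check for the same problem, a quick way to see whether iSCSI traffic has to cross a router is to compare the target address with the subnet of the ESX storage interface. The sketch below uses Python's standard `ipaddress` module; the function name `needs_routing` and the addresses are made up for illustration and are not from my environment.

```python
import ipaddress


def needs_routing(vmkernel_cidr: str, target_ip: str) -> bool:
    """Return True if target_ip lies outside the interface's own subnet."""
    network = ipaddress.ip_interface(vmkernel_cidr).network
    return ipaddress.ip_address(target_ip) not in network


# Hypothetical addresses: the ESX storage interface and two candidate targets.
print(needs_routing("192.168.10.5/24", "192.168.10.50"))  # same subnet
print(needs_routing("192.168.10.5/24", "192.168.20.50"))  # different subnet
```

If the function returns True, the traffic is leaving the local segment and depends on whatever route sits in between.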

I found out that the latency between the servers and the SAN, along with the multiple jobs running, forced the connection to fail. In turn this stressed the ESX 4.1 boxes and caused all sorts of issues. Using the esxtop command, I managed to narrow down the location of the issue.
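A rough way to sample that latency from any machine on the same network is to time TCP connections to the iSCSI target. This is only a sketch and not a replacement for esxtop: it assumes the target listens on the standard iSCSI port 3260, it measures connection setup time rather than disk latency, and the function name and the address in the usage comment are hypothetical.

```python
import socket
import time


def connect_latency_ms(host: str, port: int = 3260, samples: int = 5,
                       timeout: float = 2.0) -> list[float]:
    """Time `samples` TCP connects to host:port and return each in milliseconds."""
    results = []
    for _ in range(samples):
        start = time.perf_counter()
        # Open and immediately close a connection; only the handshake is timed.
        with socket.create_connection((host, port), timeout=timeout):
            pass
        results.append((time.perf_counter() - start) * 1000.0)
    return results


# Example usage (hypothetical QNAP address):
# times = connect_latency_ms("192.168.10.50")
# print(f"min {min(times):.2f} ms, max {max(times):.2f} ms")
```

On a local, unrouted segment the connect times should be small and consistent; large or erratic values suggest the path is worth investigating.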

But once the VM has been backed up once, incremental backups should be faster.