Excel: Borrow SQL statement from text file when populating Excel as external source

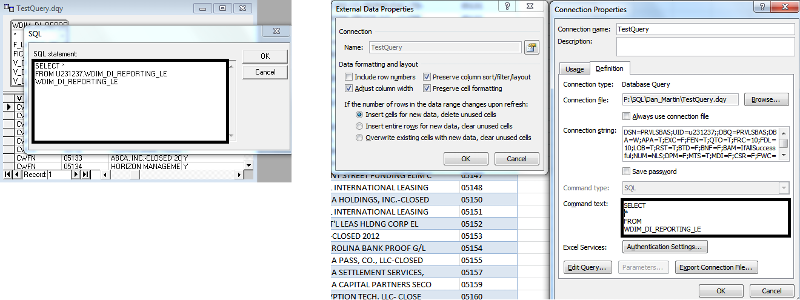

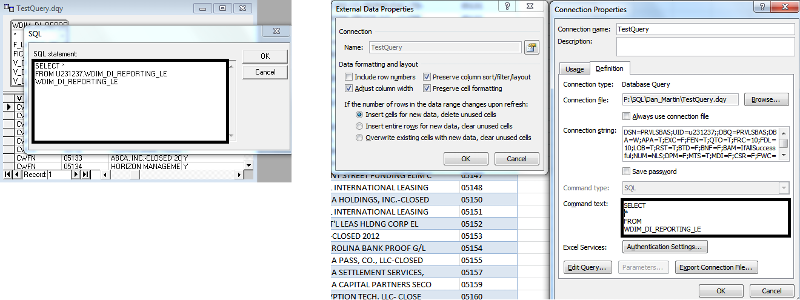

I have a fairly long SQL statement, around 65KB, from which I would like to migrate its dataset to Excel. I have two main methods I use for importing data into Excel: Microsoft Query, and the Command Text dialogue within the Properties of an externally derived dataset. See the image below.

The problem with both of these is that they have a limit of how much SQL content they can take. 65KB turns out to be more SQL code than either of these can handle. I am curious about whether I can use Notepad to create a SQL statement in its own text file, and then use Excel to establish a connection to this source. The query would actually have two sources: the connection string, establishing the data source and the text file containing the SQL statement.

The following is the technique I'm currently using:

Figuring out how to have Excel execute a SQL statement by reading it directly from a text file would provide the following advantages:

All of this seems like a good idea to me, and I'm here to ask you experts if the current technology allows for what I'm envisioning. Thanks! ~Peter Ferber

The problem with both of these is that they have a limit of how much SQL content they can take. 65KB turns out to be more SQL code than either of these can handle. I am curious about whether I can use Notepad to create a SQL statement in its own text file, and then use Excel to establish a connection to this source. The query would actually have two sources: the connection string, establishing the data source and the text file containing the SQL statement.

The following is the technique I'm currently using:

1.

Within VBA or VB.net, create an ADO connection to the desired data source.2.

Import a text file containing my long SQL statement, execute that statement with the ADO connection, and store the results into an ADO recordset.3.

Export the contents of the recordset to a tab-separated text file.4.

Read the text file directly into Excel.Figuring out how to have Excel execute a SQL statement by reading it directly from a text file would provide the following advantages:

Override the code-size limitation inherent in Microsoft Query and the Command Text dialogue.

Streamline the process by eliminating step 3, thus saving time by eliminating the creation of one redundant copy of the dataset.

Replace the link with Excel from static to dynamic, enabling analysts to see changes to the underlying data source by pushing the "Refresh" button.

Simplify the work of maintaining the SQL statement, by editing the text file instead of having to go into Microsoft Query or the Command-Text dialogue within Excel.

All of this seems like a good idea to me, and I'm here to ask you experts if the current technology allows for what I'm envisioning. Thanks! ~Peter Ferber

ASKER

Hello, fanpages, and thanks for your comments. The main issue I have is with the limitation of Microsoft Query and the Command Text dialogue, which allow for someting less than 64KB of text when generating a SQL statement. Before bumping into this limit, I was perfectly content to use these two tools to create external data sources. I generally prefer external data sources because they are so clean, and being able to press the "refresh" button to insure that the presented dataset and the underlying tables that make up that dataset are in synch is tremendously alluring. The fly in the ointment came when I copied and pasted a fairly long SQL statement into the two mentioned dialogues, and found that they both truncated my query, with no way to add more text.

The 4 steps I list above were my way around this limitation. The ADO recordset object, both in VBA and VB.Net, can generate results from a SQL statement weighing in at more than 64KB; and, to make this work, I sacrificed my direct link to the data source. The consequence of that sacrifice is that, if the underlying tables change, I must run my process again to have my dataset reflect those changes.

I actually have a coded procedure that automatically produces manilla text files of SQL statements, and it will typically concatenate multiple quarters into one dataset, "daisy chaining" SQL blocks with "APPEND ALL" clauses, and several quarters, daisy chained together, often makes for a lengthy SQL statement.

To reiterate my question, I seek to get around the 64KB limitation while maintaining a direct link to the data source. What accomplishes these two goals will satisfy my requirement. If I can link to a SQL statement that is written as a text file, that's icing on the cake, because my VB code is already responsible for generating finished SQL-code files: finessed and ready to run.

Thanks, ~Peter Ferber

The 4 steps I list above were my way around this limitation. The ADO recordset object, both in VBA and VB.Net, can generate results from a SQL statement weighing in at more than 64KB; and, to make this work, I sacrificed my direct link to the data source. The consequence of that sacrifice is that, if the underlying tables change, I must run my process again to have my dataset reflect those changes.

I actually have a coded procedure that automatically produces manilla text files of SQL statements, and it will typically concatenate multiple quarters into one dataset, "daisy chaining" SQL blocks with "APPEND ALL" clauses, and several quarters, daisy chained together, often makes for a lengthy SQL statement.

To reiterate my question, I seek to get around the 64KB limitation while maintaining a direct link to the data source. What accomplishes these two goals will satisfy my requirement. If I can link to a SQL statement that is written as a text file, that's icing on the cake, because my VB code is already responsible for generating finished SQL-code files: finessed and ready to run.

Thanks, ~Peter Ferber

can you paste one of your >64kb sql queries here please? (as text, in a code block, not an image)

ASKER

Well, sure, if this will help. The following actually weighs in at only 28KB, but you'll get the idea looking at it. It covers 20 quarters, from 2011Q3 to 2014Q2. The code works off a generic template, replacing placeholders for actual dates: 2011Q3, 2011Q4, 2012Q1, etc. The archive tables are named for the last day of each respective quarter: 20110930, 20111231, 20120330, etc. You can calculate the total SQL statement size as the generic template times the number of quarters covered, plus the UNION ALL's. Growing the template or increasing the number of quarters covered will grow the SQL volume accordingly. I just tested the Command Text dialogue, and it did handle this much code, but I suspect it is close to its limit. ~Peter Ferber

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2011Q3' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20110930 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20110930 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20110930 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20111231 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '30-Sep-2011'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2011Q4' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20111231 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20111231 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20111231 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20111231 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '31-Dec-2011'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2012Q1' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20120331 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20120331 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20120331 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20120331 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '31-Mar-2012'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2012Q2' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20120630 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20120630 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20120630 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20120630 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '30-Jun-2012'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2012Q3' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20120930 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20120930 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20120930 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20120930 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '30-Sep-2012'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2012Q4' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20121231 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20121231 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20121231 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20121231 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '31-Dec-2012'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2013Q1' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20130331 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20130331 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20130331 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20130331 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '31-Mar-2013'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2013Q2' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20130630 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20130630 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20130630 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20130630 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '30-Jun-2013'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2013Q3' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20130930 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20130930 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20130930 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20130930 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '30-Sep-2013'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2013Q4' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20131231 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20131231 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20131231 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20131231 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '31-Dec-2013'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2014Q1' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20140331 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20140331 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20140331 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20140331 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '31-Mar-2014'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

UNION ALL

SELECT

V_BOOK_TRANSIT_NAME As AU

, 'BQR' AS BAND_TYPE

, LOBus.V_RC_CODE_LVL1_DESC as LOB

, Exposures.N_PD

, Exposures.N_LGD

, COUNT (*) AS Num_Exp

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN 1

ELSE 0

END

) AS Num_Def_Exp

, SUM (Exposures.N_EXP_GL_BALANCE) AS Outstanding_Bal

, SUM (Exposures.N_COMMITMENT_AMT) AS Commit_Amt

, MAX (Exposures.N_EXP_GL_BALANCE) AS Max_Bal

, MIN (Exposures.N_EXP_GL_BALANCE) AS Min_Bal

, AVG (Exposures.N_EXP_GL_BALANCE) AS Avg_Bal

, SUM (Exposures.N_EAD_AMT_ADV) AS EAD

, SUM (Exposures.N_CAPITAL_CHARGE_ADV) AS Capital_Charge

, SUM (Exposures.N_RISK_BASED_CAPITAL_ADV) AS Risk_Based_Capital

, SUM (Exposures.N_EL_AMT) AS Expected_Loss

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EXP_GL_BALANCE

ELSE 0

END

) AS Def_Bal

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_RISK_BASED_CAPITAL_ADV

ELSE 0

END

) AS Def_RBC

, SUM

(

CASE

WHEN NVL (Exposures.F_EXP_DEFAULTED_FLAG, 'N') = 'Y'

THEN Exposures.N_EL_AMT

ELSE 0

END

) AS Def_EL

, '2014Q2' AS YEAR_QTR

FROM

REVARCBIII.GET_EXPOSURES_20140630 Exposures

JOIN REVARCBIII.GET_REPORT_LEGAL_ENTITY_20140630 RPT_LE

ON Exposures.V_COMPANY_CODE = RPT_LE.V_LE_NODE

JOIN REVARCBIII.GET_LINE_OF_BUSINESS_20140630 LOBus

ON Exposures.N_LOB_SKEY = LOBus.N_LOB_SKEY

JOIN REVARCBIII.GET_LOB_ACCOUNTNG_UNIT_20140630 LE_AU

On Exposures.V_BOOK_TRANSIT_CODE = LE_AU.V_BOOK_TRANSIT_CODE

WHERE

NVL (Exposures.F_GL_EXPOSURE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_INTERNAL_TRADE_FLAG, 'N') <> 'Y'

AND NVL (Exposures.F_RTCRRI_FLAG, 'N') <> 'Y'

AND RPT_LE.V_DI_NODE = 'CWFN'

AND Exposures.FIC_MIS_DATE = '30-Jun-2014'

GROUP BY

V_BOOK_TRANSIT_NAME

, LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

, Exposures.FIC_MIS_DATE

ORDER BY

LOBus.V_RC_CODE_LVL1_DESC

, Exposures.N_PD

, Exposures.N_LGD

Thank you, yes this really does help me understand.

A quick observation or two.

You should remove all ORDER BY clauses except the last, and that last one can refer to the column aliases, e.g.

In the example query you start at '2011Q3' and progress to '2014Q2'

Can you retain all the old quarters in a workbook? and only refresh the quarter that needs it, and progress at change of quarter by retaining the now past quarter in the workbook etc.

OR

Could you not iterate the queries, one quarter on each iteration? or one year on each iteration?

A quick observation or two.

You should remove all ORDER BY clauses except the last, and that last one can refer to the column aliases, e.g.

ORDER BY AU , BAND_TYPE , LOB

In the example query you start at '2011Q3' and progress to '2014Q2'

Can you retain all the old quarters in a workbook? and only refresh the quarter that needs it, and progress at change of quarter by retaining the now past quarter in the workbook etc.

OR

Could you not iterate the queries, one quarter on each iteration? or one year on each iteration?

ASKER

The first idea about ordering is interesting, it may be an unfortunate nature of the beast, since I have a template that reiterates itself, and it's possible that there would be only one. One idea to segregate the ordering clause from the rest of the query, so that it's guaranteed to happen only once, at the end of the query. Part of the elegance of my system is that the template simply gets replicated, with the exception that the placeholders are replaced with the appropriate quarter. Another thought is to have the code search for the order clause, and put it in only at the end. Stuff to ponder: thank you.

Retaining old quarters in a workbook is not really feasible, because the procedure creates a new workbook each time the process runs, with the list of quarters the user has specified for the run. Nothing, by its nature, is retained. If it were, this would be a fine idea.

Your last point: I don't really understand it. My process is a kind of cookie-cutter procedure, in which a template containing placeholders undergoes a series of search-and-replace processes that generates one quarter's worth of functional SQL code. In an industrial process of self-replication, the procedure strings together functional, quarter-specific code blocks, separating each with a "UNION ALL" clause.

Finally, getting back to my original question, I'm trying to get past the 64KB limitation that both Microsoft Query and the Command Text dialogue imposes (double click the image at the end of my original question for a better look at the two environments that impose this limitation). I reiterate the key issue that this post seeks to address. The other information is interesting, but amounts to side trips to the central question I'm posing.

Thanks, ~Peter Ferber

Retaining old quarters in a workbook is not really feasible, because the procedure creates a new workbook each time the process runs, with the list of quarters the user has specified for the run. Nothing, by its nature, is retained. If it were, this would be a fine idea.

Your last point: I don't really understand it. My process is a kind of cookie-cutter procedure, in which a template containing placeholders undergoes a series of search-and-replace processes that generates one quarter's worth of functional SQL code. In an industrial process of self-replication, the procedure strings together functional, quarter-specific code blocks, separating each with a "UNION ALL" clause.

Finally, getting back to my original question, I'm trying to get past the 64KB limitation that both Microsoft Query and the Command Text dialogue imposes (double click the image at the end of my original question for a better look at the two environments that impose this limitation). I reiterate the key issue that this post seeks to address. The other information is interesting, but amounts to side trips to the central question I'm posing.

Thanks, ~Peter Ferber

"My process is a kind of cookie-cutter procedure, in which a template containing placeholders undergoes a series of search-and-replace processes that generates one quarter's worth of functional SQL code. "

>> then execute that sql query for that quarter

>> APPEND to the workbook

>> repeat (until done)

This way you are only executing small queries none of them using UNION ALL

OR an option would be to do this for each year, perhaps a little more complex.

Personally I'd go with the "run each quarter" approach as it probably isn't too big a change to the existing script's logic.

>> then execute that sql query for that quarter

>> APPEND to the workbook

>> repeat (until done)

This way you are only executing small queries none of them using UNION ALL

OR an option would be to do this for each year, perhaps a little more complex.

Personally I'd go with the "run each quarter" approach as it probably isn't too big a change to the existing script's logic.

ASKER

Your idea is intriguing but not practical. The problem is really with Microsoft Query and the Command Text dialogue: I don't like the way they reformat my queries, getting rid of all my carefully laid out stylistics, and the limitation of the query size in these two applications is the problem I am asking the experts here to address. I know that the problem is not with the ADO connection itself, because it has handled a SQL statement that is larger than 64KB. Also, I just discovered a new problem with Microsoft Query: it doesn't seem to be able to handle a nested query: when I had a SELECT clause inside parantheses, it gave me the error, "Couldn't Add the table '('". Aaaaaaarg.

I think that both Microsoft Query and the Command Text dialogue are weak engines, also bogged down with a size limitation. What would be nice is if Excel attached to a robust query engine that takes a manilla text file name as a parameter and process the contents it finds there, keeping the stylistics of the file intact while taking, as input, the SQL statement that constitutes the file. I'm open to the (sad) prospect that Excel may not provide this functionality. I will give full credit to the one who can definitively answer that question. Thanks, ~Peter Ferber

I think that both Microsoft Query and the Command Text dialogue are weak engines, also bogged down with a size limitation. What would be nice is if Excel attached to a robust query engine that takes a manilla text file name as a parameter and process the contents it finds there, keeping the stylistics of the file intact while taking, as input, the SQL statement that constitutes the file. I'm open to the (sad) prospect that Excel may not provide this functionality. I will give full credit to the one who can definitively answer that question. Thanks, ~Peter Ferber

>>" the limitation of the query size in these two applications is the problem I am asking the experts here to address. "

yes, exactly, that is what my suggestion addresses

I'm not suggesting you do this manually...

using VBA:

I'm not writing that VBA for you however

yes, exactly, that is what my suggestion addresses

I'm not suggesting you do this manually...

using VBA:

grab template sql

change the necessary values for A quarter

run this query which appends to the workbook

repeat until you reach the current quarter

I'm not writing that VBA for you however

ASKER CERTIFIED SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.

ASKER

The solution, though imperfect, is far better than anything up with which the experts came. The experience was frustrating for me, as there was a persistence to push a solution I didn't want, and not actually answering the question I was asking.

Have you considered specifying the required SQL statement within a (different, potentially hidden) worksheet within the same workbook, but distinct from the worksheet where the results will be displayed?

Also, "3. Export the contents of the recordset to a tab-separated text file" & your further request; "Streamline the process by eliminating step 3, thus saving time by eliminating the creation of one redundant copy of the dataset".

Implementing step 3 will be additional effort for which Expert (or Experts) assist you here.

Step 3 is not required at all (unless, again, you have a specific requirement to export the resultant dataset to the tab-separated text file).

Finally, what is it specifically that you require help with in your (non-trivial) requirements list?

If you respond with "All of it" you are likely to receive less assistance than attempting some of this task yourself, & seeking guidance if/when you are struggling with certain automated tasks.