MS SQL Server time between records

In the SQL Below I would like to know... how much time elapsed between records

In other words...

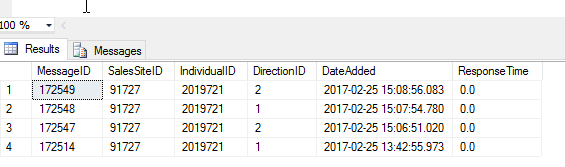

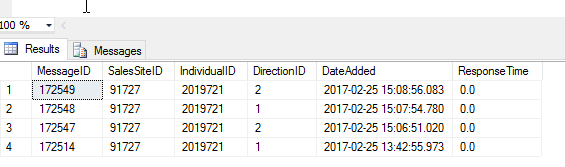

It appears there was a minute and 2 seconds between MessageID 172549

and MessageID 172548

And a Minute 3 seconds between 172548 and 172547

I assume there will need to be RowNumbers implemented some way???

In other words...

It appears there was a minute and 2 seconds between MessageID 172549

and MessageID 172548

And a Minute 3 seconds between 172548 and 172547

I assume there will need to be RowNumbers implemented some way???

DECLARE @IndividualID BIGINT

SET @IndividualID = 2019721

SELECT MessageID ,

SalesSiteID ,

IndividualID,

DirectionID ,

DateAdded,

0.0 ResponseTime

FROM dbo.ewMessages

WHERE DateAdded > GETDATE() - 1

AND ISNULL(IndividualID, 0) > 0

AND IndividualID = @IndividualID

ORDER BY IndividualID ,

SalesSiteID ,

MessageID DESC;

ASKER

Agx

I am traveling at the moment but will take a look this evening EST

I am traveling at the moment but will take a look this evening EST

The answer may depend on which version on MS SQL Server you use.

LEAD() and LAG() are the relevant functions for this request (starting with 2012), but you may not have them available to you. e.g.

NULL cannot be compared using equals, or not equals

so this predicate:

AND IndividualID = @IndividualID

will never return any rows where IndividualID is null

so that makes this predicate:

AND ISNULL(IndividualID, 0) > 0

redundant. Plus, using ISNULL() this way can be a clause of slowness in queries.

Be wary of using functions in the where clause, avoid them if possible.

---- & ----

In future questions would you add some re-usable sample data instead of an image?

e.g. copy/paste the data from that result into a code block within the question

[+edit]just like _agx_ did in that answer - which I had not seen while preparing mine :)

LEAD() and LAG() are the relevant functions for this request (starting with 2012), but you may not have them available to you. e.g.

DECLARE @IndividualID bigint

SET @IndividualID = 2019721

SELECT

MessageID

, SalesSiteID

, IndividualID

, DirectionID

, DateAdded

, LAG(DateAdded) OVER(PARTITION BY SalesSiteID,IndividualID

ORDER BY DateAdded desc) AS PrevDateAdded

, DATEDIFF(second,LAG(DateAdded) OVER(PARTITION BY SalesSiteID,IndividualID

ORDER BY DateAdded desc), DateAdded) AS ResponseTime

FROM dbo.ewMessages

WHERE DateAdded > GETDATE() - 1

AND IndividualID = @IndividualID

ORDER BY

IndividualID

, SalesSiteID

, MessageID DESC

;DECLARE @IndividualID bigint

SET @IndividualID = 2019721

SELECT

MessageID

, SalesSiteID

, IndividualID

, DirectionID

, DateAdded

, DATEDIFF(SECOND,PrevDateAdded, DateAdded) AS ResponseTime

FROM dbo.ewMessages AS d

OUTER APPLY (

SELECT TOP 1

DateAdded AS PrevDateAdded

FROM dbo.ewMessages AS p

WHERE d.SalesSiteID = p.SalesSiteID

AND d.IndividualID = p.IndividualID

ORDER BY DateAdded DESC

) AS OA

WHERE DateAdded > GETDATE() - 1

AND IndividualID = @IndividualID

ORDER BY

IndividualID

, SalesSiteID

, MessageID DESC

;NULL cannot be compared using equals, or not equals

so this predicate:

AND IndividualID = @IndividualID

will never return any rows where IndividualID is null

so that makes this predicate:

AND ISNULL(IndividualID, 0) > 0

redundant. Plus, using ISNULL() this way can be a clause of slowness in queries.

Be wary of using functions in the where clause, avoid them if possible.

---- & ----

In future questions would you add some re-usable sample data instead of an image?

e.g. copy/paste the data from that result into a code block within the question

[+edit]just like _agx_ did in that answer - which I had not seen while preparing mine :)

ASKER CERTIFIED SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.

ASKER

Sure thing

I will be at a desk later this evening

I will be at a desk later this evening

@PortletPaul - Cool. Didn't know about LEAD/LAG in 2012. Learn something new .... :-)

SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.

ASKER

Spectacular.

Implemented and working flawlessly

Implemented and working flawlessly

Out of curiosity, did you ever try the query I suggested?

Edit: Wondering how it stacked up against Paul's ;-) If it didn't work, it's good to know why in case it's something to avoid in future.

Edit: Wondering how it stacked up against Paul's ;-) If it didn't work, it's good to know why in case it's something to avoid in future.

@_agx_

I would expect the row_number() based approach to work too.

Hard to gauge the performance implications without testing but the apply operator is often surprisingly efficient even if it does involve a correlated subquery (anecdotally - the optimiser appears to handle this particularly well).

Had LEAD/LAG been available I would expect that approach to be the best for performance.

I would expect the row_number() based approach to work too.

Hard to gauge the performance implications without testing but the apply operator is often surprisingly efficient even if it does involve a correlated subquery (anecdotally - the optimiser appears to handle this particularly well).

Had LEAD/LAG been available I would expect that approach to be the best for performance.

>> the apply operator is often surprisingly efficient even if it does involve a correlated subquery

@PortletPaul - Funny you should say that. I've always liked the "cleaner" apply syntax, but worried about the correlated subquery aspect. I'll have to look into that more and get a better idea of what it's doing beneath the hood. Thanks!

@PortletPaul - Funny you should say that. I've always liked the "cleaner" apply syntax, but worried about the correlated subquery aspect. I'll have to look into that more and get a better idea of what it's doing beneath the hood. Thanks!

ASKER

Hey guys

I ran them all against a very large table of data and the one I chose came out slightly quicker.

That was the only reason I chose it

And oh yeah.... the lag and lead was fun to see as well

I ran them all against a very large table of data and the one I chose came out slightly quicker.

That was the only reason I chose it

And oh yeah.... the lag and lead was fun to see as well

Thanks for the feedback - always useful - and appreciated!

Ditto. Thanks guys!

Open in new window

Query:

Open in new window