abhishake yadav


Extract information from a list using Tcl

Hello,

I have multiple log files which contain values like this with headers:

I want to make a header file that uses each entry in column 1 as an individual column header, with the min-max range for each of them underneath, presented in column format.

Info in log files:

Trace Header Min Max Mean

aaa 1 6 xx

bbb 2 7 xxx

What I want:

aaa bbb

1-6 2-7

Thanks for help
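Here is a minimal bash/awk sketch of the transformation in the simplified example. It assumes whitespace-separated columns, a single header line to skip, and that the second and third columns are MIN and MAX. `transpose_ranges` is a hypothetical helper name, not part of any existing tool.

```bash
#!/usr/bin/env bash
# Hypothetical helper: turn "name min max mean" rows into two rows,
# a row of names followed by a row of "min-max" ranges (tab-separated).
# Assumes one header line (skipped) and whitespace-separated columns.
transpose_ranges() {
  awk 'NR > 1 && NF >= 3 {
         names[++n] = $1          # column 1 becomes a column header
         ranges[n]  = $2 "-" $3   # MIN-MAX as a single string
       }
       END {
         for (i = 1; i <= n; i++) printf "%s%s", names[i],  (i < n ? "\t" : "\n")
         for (i = 1; i <= n; i++) printf "%s%s", ranges[i], (i < n ? "\t" : "\n")
       }' "$@"
}
```

Piping the result through `column -t` (already used in the script further down) lines the columns up.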

The actual log files contain values like this under their headers:

TRACE HEADER====================================================================================================================

MIN MAX MEAN COUNT

Trace sequence number within line [001-004]: 1 140400 70200.50 140400

Trace sequence number within SEGY file [005-008]: 1 140400 70200.50 140400

Original field record number [009-012]: 1001 4900 2950.50 140400

Trace number within original field record [013-016]: 1 36 18.50 140400

Energy source point [017-020]: 1001 4900 2950.50 140400

Not used for NFH [021-024]: 0 0 0.00 140400
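Because the real field names contain a varying number of words, picking values by awk field number means a different `$N` for every line. A sketch that avoids this splits each line at `]:` instead. It assumes every data line has the shape `name [bytes]: MIN MAX MEAN COUNT`, as in the sample above. `trace_ranges` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print "name<TAB>MIN-MAX" for each line shaped like
#   Trace sequence number within line [001-004]: 1 140400 70200.50 140400
# Splitting at "]:" means the number of words in the name does not matter.
trace_ranges() {
  awk -F']:' 'NF == 2 {
      name = $1
      sub(/ *\[.*/, "", name)    # drop the trailing " [001-004" byte range
      split($2, v, " ")          # v[1] = MIN, v[2] = MAX
      print name "\t" v[1] "-" v[2]
  }' "$@"
}
```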

ASKER

I tried using split and regex but to no avail. I can extract all the info with a bash script, but since I am new to Tcl I couldn't do much.
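For comparison, here is a regex-based sketch of the same parse using bash's `[[ =~ ]]` and the `BASH_REMATCH` array. The pattern is an assumption derived from the sample lines: a name, a byte range in brackets, then numeric MIN and MAX values, which may be negative. `parse_trace_line` is a hypothetical name, and the `|` output separator is arbitrary.

```bash
#!/usr/bin/env bash
# Hypothetical helper: parse one trace-header line with a single regex.
# Prints "name|bytes|MIN-MAX", or returns 1 if the line does not match.
parse_trace_line() {
  # Groups: 1 = field name, 2 = byte range, 3 = MIN, 4 = MAX
  local re='^(.*[^ ]) +\[([0-9]+-[0-9]+)]: +(-?[0-9.]+) +(-?[0-9.]+)'
  if [[ $1 =~ $re ]]; then
    printf '%s|%s|%s-%s\n' "${BASH_REMATCH[1]}" "${BASH_REMATCH[2]}" \
      "${BASH_REMATCH[3]}" "${BASH_REMATCH[4]}"
  else
    return 1   # not a trace-header data line (e.g. the MIN MAX MEAN COUNT header)
  fi
}
```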

ASKER

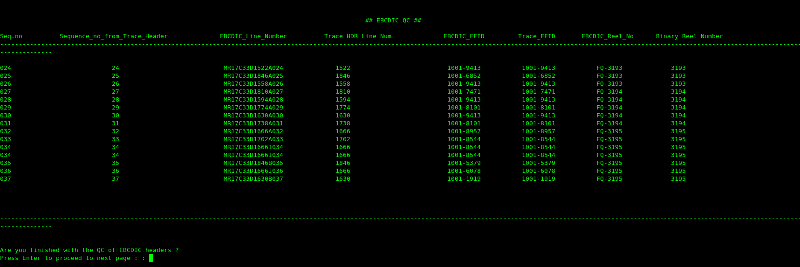

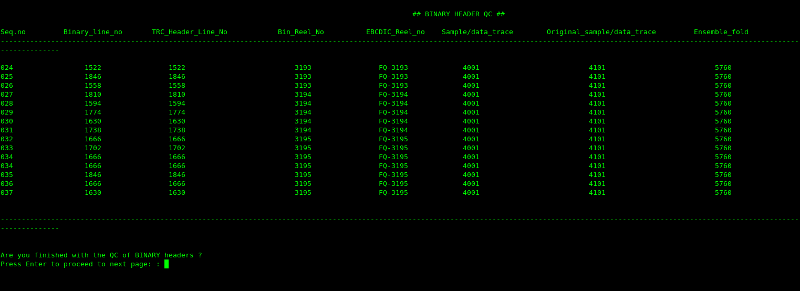

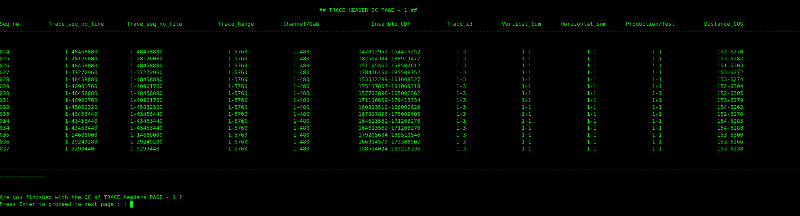

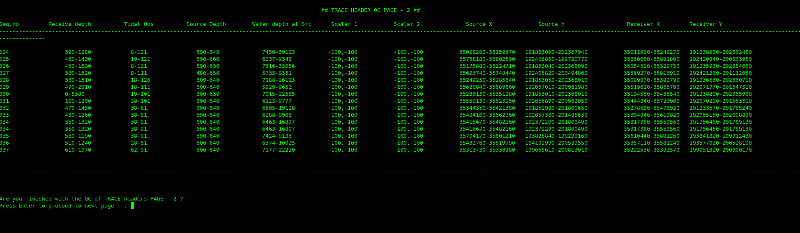

The whole log file is:

FILE SUMMARY====================================================================

Filename: ./MR17C33D1666J036.sgy

Filesize: 42892224 B

41886.94 KiB

40.91 MiB

0.04 GiB

0.00 TiB

EBCDIC HEADER===================================================================

C01 CLIENT: TULLOW MAURITANIA LTD PROJECT NO: MRBKC3_17_3D_S1_PS

C02 SURVEY: MRBKC3_17_3D_S1_PS CONTRACTOR: POLARCUS ADIRA AS

C03 SAIL LINE: MR17C33D1666J036 SEQUENCE: 036 DATE: 30 Oct 2017

C04 DATA TYPE: FAR FIELD SIGNATURE REEL: FP 309

C05 FORMAT THIS FILE: SEG-Y SAMPLE CODE: 4BYTE IBM FLOATING PT SAMPLE RATE:0.5MS

C06 DATA TRACES/RECORD: 0 AUX TRACES/RECORD: 1 BYTES/SAMPLE: 4

C07 ACQUISITION PARAMETERS: CONTINUOUS RECORDING MODE: ON

C08 VESSEL : M/V POLARCUS ALIMA ENERGY SOURCE : BOLT LL/LLXT

C09 POSITIONING : FUGRO STARFIX HP VOLUME : 3 X 2495 CU.IN

C10 : FUGRO STARFIX G2 GUN DEPTH/SEPAR : 6M / 37.5M

C11 FORMAT : SEGD 8058 REV 1.0

C12 SHOT INTERVAL : 12.5M

C13 REC LENGTH/SI : 1024MS/0.5MS

C14 HYDROPHONE TYPE : AGH 7720 C RECORDING SYSTEM : SEAMAP GUNLINK

C15 LOW-CUT FILTER: 2HZ 6dB/OCT (ANALOG) HIGH-CUT FILTER : 200HZ 370DB/OCT

C16

C17 GAIN: 0DB; POLARITY : SEG FOR CAUSAL DATA, PRESSURE ONSET - NEGATIVE VALUE

C18 ES ANTENNA IL OFFSET TO NEAREST CMP: 150M

C19 GEODETIC PARAMETERS: COORDINATE UNITS : INT METERS

C20 DATUM : WGS84 SPHEROID : WGS84

C21 PROJECTION : UTM ZONE : 28N

C22

C23

C24

C25

C26 TRACE HEADER REMAPS (NAME, FIRST BYTE, FORMAT, UNITS;):

C27

C28 SOURCE MEASUREMENT UNIT,231,2I,PSI;

C29 NAV GUN_MASK(1-STBD,2-CENTER,3-PORT),211,2I;

C30 SEGD GUN_MASK(3-STBD,12-CENTER,48-PORT),213,2I;

C31 CDP BIN GRID (ORIGIN REFERS TO IL:XL=0:0):

C32 ORIGIN: X=322993.75; Y=1905944.00; DI(XLINE INC)=6.25M; DJ(ILINE INC)=18.75M

C33 AZJ(IL,LINE HEADING)=0 DEG; AZI(XL)=90 DEG; (ALL CLOCKWISE FROM NORTH)

C34 GRID CORNERS : INLINE=0800, XLINE=00562 X=337993.75, Y=1909456.50

C35 INLINE=2245, XLINE=00562 X=365087.50, Y=1909456.50

C36 INLINE=2245, XLINE=19786 X=365087.50, Y=2029606.50

C37 INLINE=0800, XLINE=19786 X=337993.75, Y=2029606.50

C38

C39 PROCESSED & QC'ED BY: POLARCUS ALIMA GEOPHYSICAL DEPARTMENT

C40 END EBCDIC

BINARY FILE HEADER==============================================================

Job identification number 44317

Line number 1666

Reel number 309

#Data traces per ensemble 0

#Auxiliary traces per ensemble 1

Sample interval 500

Sample interval - field record 500

#Samples per data trace 2049

#Samples per data trace - field record 2049

Data format sample code 1

Ensemble fold 1

Trace sorting code 1

Vertical sum code 1

Amplituide recovery method 1

Measurement system 1

Impulse signal polarity 1

SEGY revision number - major 1

SEGY revision number - minor 0

Fixed length trace flag 1

#Extended textual header blocks 0

TRACE HEADER====================================================================================================================

MIN MAX MEAN COUNT

Trace sequence number within line [001-004]: 1 5084 2542.50 5084

Trace sequence number within SEGY file [005-008]: 1 5084 2542.50 5084

Original field record number [009-012]: 995 6078 3536.50 5084

Trace number within original field record [013-016]: 1 1 1.00 5084

Energy source point [017-020]: 2867 7950 5408.50 5084

Ensemble number [021-024]: 0 0 0.00 5084

Trace number within ensemble [025-028]: 0 0 0.00 5084

Trace identification code [029-030]: 0 0 0.00 5084

Vertical summed traces yielding this trace [031-032]: 1 1 1.00 5084

Horizontally stacked traces yielding this trace [033-034]: 1 1 1.00 5084

Data Use [035-036]: 1 1 1.00 5084

Distance from COS to centre of receiver group [037-040]: 0 0 0.00 5084

Receiver group depth (scaler #1) [041-044]: 0 0 0.00 5084

Tidal correction to vertical datum (scaler #1) [045-048]: 0 0 0.00 5084

Source depth (scaler #1) [049-052]: 0 0 0.00 5084

Water depth at source (scaler #1) [061-064]: 0 0 0.00 5084

Scalar #1 (-ve => divisor) [069-070]: 0 0 0.00 5084

Scalar #2 (-ve => divisor) [071-072]: 0 0 0.00 5084

Source coordinate - X (scalar #2) [073-076]: 354203 354278 354240.66 5084

Source coordinate - Y (scalar #2) [077-080]: 1941782 2005319 1973550.47 5084

Receiver group coordinate - X (scalar #2) [081-084]: 0 0 0.00 5084

Receiver group coordinate - Y (scalar #2) [085-088]: 0 0 0.00 5084

Coordinate units [089-090]: 1 1 1.00 5084

Water velocity (as used in p1 water depth) [091-092]: 1501 1501 1501.00 5084

Sample skew (Scalar #4) [095-096]: 0 0 0.00 5084

Water bottom time (Scalar #4) [097-098]: 0 0 0.00 5084

Source static correction (Scalar #4) [099-100]: 0 0 0.00 5084

Receiver group static correction (Scalar #4) [101-102]: 0 0 0.00 5084

Total static correction applied (Scalar #4) [103-104]: 0 0 0.00 5084

Lag time A (Scalar #4) [105-106]: 0 0 0.00 5084

lag time B (Scalar #4) [107-108]: 0 0 0.00 5084

Delay recording time (Scalar #4) [109-110]: 0 0 0.00 5084

Number of samples in this trace [115-116]: 2049 2049 2049.00 5084

Sample interval for this trace [117-118]: 500 500 500.00 5084

Gain type of field instruments [119-120]: 1 1 1.00 5084

Instrument gain - constant [121-122]: 0 0 0.00 5084

Instrument early/initial gain [123-124]: 0 0 0.00 5084

Streamer section serial number [125-128]: 0 0 0.00 5084

Section sensitivity scalar [129-130]: 0 0 0.00 5084

Anti-alias filter frequency [141-142]: 200 200 200.00 5084

Anti-alias filter slope [143-144]: 370 370 370.00 5084

Bandpass filter type [147-148]: 0 0 0.00 5084

Low cut filter frequency [149-150]: 2 2 2.00 5084

High cut frequency [151-152]: 200 200 200.00 5084

Low cut filter slope [153-154]: 6 6 6.00 5084

High cut filter slope [155-156]: 370 370 370.00 5084

Year data recorded [157-158]: 2017 2017 2017.00 5084

Day of year data recorded [159-160]: 303 303 303.00 5084

Hour of day data recorded [161-162]: 10 19 14.91 5084

Minute of hour data recorded [163-164]: 0 59 29.80 5084

Second of minute data recorded [165-166]: 0 59 29.53 5084

Time basis code [167-168]: 4 4 4.00 5084

This file elevation datum [171-172]: 2 2 2.00 5084

Final survey elevation datum [173-174]: 1 1 1.00 5084

Ensemble coordinate - X [181-184]: 354203 354278 354240.66 5084

Ensemble coordinate - Y [185-188]: 1941782 2005319 1973550.47 5084

3D inline number [189-192]: 0 0 0.00 5084

3D crossline number [193-196]: 0 0 0.00 5084

Shotpoint number (scalar #3) [197-200]: 2867 7950 5408.50 5084

Scalar #3 (-ve => divisor) [201-202]: 0 0 0.00 5084

Trace value measurement [203-204]: 3 3 3.00 5084

Cable identification number [205-206]: 0 0 0.00 5084

Acquisition sequence number [207-208]: 36 36 36.00 5084

Numerical sail line number [209-210]: 0 0 0.00 5084

Gun mask [211-212]: 1 3 2.00 5084

Original Gun Mask [213-214]: 3 48 21.00 5084

Scalar #4 (-ve => divisor) [215-216]: -10 -10 -10.00 5084

Start time of data [221-222]: 0 0 0.00 5084

End time of data [223-224]: 1024 1024 1024.00 5084

Source measurement [225-228]: 2000 2000 2000.00 5084

Source measurement - mantissa [229-230]: 0 0 0.00 5084

Source measurement unit [231-232]: -1 -1 -1.00 5084

Continuous recording Delta [233-236]: 0 0 0.00 5084

--------------------------------------------------------------------------------------------------------------------------------

TRACES PROCESSED: 5084

DATA PROCESSED: 40.91 MiB

ELAPSED TIME: 0.96 s

PROCESSING RATE: 42.80 MiB/s

================================================================================================================================

ASKER

One of the other problems I faced was separating the log file itself into major chunks, but I can't download Tcllib's textutil::split due to limited access.
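Without Tcllib, one way to cut a log into its major sections is a single awk pass that opens a new output file at every `NAME=====` line. This sketch assumes section headers are upper-case words followed only by `=` characters, as in the log above. The `split_sections` name and the `TRACE_HEADER.txt`-style file names are hypothetical. Lines after the last header, including the `TRACES PROCESSED` summary and the closing `=====` line, end up in the last section's file.

```bash
#!/usr/bin/env bash
# Hypothetical helper: split_sections LOGFILE [OUTDIR]
# Writes each "NAME=====" section to OUTDIR/NAME.txt (spaces become underscores).
split_sections() {
  local log=$1 outdir=${2:-.}
  awk -v dir="$outdir" '
    /^[A-Z][A-Z ]*=+$/ {             # a section header such as TRACE HEADER=====
      if (out != "") close(out)      # finish the previous section file
      name = $0
      sub(/=+$/, "", name)           # strip the trailing equals signs
      sub(/ +$/, "", name)
      gsub(/ /, "_", name)
      out = dir "/" name ".txt"
      next
    }
    out != "" { print > out }        # lines before the first header are skipped
  ' "$log"
}
```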

Can you post your working bash script? Then we can look at translating it to Tcl.

I still don't understand how you want the output file to look - can you post a sample?
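One possible reading of the requested layout for several logs is a header row with the TRACE HEADER field names, followed by one row per log file holding `MIN-MAX` for each field. This sketch assumes every log lists the same fields in the same order, and that the section runs from the `TRACE HEADER===` line to the next line of dashes, as in the full log above. `trace_table` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: trace_table LOG...
# Prints "File<TAB>name1<TAB>name2..." once, then "file<TAB>MIN-MAX<TAB>..." per log.
trace_table() {
  awk '
    function emit_row() {
      if (!hdr_done) { print "File" hdr; hdr_done = 1 }
      print row
    }
    FNR == 1 {                       # first line of a new log file
      if (NR > 1) emit_row()         # emit the row for the previous file
      nfiles++; insec = 0; row = FILENAME
    }
    /^TRACE HEADER=/ { insec = 1; next }
    insec && /^-+$/  { insec = 0 }   # dashed line closes the section
    insec && index($0, "]:") {
      split($0, parts, "]:")
      name = parts[1]
      sub(/ *\[.*/, "", name)        # drop the byte range in brackets
      split(parts[2], v, " ")        # v[1] = MIN, v[2] = MAX
      if (nfiles == 1) hdr = hdr "\t" name
      row = row "\t" v[1] "-" v[2]
    }
    END { if (NR > 0) emit_row() }
  ' "$@"
}
```

Since the field names themselves contain spaces, `trace_table *.log | column -t -s $'\t'` should align the tab-separated output.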


ASKER

Hi Duncan,

Please see the script:

#!/bin/bash

#location of the header log files

#Script to QC log files generated by SEGY-HCM script , created by Abhishake

clear

loc=/alima/large_files/ali044317-tullow-mauritania/navmerge/headers

cd "$loc"

log_size=15671

cd $loc/Logs

rm line_name binary_line_num seq_no ebcdic_sp ebcdic_ffid ebcdic_reel binary_reel_no trc_count 2>/dev/null

rm 61_trc_seq 64_trc_line_num ebcdic_qc ebcdic_qc_ ebcdic_qc__ 2>/dev/null

rm bin_qc bin_qc_ bin_qc__ 2>/dev/null

rm 1 2 5 7 8 9_tid 10 11 12 13 14 15 16 17 18 trc_qc_1 trc_qc_1_ trc_qc_1__ 2>/dev/null

rm 19 20 21 22 23 24 25 26_skw_stat 27_wbt 28 29 30 trc_qc_2 trc_qc_2_ trc_qc_2__ 2>/dev/null

rm 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 trc_qc_3 trc_qc_3_ trc_qc_3__ 2>/dev/null

rm 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 trc_qc_4 trc_qc_4_ trc_qc_4__ 2>/dev/null

rm 62 63 65 66 67_sens 68 69 70 71 72 73 74 75 trc_qc_5 trc_qc_5_ trc_qc_5__ 2>/dev/null

rm mb_size 4_trc_ffid 6_trc_sp fold trc_qc_6 trc_qc_6_ trc_qc_6__ sample_data sample_orig 2>/dev/null

rm trc_qc_7 trc_qc_7_ trc_qc_7__ 2>/dev/null

rm trc_qc_8 trc_qc_8_ trc_qc_8__ 2>/dev/null

rm trc_qc_s trc_qc_s_ trc_qc_s__ not_used not_used_boat jitter orig_delay 2>/dev/null

#---------------some check to see if logs are still being written by the IRDB daemon--------------------------------------------------

nfiles=$(ls -l $loc | wc -l)

true_nfiles=$(( $nfiles - 2 ))

tot_size=$(( $true_nfiles * $log_size ))

tot_sum=$( ls -l $loc | tail -"$true_nfiles" | awk '{print $5}' | awk '{ sum+=$1} END {print sum}')

#tot_sum_2=$(( $tot_sum + $log_size ))

if

[[ $tot_size -eq $tot_sum ]]

then

echo

echo

echo

read -p "Enter the number of sequences you want to see (counted back from the last sequence): " range

echo

echo

echo

else

echo "Logs are still running let them finish and try again"

exit 1

fi

if

[[ $range =~ ^[0-9]+$ ]]

then

echo

echo

echo " "

echo

echo

else

echo

echo

echo "The number should be an integer"

echo

echo

exit 1

fi

if

[[ $range -gt $true_nfiles ]] #2>/dev/null

then

echo

echo

echo

echo

echo "Please enter a sane choice: the number you entered is more than the total number of SEG-Y files present. The total number of files to QC is $true_nfiles SEG-Y files; please enter a number no greater than this."

echo

echo "If you enter zero you will see nothing! Run the script again with a sensible range."

echo

echo

echo

echo

exit 1

else

clear

echo " "

cd "$loc"

for filename in *

do

[ -f "$filename" ] || continue   # skip the Logs directory and anything else that is not a regular file

#-------------------------------------------EBCDIC PART----------------------------------------------------------------------------------------------------

grep "C03" $filename | awk '{print $4 }' >> $loc/Logs/line_name

grep "C03" $filename | awk '{print $6 }' >> $loc/Logs/seq_no

#grep "C03" $filename | awk -F - '{print $1" " $2 }' | awk '{ printf "%-5s %s\n",$6 , $7}'| awk '{print $1"-"$2}' >> $loc/Logs/ebcdic_sp

grep "C04" $filename | awk -F : '{print $3 }' | awk '{print $1}' | awk -F - '{printf "%-5s %s\n", $1 , $2}' | awk '{print $1"-"$2}'>> $loc/Logs/ebcdic_ffid

grep "C04" $filename | awk -F : '{print $4 }' | awk '{print $1"-"$2}' >> $loc/Logs/ebcdic_reel

#------------------------------------------------BINARY PART------------------------------------------------------------------------------------------------

grep "Line number" $filename | awk '{print $3}' >> $loc/Logs/binary_line_num

grep "Reel number" $filename | awk '{print $3}' >> $loc/Logs/binary_reel_no

grep "#Samples per data trace" $filename | awk '{print $5}' | head -1 >> $loc/Logs/sample_data

grep "#Samples per data trace - field record" $filename | awk '{print $8}' >> $loc/Logs/sample_orig

grep "Ensemble fold" $filename | awk '{print $3}' >> $loc/Logs/fold

#--------------------------------------------------------------TRACE HEADER PART----------------------------------------------------------------------------

grep "Trace sequence number within line" $filename |awk '{print $7"-"$8}' >> $loc/Logs/1

grep "Trace sequence number within SEGY file" $filename |awk '{print $8"-"$9}' >> $loc/Logs/2

grep "Original field record number" $filename | awk '{printf "%-5s %s\n" , $6, $7}' | awk '{print $1"-"$2}'>> $loc/Logs/4_trc_ffid

grep "Trace number within original field record" $filename |awk '{print $8"-"$9}' >> $loc/Logs/5

grep "Energy source point" $filename | awk '{printf "%-5s %s\n" , $5, $6}'| awk '{print $1"-"$2}' >> $loc/Logs/6_trc_sp

grep "Ensemble number" $filename| awk '{print $4"-"$5}' >> $loc/Logs/7

grep "Trace number within ensemble" $filename |awk '{print $6"-"$7}' >> $loc/Logs/8

grep "Trace identification code" $filename | awk '{print $5 "-" $6}' >> $loc/Logs/9_tid

grep "Vertical summed traces yielding this trace" $filename |awk '{print $8"-"$9}' >> $loc/Logs/10

grep "Horizontally stacked traces yielding this trace" $filename |awk '{print $8"-"$9}' >> $loc/Logs/11

grep "Data Use" $filename | awk '{print $4"-"$5}' >> $loc/Logs/12

grep "Distance from COS to centre of receiver group" $filename |awk '{print $10"-"$11}' >> $loc/Logs/13

grep "Receiver group depth (scaler #1)" $filename | awk '{print $7"-"$8}' >> $loc/Logs/14

grep "Tidal correction to vertical datum (scaler #1)" $filename |awk '{print $9"-"$10}' >> $loc/Logs/15

grep "Source depth (scaler #1)" $filename | awk '{print $6"-"$7}' >> $loc/Logs/16

grep "Water depth at source (scaler #1)" $filename | awk '{print $8"-"$9}' >> $loc/Logs/17

grep "Scalar #1 (-ve => divisor)" $filename | head -1 | awk '{print $7","$8}' >> $loc/Logs/18

grep "Scalar #2 (-ve => divisor)" $filename | head -2 | tail -1 | awk '{print $7","$8}' >> $loc/Logs/19

grep "Source coordinate - X (scalar #2)" $filename | awk '{print $8"-"$9}' >> $loc/Logs/20

grep "Source coordinate - Y (scalar #2)" $filename | awk '{print $8"-"$9}' >> $loc/Logs/21

grep "Receiver group coordinate - X (scalar #2)" $filename | awk '{print $9"-"$10}' >> $loc/Logs/22

grep "Receiver group coordinate - Y (scalar #2)" $filename | awk '{print $9"-"$10}' >> $loc/Logs/23

grep "Coordinate units" $filename | awk '{print $4"-"$5}' >> $loc/Logs/24

grep "Water velocity (as used in p1 water depth)" $filename | awk '{print $10"-"$11}' >> $loc/Logs/25

grep "Sample skew (Scalar #4)" $filename | awk '{print $6"-"$7}' >> $loc/Logs/26_skw_stat

grep "Water bottom time (Scalar #4)" $filename | awk '{print $7"-"$8}' >> $loc/Logs/27_wbt

grep "Source static correction (Scalar #4)" $filename | awk '{print $7"-"$8}' >> $loc/Logs/28

grep "Receiver group static correction (Scalar #4)" $filename | awk '{print $8"-"$9}' >> $loc/Logs/29

grep "Total static correction applied (Scalar #4)" $filename | awk '{print $8"-"$9}' >> $loc/Logs/30

grep "Lag time A (Scalar #4)" $filename | awk '{print $7"-"$8}' >> $loc/Logs/31

grep "lag time B (Scalar #4)" $filename | awk '{print $7"-"$8}' >> $loc/Logs/32

grep "Delay recording time (Scalar #4)" $filename | awk '{print $7"-"$8}' >> $loc/Logs/33

grep "Number of samples in this trace" $filename | awk '{print $10}' >> $loc/Logs/orig_delay

grep "Sample interval for this trace" $filename | awk '{print $8}' >> $loc/Logs/34

grep "Gain type of field instruments" $filename | awk '{print $9}' >> $loc/Logs/35

grep "Instrument gain - constant" $filename | awk '{print $8}' >> $loc/Logs/36

grep "Instrument early/initial gain" $filename | awk '{print $7}' >> $loc/Logs/37

grep "Streamer section serial number" $filename | awk '{print $6"-"$7}' >> $loc/Logs/38

grep "Section sensitivity scalar" $filename | awk '{print $5"-"$6}' >> $loc/Logs/39

grep "Anti-alias filter frequency" $filename | awk '{print $5"-"$6}' >> $loc/Logs/40

grep "Anti-alias filter slope" $filename | awk '{print $7}' >> $loc/Logs/41

grep "Bandpass filter type" $filename | awk '{print $7}' >> $loc/Logs/42

grep "Low cut filter frequency" $filename | awk '{print $8}' >> $loc/Logs/43

grep "High cut frequency" $filename | awk '{print $5"-"$6}' >> $loc/Logs/44

grep "Low cut filter slope" $filename | awk '{print $8}' >> $loc/Logs/45

grep "High cut filter slope" $filename | awk '{print $8}' >> $loc/Logs/46

grep "Year data recorded" $filename | awk '{print $5"-"$6}' >> $loc/Logs/47

grep "Day of year data recorded" $filename | awk '{print $7"-"$8}' >> $loc/Logs/48

grep "Hour of day data recorded" $filename | awk '{print $7"-"$8}' >> $loc/Logs/49

grep "Minute of hour data recorded" $filename | awk '{print $7"-"$8}' >> $loc/Logs/50

grep "Second of minute data recorded" $filename | awk '{print $7"-"$8}' >> $loc/Logs/51

grep "Time basis code" $filename | awk '{print $7}' >> $loc/Logs/52

grep "This file elevation datum" $filename | awk '{print $8}' >> $loc/Logs/53

grep "Final survey elevation datum" $filename | awk '{print $8}' >> $loc/Logs/54

grep "Ensemble coordinate - X" $filename | awk '{print $6"-"$7}' >> $loc/Logs/55

grep "Ensemble coordinate - Y" $filename | awk '{print $6"-"$7}' >> $loc/Logs/56

grep "3D inline number" $filename | awk '{print $5"-"$6}' >> $loc/Logs/57

grep "3D crossline number" $filename | awk '{print $5"-"$6}' >> $loc/Logs/75

grep "Shotpoint number (scalar #3)" $filename | awk '{print $6"-"$7}' >> $loc/Logs/58

grep "Scalar #3 (-ve => divisor)" $filename | tail -1 | awk '{print $7","$8}' >> $loc/Logs/59

grep "Trace value measurement" $filename | awk '{print $5"-"$6}' >> $loc/Logs/60

grep "Cable identification number" $filename | awk '{print $6}' >> $loc/Logs/61_trc_seq

grep "Acquisition sequence number" $filename | awk '{print $6}' >> $loc/Logs/62

grep "Numerical sail line number" $filename | awk '{print $7}' >> $loc/Logs/63

grep "Gun mask" $filename | awk '{print $4"-"$5}' >> $loc/Logs/64_trc_line_num

grep "Original Gun Mask" $filename | awk '{print $5"-"$6}' >> $loc/Logs/65

grep "Scalar #4 (-ve => divisor)" $filename | awk '{print $7","$8}' >> $loc/Logs/66

grep "Start time of data" $filename | awk '{print $6"-"$7}' >> $loc/Logs/67_sens

grep "End time of data" $filename | awk '{print $6"-"$7}' >> $loc/Logs/68

grep "Source measurement" $filename | awk '{print $6}' | head -1 >> $loc/Logs/69

grep "Source measurement - mantissa" $filename | awk '{print $6"-"$7}' >> $loc/Logs/70

grep "Source measurement unit" $filename | awk '{print $5","$6}' >> $loc/Logs/71

grep "Continuous recording Delta" $filename | awk '{print $5"-"$6}' >> $loc/Logs/72

#--------------------------------New addition from version 2 -----------------------------------

#grep "Not used" $filename | awk '{print $6}' | awk '{ sum+=$1} END {print sum}' >> $loc/Logs/not_used

#grep "boat" $filename | awk '{print $7}' | awk '{ sum+=$1} END {print sum}' >> $loc/Logs/not_used_boat

#grep "Value obs jitter in aux chan timing. Not used for NFH" $filename | awk '{print $15}' >> $loc/Logs/jitter

#---------------------------------------------------------------SUMMARY PART-----------------------------------------------------------------------------------

grep "TRACES PROCESSED:" $filename | awk '{print $3}' >> $loc/Logs/trc_count

grep "DATA PROCESSED:" $filename | awk '{print $3}' | awk -F . '{print $1}' >>$loc/Logs/mb_size

done

#--------------------------------------------------------------Display part--------------------------------------------------------------------------------------

cd "$loc"/Logs

paste seq_no 62 line_name 63 ebcdic_ffid 4_trc_ffid ebcdic_reel binary_reel_no > ebcdic_qc

paste seq_no binary_line_num 63 binary_reel_no ebcdic_reel sample_data sample_orig fold > bin_qc

paste seq_no 1 2 5 8 7 9_tid 10 11 12 13 > trc_qc_1

paste seq_no 14 15 16 17 18 19 20 21 22 23 > trc_qc_2

paste seq_no 24 25 26_skw_stat 27_wbt 28 29 30 31 > trc_qc_3

paste seq_no 32 33 orig_delay 34 35 36 37 38 39 > trc_qc_4

paste seq_no 40 41 42 43 44 45 46 47 > trc_qc_5

paste seq_no 48 49 50 51 52 53 54 > trc_qc_6

paste seq_no 55 56 57 75 58 59 60 62 63 64_trc_line_num 65 > trc_qc_7

paste seq_no 66 67_sens 68 69 70 71 72 > trc_qc_8

#cat trc_qc_7

#----

paste seq_no trc_count mb_size > trc_qc_s

#-----

sort -t $'\t' -k 1,1 -V ebcdic_qc > ebcdic_qc_

column -t ebcdic_qc_ > ebcdic_qc__

sort -t $'\t' -k 1,1 -V bin_qc > bin_qc_

column -t bin_qc_ > bin_qc__

sort -t $'\t' -k 1,1 -V trc_qc_1 > trc_qc_1_

column -t trc_qc_1_ > trc_qc_1__

sort -t $'\t' -k 1,1 -V trc_qc_2 > trc_qc_2_

column -t trc_qc_2_ > trc_qc_2__

sort -t $'\t' -k 1,1 -V trc_qc_3 > trc_qc_3_

column -t trc_qc_3_ > trc_qc_3__

sort -t $'\t' -k 1,1 -V trc_qc_4 > trc_qc_4_

column -t trc_qc_4_ > trc_qc_4__

sort -t $'\t' -k 1,1 -V trc_qc_5 > trc_qc_5_

column -t trc_qc_5_ > trc_qc_5__

sort -t $'\t' -k 1,1 -V trc_qc_6 > trc_qc_6_

column -t trc_qc_6_ > trc_qc_6__

sort -t $'\t' -k 1,1 -V trc_qc_7 > trc_qc_7_

column -t trc_qc_7_ > trc_qc_7__

sort -t $'\t' -k 1,1 -V trc_qc_8 > trc_qc_8_

column -t trc_qc_8_ > trc_qc_8__

#--------------------------------------

sort -t $'\t' -k 1,1 -V trc_qc_s > trc_qc_s_

column -t trc_qc_s_ > trc_qc_s__

#----------------Screen display part -----------------------------------------------------------------------------------------------------------------------------------------------------------

#--------------------------------EBCDIC QC-------------------------------------------------------------------

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "" ## EBCDIC QC ## "

echo " "

echo Seq.no" " Sequence_no_from_Trace_Header" "EBCDIC_Line_Number" "Trace HDR Line Num" "EBCDIC_FFID" "Trace_FFID" "EBCDIC_Reel_No" "Binary Reel Number" "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-30s%-30s%-30s%-30s%-20s%-20s%-20s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8}' ebcdic_qc__ > ebcdic_qc___

cat ebcdic_qc___ | tail -$range

echo " "

echo " "

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

echo "Are you finished with the QC of EBCDIC headers ? "

read -p "Press Enter to proceed to next page : : " choice

if

[[ -z "$choice" || "${choice,,}" == "y" ]]; then

clear

echo " "

echo " "" ## BINARY HEADER QC ## "

echo " "

echo Seq.no" " Binary_line_no" "TRC_Header_Line_No" "Bin_Reel_No" "EBCDIC_Reel_no" "Sample/data_trace" "Original_sample/data_trace" "Ensemble_fold" "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-30s%-20s%-20s%-30s%-30s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8}' bin_qc__ > bin_qc___

cat bin_qc___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of BINARY headers ? "

read -p "Press Enter to proceed to next page: : " choice_2

if

[[ -z "$choice_2" || "${choice_2,,}" == "y" ]]; then

clear

echo " "

echo " "" ## TRACE HEADER QC PAGE - 1 ## "

echo " "

echo Seq.no" " Trace_seq_no_line" "Trace_seq_no_file" "Trace_Range" "Channel/Cab" "Ensemble_CDP" "Trace_id" "Vertical_Sum" "Horizontal_sum" "Production/Test" "Distance_COS""

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-30s%-20s%-20s%-30s%-20s%-20s%-20s%-20s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9,$10,$11}' trc_qc_1__ > trc_qc_1___

cat trc_qc_1___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 1 ? "

read -p "Press Enter to proceed to next page : : " choice_3

if

[[ -z "$choice_3" || "${choice_3,,}" == "y" ]]; then

clear

echo " "

echo " "" ## TRACE HEADER QC PAGE - 2 ## "

echo " "

echo Seq.no" " Receiver_depth" "Tidal_Obs" "Source_Depth" "Water_depth_at_Src" "Scalar_1" "Scalar_2" "Source_X" "Source_Y" "Receiver_X" "Receiver_Y""

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-20s%-20s%-20s%-20s%-20s%-20s%-30s%-20s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9,$10,$11}' trc_qc_2__ > trc_qc_2___

cat trc_qc_2___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 2 ? "

read -p "Press Enter to proceed to next page : : " choice_4

if

[[ -z "$choice_4" || "${choice_4,,}" == "y" ]]; then

clear

echo " "

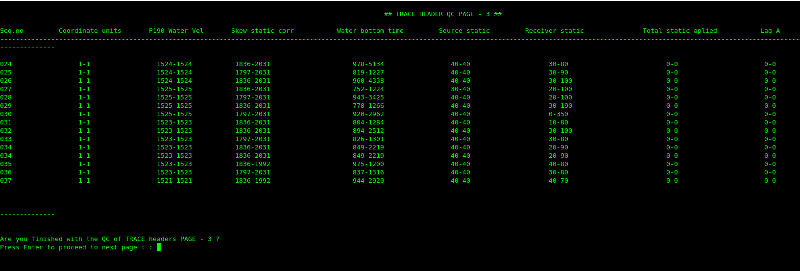

echo " "" ## TRACE HEADER QC PAGE - 3 ## "

echo " "

echo Seq.no" " Coordinate_units" "P190_Water_Vel" "Skew_static_corr" "Water_bottom_time" "Source_static" "Receiver_static" "Total_static_applied" "Lag_A" "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-20s%-30s%-25s%-25s%-30s%-25s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9}' trc_qc_3__ > trc_qc_3___

cat trc_qc_3___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 3 ? "

read -p "Press Enter to proceed to next page : : " choice_5

if

[[ -z "$choice_5" || "${choice_5,,}" == "y" ]]; then

clear

echo " "

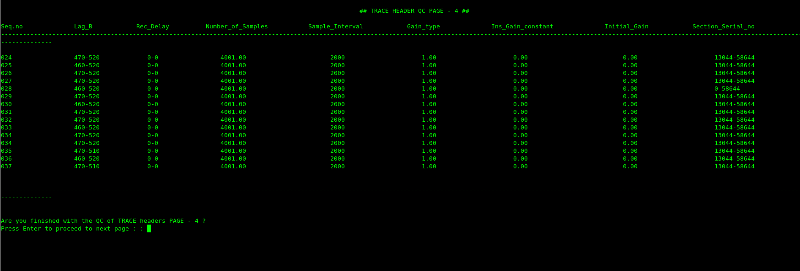

echo " "" ## TRACE HEADER QC PAGE - 4 ## "

echo " "

echo Seq.no" " Lag_B " "Rec_Delay" "Number_of_Samples" "Sample_Interval" "Gain_type" "Ins_Gain_constant" "Initial_Gain" "Section_Serial_no" "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-20s%-30s%-25s%-25s%-30s%-25s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9}' trc_qc_4__ > trc_qc_4___

cat trc_qc_4___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 4 ? "

read -p "Press Enter to proceed to next page : : " choice_6

if

[[ -z "$choice_6" || "${choice_6,,}" == "y" ]]; then

clear

echo " "

echo " "" ## TRACE HEADER QC PAGE - 5 ## "

echo " "

echo Seq.no" "A.A_filter_freq" "A.A_filter_slope" "B.P_filter_type" "LC_Filter_freq" "HC_Filter_freq" "Low_cut_slope" "High_cut_slope" "Year" "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-30s%-30s%-25s%-25s%-30s%-25s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9}' trc_qc_5__ > trc_qc_5___

cat trc_qc_5___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 5 ? "

read -p " Press Enter to proceed to next page : : " choice_7

if

[ "${choice_7,,}"="y" ]; then

clear

echo " "

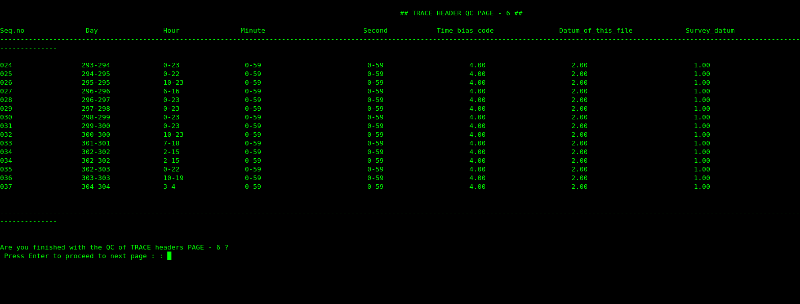

echo " "" ## TRACE HEADER QC PAGE - 6 ## "

echo " "

echo Seq.no" "Day " "Hour " "Minute " "Second " "Time_bias_code" "Datum_of_this_file" "Survey_datum " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-20s%-20s%-30s%-25s%-25s%-30s%-25s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9}' trc_qc_6__ > trc_qc_6___

cat trc_qc_6___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 6 ? "

read -p " Press Enter to proceed to next page : : " choice_8

if

[ "${choice_8,,}"="y" ]; then

clear

echo " "

echo " ## TRACE HEADER QC PAGE - 7 ## "

echo " "

echo Seq.no" "Ensemble_X " "Ensemble_Y " "3D_IL_Number " "3D_XL_Number" "Shotpoint_number" "Scalar3" "Trace value measure" "Seq.No" "Sail line Number" "Gun_Mask" "Original_Gun_mask ""

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-30s%-30s%-20s%-30s%-25s%-25s%-30s%-25s%-25s%-25s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8,$9,$10,$11,$12}' trc_qc_7__ > trc_qc_7___

cat trc_qc_7___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 7 ? "

read -p " Press Enter to proceed to next page : : " choice_8

if

[ "${choice_8,,}"="y" ]; then

clear

echo " "

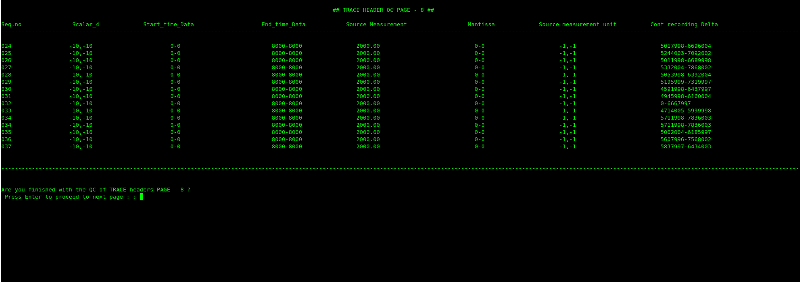

echo " "" ## TRACE HEADER QC PAGE - 8 ## "

echo " "

echo Seq.no" "Scalar_4" "Start_time_Data" "End_time_Data" " Source Measurement" "Mantissa" "Source measurement unit" "Cont recording Delta" "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-20s%-30s%-30s%-25s%-35s%-25s%-30s%-10s\n",$1,$2,$3,$4,$5,$6,$7,$8}' trc_qc_8__ > trc_qc_8___

cat trc_qc_8___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "Are you finished with the QC of TRACE headers PAGE - 8 ? "

read -p " Press Enter to proceed to next page : : " choice_9

if

[ "${choice_9,,}"="y" ]; then

clear

echo " "

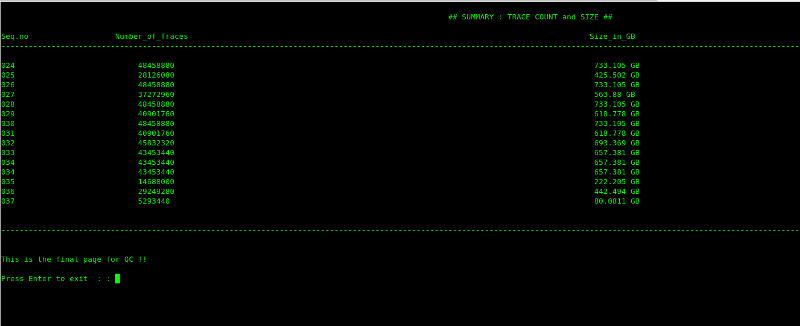

echo " "" ## SUMMARY : TRACE COUNT and SIZE ## "

echo " "

echo Seq.no" "Number_of_Traces" "Size_in_GB " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

awk '{printf "%-30s%-25s%-25s%-25s%-25s%-20s\n",$1,$2,$4,$5,$6, ($3/1024)" ""GB"}' trc_qc_s__ > trc_qc_s___

cat trc_qc_s___ | tail -$range

echo " "

echo " "

echo "---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

#echo " ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- "

echo " "

echo " "

fi

echo "This is the final page for QC !! "

echo " "

read -p "Press Enter to exit : : " choice_10

if

[ "${choice_10,,}"="y" ]; then

clear

fi

rm line_name binary_line_num seq_no ebcdic_ffid ebcdic_reel binary_reel_no trc_count

rm 61_trc_seq 64_trc_line_num ebcdic_qc ebcdic_qc_ ebcdic_qc__

rm bin_qc bin_qc_ bin_qc__

rm 1 2 5 7 8 9_tid 10 11 12 13 14 15 16 17 18 trc_qc_1 trc_qc_1_ trc_qc_1__

rm 19 20 21 22 23 24 25 26_skw_stat 27_wbt 28 29 30 trc_qc_2 trc_qc_2_ trc_qc_2__

rm 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 trc_qc_3 trc_qc_3_ trc_qc_3__

rm 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 trc_qc_4 trc_qc_4_ trc_qc_4__

rm 62 63 65 66 67_sens 68 69 70 71 72 75 trc_qc_5 trc_qc_5_ trc_qc_5__

rm mb_size 4_trc_ffid 6_trc_sp fold trc_qc_6 trc_qc_6_ trc_qc_6__ sample_data sample_orig

rm trc_qc_7 trc_qc_7_ trc_qc_7__

rm trc_qc_8 trc_qc_8_ trc_qc_8__

rm trc_qc_s trc_qc_s_ trc_qc_s__ orig_delay

fi

ASKER

See above the output from the script; it's multiple pages.

I see many blank lines in the script. Are any of these significant?

ASKER

Hi Duncan,

Thanks for the reply.

No, they are not significant; they're just there for spacing.

After removing them, it's still a 449-line script. I was hoping to run it, tweaked to process just the log file you posted, so that I would have a benchmark output file. Will I need extra files to do that?

Alternative approach: I'll post how to translate commands from the top downwards. After converting the first echo command for instance, you should be able to fix all of them.
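For example, each QC page in the bash script repeats the same pattern: an `awk` `printf` with fixed, left-justified column widths, piped through `tail -$range`. A minimal pure-Tcl sketch of that step follows. `formatPage` is a hypothetical helper name, and the sketch assumes fields are whitespace-separated, as with awk's default field splitting.

```tcl
# Hypothetical helper mimicking
#   awk '{printf "%-20s%-20s...\n",$1,$2,...}' file | tail -$range
# text   : contents of a trc_qc_N__ file
# widths : the printf column widths, one per field
# range  : how many of the last rows to keep (like tail -$range)
proc formatPage {text widths range} {
    set rows {}
    foreach line [split [string trimright $text \n] \n] {
        # awk's default field splitting: runs of non-whitespace characters.
        set fields [regexp -all -inline {\S+} $line]
        set row ""
        set i 0
        foreach w $widths {
            # A missing field is an empty string, as $N beyond NF is in awk.
            append row [format "%-${w}s" [lindex $fields $i]]
            incr i
        }
        lappend rows $row
    }
    # Keep only the last $range rows.
    set first [expr {max(0, [llength $rows] - $range)}]
    return [join [lrange $rows $first end] \n]
}
```

For page 5, for instance, you would read `trc_qc_5__` with `open` and `read`, then call `formatPage` with the widths from that page's `printf` format, `{20 20 30 30 25 25 30 25 10}`, and the `range` entered by the user.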

since I am new to Tcl I couldn't do much

How did you come by the Tk script that produces the display you posted above? Tk is a Tcl extension.

I'm having trouble understanding why you are unhappy with the setup as-is. You run your shell script followed by your Tk script, right?

ASKER

Hi Duncan,

I picked up Tk very quickly with the help of a book and online resources as I found it much easier than Tcl.

The display is not ready yet, as I am still working on it. The reason I wanted to shift to Tcl was so that I could integrate the GUI made in Tk with the actual operations the script performs on the log files: Tk doesn't work with bash, and I wanted to supply a couple of variables to the script through entry widgets.

ASKER

No, you will not need additional files. I guess you can just run it on one single file and you will get one row of output.

ASKER

Not "guess"; wrong choice of word.

ASKER

It will run on just one file.

I need to go shortly so will just do the first few lines. I imagine you really want this code to integrate with Tk (so, run under wish rather than tclsh - it does make a difference).

Lines 1-3 remain as-is (yes, really!)

```
#!/bin/bash
#location of the header log files
#Script to QC log files generated by SEGY-HCM script , created by Abhishake
```

Now for ... THE TRICK

```
# Tcl treats the next line as a comment continuation but sh obeys it \
exec wish "$0" -- "$@"
```

You can obey arbitrary shell commands before starting wish:

```
# Tcl treats the next line as a comment continuation but sh obeys it \
echo "Hi there from $0";\
exec wish "$0" -- "$@"
```

This is fun! But I really have to go now. Will do more later.

Here's the next batch

diff -r1.4 ee150.sh

7,9c7,8

< puts [exec clear]

< exit

< loc=/alima/large_files/ali044317-tullow-mauritania/navmerge/headers

---

> puts -nonewline [exec clear]

> set loc /alima/large_files/ali044317-tullow-mauritania/navmerge/headers

11c10

< log_size=15671

---

> set log_size 15671

13,24c12,23

< rm line_name binary_line_num seq_no ebcdic_sp ebcdic_ffid ebcdic_reel binary_reel_no trc_count 2>/dev/null

< rm 61_trc_seq 64_trc_line_num ebcdic_qc ebcdic_qc_ ebcdic_qc__ 2>/dev/null

< rm bin_qc bin_qc_ bin_qc__ 2>/dev/null

< rm 1 2 5 7 8 9_tid 10 11 12 13 14 15 16 17 18 trc_qc_1 trc_qc_1_ trc_qc_1__ 2>/dev/null

< rm 19 20 21 22 23 24 25 26_skw_stat 27_wbt 28 29 30 trc_qc_2 trc_qc_2_ trc_qc_2__ 2>/dev/null

< rm 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 trc_qc_3 trc_qc_3_ trc_qc_3__ 2>/dev/null

< rm 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 trc_qc_4 trc_qc_4_ trc_qc_4__ 2>/dev/null

< rm 62 63 65 66 67_sens 68 69 70 71 72 73 74 75 trc_qc_5 trc_qc_5_ trc_qc_5__ 2>/dev/null

< rm mb_size 4_trc_ffid 6_trc_sp fold trc_qc_6 trc_qc_6_ trc_qc_6__ sample_data sample_orig 2>/dev/null

< rm trc_qc_7 trc_qc_7_ trc_qc_7__ 2>/dev/null

< rm trc_qc_8 trc_qc_8_ trc_qc_8__ 2>/dev/null

< rm trc_qc_s trc_qc_s_ trc_qc_s__ not_used not_used_boat jitter orig_delay 2>/dev/null

---

> catch "exec rm line_name binary_line_num seq_no ebcdic_sp ebcdic_ffid ebcdic_reel binary_reel_no trc_count 2>/dev/null"

> catch "exec rm 61_trc_seq 64_trc_line_num ebcdic_qc ebcdic_qc_ ebcdic_qc__ 2>/dev/null"

> catch "exec rm bin_qc bin_qc_ bin_qc__ 2>/dev/null"

> catch "exec rm 1 2 5 7 8 9_tid 10 11 12 13 14 15 16 17 18 trc_qc_1 trc_qc_1_ trc_qc_1__ 2>/dev/null"

> catch "exec rm 19 20 21 22 23 24 25 26_skw_stat 27_wbt 28 29 30 trc_qc_2 trc_qc_2_ trc_qc_2__ 2>/dev/null"

> catch "exec rm 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 trc_qc_3 trc_qc_3_ trc_qc_3__ 2>/dev/null"

> catch "exec rm 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 trc_qc_4 trc_qc_4_ trc_qc_4__ 2>/dev/null"

> catch "exec rm 62 63 65 66 67_sens 68 69 70 71 72 73 74 75 trc_qc_5 trc_qc_5_ trc_qc_5__ 2>/dev/null"

> catch "exec rm mb_size 4_trc_ffid 6_trc_sp fold trc_qc_6 trc_qc_6_ trc_qc_6__ sample_data sample_orig 2>/dev/null"

> catch "exec rm trc_qc_7 trc_qc_7_ trc_qc_7__ 2>/dev/null"

> catch "exec rm trc_qc_8 trc_qc_8_ trc_qc_8__ 2>/dev/null"

> catch "exec rm trc_qc_s trc_qc_s_ trc_qc_s__ not_used not_used_boat jitter orig_delay 2>/dev/null"

26,29c25,28

< nfiles=$(ls -l $loc | wc -l)

< true_nfiles=$(( $nfiles - 2 ))

< tot_size=$(( $true_nfiles * $log_size ))

< tot_sum=$( ls -l $loc | tail -"$true_nfiles" | awk '{print $5}' | awk '{ sum+=$1} END {print sum}')

---

> set nfiles [exec ls -l $loc | wc -l]

> set true_nfiles [expr $nfiles - 2]

> set tot_size [expr $true_nfiles * $log_size]

> set tot_sum [exec ls -l $loc | tail -$true_nfiles | awk {{print $5}} | awk {{ sum+=$1} END {print sum}}]

31,44c30,46

< if

< [[ $tot_size -eq $tot_sum ]]

< then

< echo

< echo

< echo

< read -p "Enter the range of Sequences you want to see , program will start counting from the last Sequence::::"" " range

< echo

< echo

< echo

< else

< echo "Logs are still running let them finish and try again"

< exit 1

< fi

---

> if {$tot_size == $tot_sum} \

> {

> puts {}

> puts {}

> puts {}

> puts -nonewline {Enter the range of Sequences you want to see , program will start counting from the last Sequence:::: }

> flush stdout

> gets stdin range

> puts {}

> puts {}

> puts {}

> } \

> else \

> {

> puts "Logs are still running let them finish and try again"

> exit 1

> } ;# if {$tot_size == $tot_sum} else

My intention is to do enough so you can do the rest.

In the meantime, please do try to familiarise yourself with the new code as posted so far. All Tcl commands have man pages; they're in section n, so you can enter e.g. man n puts.

When I have a burst of Tcl activity to do, I like to have one terminal window cd'd to /usr/man/mann (you may have /usr/share/man/mann). Then I can ls to remind me what commands are available, or actually see any man page by entering e.g. man ./puts.n.gz. You don't have to enter the full path: just man ./pu <Tab>, for instance.

If you start wish in a terminal window, you can experiment with the commands as posted.

Hi Abhishake,

Is there any difference between this Q and https://www.experts-exchange.com/questions/29066823/Help-in-converting-a-shell-script-to-tcl.html?cid=1752 ?

Sorry for not posting for a few days. I haven't forgotten you.

Cheers ... Duncan.

Here are the explanatory notes to https:#a42363354

I changed puts at line 3 to puts -nonewline to avoid an extra line feed.

The exit at line 4 was just my end-mark as to how far I had progressed. I omitted it from this batch.

Line 5 is changed to the Tcl way of setting variables.

The rm commands in lines 14-25 are cloaked with an exec (how Tcl runs system commands) and a catch (because rm still returns a non-zero exit status when a file is missing, even though 2>/dev/null suppresses its error output, and exec turns that status into a Tcl error). You might want to consider using rm -f, but I'm only translating, not improving.
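If you later want to go beyond a literal translation, Tcl's built-in `file delete` is one option: it does not treat a missing file as an error, so neither `exec` nor `catch` is needed. A minimal sketch, with `removeScratch` as a hypothetical helper name:

```tcl
# Pure-Tcl alternative to:  catch "exec rm ... 2>/dev/null"
# "file delete" does not report an error for a file that does not exist,
# so this behaves much like "rm -f" for plain files.
proc removeScratch {args} {
    foreach name $args {
        # "--" stops option parsing, in case a name starts with "-".
        file delete -- $name
    }
}
```

Usage would be e.g. `removeScratch bin_qc bin_qc_ bin_qc__`. Unlike `rm -rf`, `file delete` refuses to remove a non-empty directory unless `-force` is given, which should not matter for these scratch files.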

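For line 40, translate the bash $() construct to calling exec as a function (square brackets). exec returns the command's stdout when you do this; if the command fails (non-zero exit status, or output on stderr that is not redirected), exec raises an error whose message includes the stderr output.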
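A small sketch of both cases, assuming a Unix-like system where `echo` and `ls` exist as external commands (`/no/such/dir` is just a path assumed not to exist):

```tcl
# [exec ...] plays the role of bash's $( ... ): it returns the command's
# standard output, with one trailing newline removed.
set greeting [exec echo hello]

# A non-zero exit status, or stderr output that is not redirected,
# makes exec raise a Tcl error; catch turns that into a 0/1 flag.
set lsFailed [catch {exec ls /no/such/dir} lsMessage]
# lsMessage now holds the error text, which includes ls's stderr output.
```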

For lines 41 & 42, use the Tcl expr command to do the calculation.
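With braces, `expr` performs the variable substitution itself, which is the usual Tcl idiom (it is faster and avoids double substitution). A sketch using the script's `log_size`, with a made-up file count standing in for the `ls -l | wc -l` result:

```tcl
set log_size 15671
set nfiles 102          ;# hypothetical value of [exec ls -l $loc | wc -l]

# Braced expressions: expr does the $-substitution itself.
set true_nfiles [expr {$nfiles - 2}]
set tot_size [expr {$true_nfiles * $log_size}]
```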

Line 43 is another exec but beware. The Tcl equivalent of bash single-quote is a pair of curly braces. So awk doesn't see the outer curly braces but it does see the inner ones. Curly brace pairs are very fundamental in Tcl - they even have to match inside comments.
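A quick way to check the quoting in an interactive `tclsh` or `wish` is to feed `awk` some literal input with `exec`'s `<<` redirection. This sketch assumes `awk` is on your PATH; the input strings are invented:

```tcl
# Braces work like bash single quotes: Tcl passes {print $2} to awk
# verbatim, so awk (not Tcl) interprets $2. "<< value" feeds value to stdin.
set column2 [exec awk {{print $2}} << "a b\nc d"]

# The same idea for the summing awk used for tot_sum.
set total [exec awk {{ sum += $1 } END { print sum }} << "1\n2\n3"]
```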

Lines 50 - 63 comprise the first if statement. Tcl if statements look like if {test} [then] {statements} [[else] {statements}]. Line breaks outside curly braces must be escaped. Because I mainly use C and like the curly braces to line up, I end the if line with a backslash. No one else does this. Inside the if block, echo translates to puts, but unlike echo, puts takes a single string argument. There's no exact equivalent to bash read, so I output and flush a prompt string, then read a line of input.
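The prompt-and-read sequence could be wrapped in a small proc if it is needed more than once. `readWithPrompt` is a hypothetical name, and the optional channel arguments are only there so the proc can be exercised without a terminal:

```tcl
# Rough stand-in for bash's:  read -p "prompt" var
# Print the prompt without a newline, flush it so it appears before
# we block, then read one line of input (without its newline).
proc readWithPrompt {prompt {in stdin} {out stdout}} {
    puts -nonewline $out $prompt
    flush $out
    gets $in line
    return $line
}
```

In the script this would become, e.g., `set range [readWithPrompt {Enter the range of Sequences you want to see , program will start counting from the last Sequence:::: }]`.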

ASKER CERTIFIED SOLUTION

I believe I gave the question author enough to go on, and was expecting him to try to do some of it himself. I remain happy to help with any difficulties he encounters in the process. But since I started posting script updates, he has never responded.

Are you saying you really want a file like

(and the rest) or what?
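For reference, the layout described in the original question (each label as a column header, with MIN-MAX beneath it) could be produced in pure Tcl roughly as follows. This is only a sketch: it assumes the log layout posted earlier in the thread (a `TRACE HEADER====` banner followed by `label: MIN MAX MEAN COUNT` rows), and `minMaxTable` is a hypothetical name.

```tcl
# Hypothetical helper: collect the TRACE HEADER rows of one log and return
# two tab-separated lines: first the labels (column headers), then MIN-MAX.
proc minMaxTable {text} {
    set inTrace 0
    set labels {}
    set ranges {}
    foreach line [split $text \n] {
        # Section banners look like "TRACE HEADER=====...".
        if {[regexp {^([A-Z ]+)=+\s*$} $line -> section]} {
            set inTrace [expr {[string trim $section] eq "TRACE HEADER"}]
            continue
        }
        if {!$inTrace} {
            continue
        }
        # Data rows look like "some label [001-004]: MIN MAX MEAN COUNT".
        if {[regexp {^\s*([^:]+):\s+(\S+)\s+(\S+)} $line -> label min max]
                && [string is double -strict $min]
                && [string is double -strict $max]} {
            lappend labels [string trim $label]
            lappend ranges "$min-$max"
        }
    }
    return "[join $labels \t]\n[join $ranges \t]"
}
```

For several log files you would print the header line once and then one range line per file, reading each file with `open` and `read`.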