SQL Server CPU allocation

I'm staggering about in the dark here, because i don't know what i'm doing......

We have a 7 year old SBS 2008 server running SQL Server 2008 and Exchange 2007. The server is dual Xeon quad core with 12G ram. So the server thinks it's got 16 cores. I've had a couple of previous questions in here trying to track down the cause of the poor server/network performance we have been seeing in the past few months. The only thing which has changed recently is that I have migrated an MsAccess database back-end into the SQL Server. We have 8 users of the database who now access the SQL Server.

Yesterday I rebooted the server and found that everything was much, much faster afterwards until someone opened their database application and started to untilize the SQL Server. At that point everything slowed down again. So I made the guess that maybe our problems were related to the SQL Server waking up and grabbing the server resources.

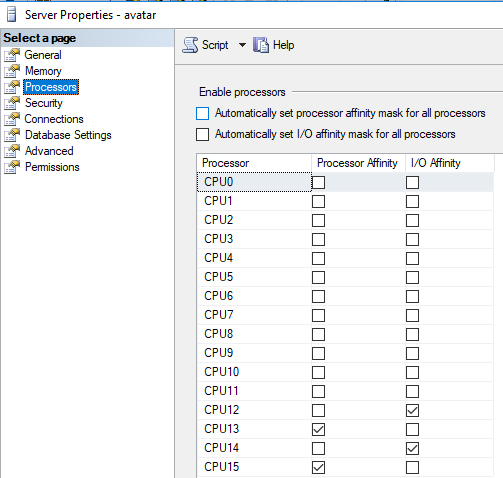

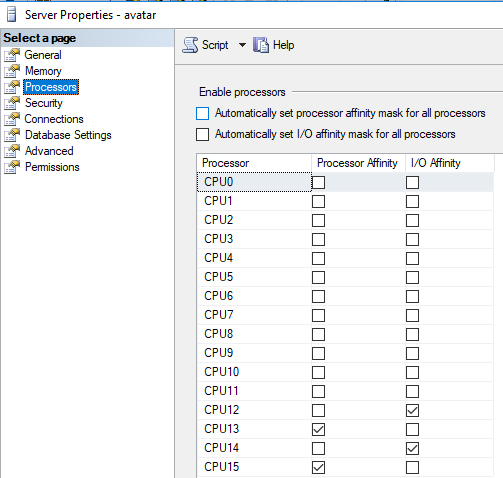

In the SQL Server Processor properties I saw that it was configured to automatically set the Processor Affinity Mask and I/O Affinity Mask for all processors. I changed it so that the Processor Affinity is ticked on 2 CPU's and the I/O affinity is ticked on 2 CPU's, leaving the other 12 CPU's available for other things.

This has speeded things up massively, and we don't notice our database application running any slower because of this change.

I will welcome any comments about this, but I have 2 specific questions.....

1. How can it be that by releasing 12 CPU cores, the server has speeded up 10 fold, when previously the System Idle Process was mostly at 98/99% anyway? I would have thought that with that tiny anount of CPU utilization, the other server processes should have been able to run seamlessly. We're only a small business. This server has a pretty quiet life.

2. Please look at the attached screenshot. Have I got the Affinity flags set correctly? Could it be done any better? For instance, would 3 x 1 or 1 x 3, be better than 2 x 2 that I currently have configured?

We have a 7 year old SBS 2008 server running SQL Server 2008 and Exchange 2007. The server is dual Xeon quad core with 12G ram. So the server thinks it's got 16 cores. I've had a couple of previous questions in here trying to track down the cause of the poor server/network performance we have been seeing in the past few months. The only thing which has changed recently is that I have migrated an MsAccess database back-end into the SQL Server. We have 8 users of the database who now access the SQL Server.

Yesterday I rebooted the server and found that everything was much, much faster afterwards until someone opened their database application and started to untilize the SQL Server. At that point everything slowed down again. So I made the guess that maybe our problems were related to the SQL Server waking up and grabbing the server resources.

In the SQL Server Processor properties I saw that it was configured to automatically set the Processor Affinity Mask and I/O Affinity Mask for all processors. I changed it so that the Processor Affinity is ticked on 2 CPU's and the I/O affinity is ticked on 2 CPU's, leaving the other 12 CPU's available for other things.

This has speeded things up massively, and we don't notice our database application running any slower because of this change.

I will welcome any comments about this, but I have 2 specific questions.....

1. How can it be that by releasing 12 CPU cores, the server has speeded up 10 fold, when previously the System Idle Process was mostly at 98/99% anyway? I would have thought that with that tiny anount of CPU utilization, the other server processes should have been able to run seamlessly. We're only a small business. This server has a pretty quiet life.

2. Please look at the attached screenshot. Have I got the Affinity flags set correctly? Could it be done any better? For instance, would 3 x 1 or 1 x 3, be better than 2 x 2 that I currently have configured?

Everything depends on the database size, structure and usage.

Think about that in Access all resources was provided by computer of each user. Now all those performance must be provided by the SQL Server alone. In this case you can optimize the performance with the following steps:

- Give more CPU cores for SQL Server (6 or 8 cores for Processor and the same 6-8 for IO) because in case of CPU intensive queries it can speed up the parallel executions

- Create necessary indexes on tables. If you are not familiar with this ask a database expert to help to create those indexes or DTA (Database Tuning Advisor) tool to find missing indexes

- Put databases (system and user) on a different disk from other resources (File shares, Exchange database etc).

Think about that in Access all resources was provided by computer of each user. Now all those performance must be provided by the SQL Server alone. In this case you can optimize the performance with the following steps:

- Give more CPU cores for SQL Server (6 or 8 cores for Processor and the same 6-8 for IO) because in case of CPU intensive queries it can speed up the parallel executions

- Create necessary indexes on tables. If you are not familiar with this ask a database expert to help to create those indexes or DTA (Database Tuning Advisor) tool to find missing indexes

- Put databases (system and user) on a different disk from other resources (File shares, Exchange database etc).

ASKER

Ste5an: Thanks for your comprehensive reply. I'll try and answer as much as I am able...

Our Server is a Dell Power Edge T410, The Dell Diagnostic software installed reports that the maximum memory allowed is 13Gb. So we're pretty much maxed out with our 12G of ECC memory. Can ECC memory slow down?

The disks were changed a couple of years ago when one of them failed. I don't believe that the disks can be a factor in this issue because now that I stopped SQL Server using some of the cores, the speed is back to normal

I've never seen the SQL Server taking more than 4G ram, and i've now limited it to 3G because when it takes 4G, the server memory is almost 100% utilized.

Our Server is a Dell Power Edge T410, The Dell Diagnostic software installed reports that the maximum memory allowed is 13Gb. So we're pretty much maxed out with our 12G of ECC memory. Can ECC memory slow down?

The disks were changed a couple of years ago when one of them failed. I don't believe that the disks can be a factor in this issue because now that I stopped SQL Server using some of the cores, the speed is back to normal

I've never seen the SQL Server taking more than 4G ram, and i've now limited it to 3G because when it takes 4G, the server memory is almost 100% utilized.

ASKER

@Máté Farkas: You suggested giving 6 or 8 cores to Processing and the same to I/O. Surely I would be back in my original situation then? It's only by restricting SQL Server to 4 cores that i've got the other server processes running normally.

The database is currently 1.4Gb and yes my tables are properly indexed. The SQL Server performance isn't an issue, our database seems just as fast no matter how many cores are allocated to SQL Server.

The database is currently 1.4Gb and yes my tables are properly indexed. The SQL Server performance isn't an issue, our database seems just as fast no matter how many cores are allocated to SQL Server.

ASKER CERTIFIED SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.

ASKER

Ste5an: Thanks for the advice. I will try deactivating hyperthreading and see what the performance is like afterwards. The biggest problem encountered by my users is slow file access. For instance, I have a housekeeping task which copies 20 x 1K files between 2 folders on the server, This should take less than 1 second. During the time that our server has been running slow, this task has been taking 30 seconds. Today after restricting SQL Server to 4 cores, it takes less than 1 second again.

Even though that task was taking 30 seconds, the server was still showing that it was 98% idle. So this is what prompted my original question. How can things be so slow when the server shows itself to be idle.

Even though that task was taking 30 seconds, the server was still showing that it was 98% idle. So this is what prompted my original question. How can things be so slow when the server shows itself to be idle.

You shouldn't change the CPU affinity unless you really know what you're doing. Best thing to do is to let the OS to take care of that.

With that said, by the description of the issue, for me you're facing memory resource issues. Try to provide more memory to the SQL Server instance and check if the performance is better after.

I've never seen the SQL Server taking more than 4G ram, and i've now limited it to 3G because when it takes 4G, the server memory is almost 100% utilized.Where did you see the SQL Server memory usage? Hopefully not from the Task Manager, as it will give you a wrong information.

With that said, by the description of the issue, for me you're facing memory resource issues. Try to provide more memory to the SQL Server instance and check if the performance is better after.

ASKER

@Vitor Montalvão:

>> You shouldn't change the CPU affinity unless you really know what you're doing

As I said at the start of this thread, with the Affinities being set automatically the server is really slow. Today I've managed to give my users some decent network performance by setting the affinities manually. But one of the reasons for starting this thread was because I have no idea if i've done it the best way.

>> Where did you see the SQL Server memory usage? Hopefully not from the Task Manager

Yes I did see the 4G memory usage in Task Manager. I don't know where else I can see it?

>> Try to provide more memory to the SQL Server instance

In Task Manager, I was seeing that SQL Server was using 4G and there was only ½G free memory. So thats why I restricted SQL Server to 3G. No matter what I do, SQL Server always seems to work OK. The database access is always great.

>> You shouldn't change the CPU affinity unless you really know what you're doing

As I said at the start of this thread, with the Affinities being set automatically the server is really slow. Today I've managed to give my users some decent network performance by setting the affinities manually. But one of the reasons for starting this thread was because I have no idea if i've done it the best way.

>> Where did you see the SQL Server memory usage? Hopefully not from the Task Manager

Yes I did see the 4G memory usage in Task Manager. I don't know where else I can see it?

>> Try to provide more memory to the SQL Server instance

In Task Manager, I was seeing that SQL Server was using 4G and there was only ½G free memory. So thats why I restricted SQL Server to 3G. No matter what I do, SQL Server always seems to work OK. The database access is always great.

ASKER

Ok it's the end of the working day. i've rebooted the server with hyperthreading disabled. I also set the SQL Server processor affinities back to auto. Everything seems nice and fast so far. I'll see what happens tomorrow when everyone starts using it.

SBS 2008 eh?

Use SQL Studio Manager to open the instance, right click on the root of the instance & properties, and limit the amount of memory the instance can use to 1GB.

Do the same for the WSUS instance.

12GB is really tight as a rule.

My SBS 2008 Setup Checklist for reference.

Use SQL Studio Manager to open the instance, right click on the root of the instance & properties, and limit the amount of memory the instance can use to 1GB.

Do the same for the WSUS instance.

12GB is really tight as a rule.

My SBS 2008 Setup Checklist for reference.

i've rebooted the server with hyperthreading disabled. I also set the SQL Server processor affinities back to auto. Everything seems nice and fast so far.Good. Hyperthreading from old servers were very bad. Nowadays are better.

Set affinities to auto should be the default and as I said before, only change it if you really know what you're doing. From here and without access to your server we can't tell you what is the best configuration for your.

Yes I did see the 4G memory usage in Task Manager. I don't know where else I can see it?You can only check inside SQL Server by running the following command in Management Studio:

DBCC MEMORYSTATUS

It could also be over-parallelization of queries. The default SQL setting on when to use parallel processing is ridiculously low.

EXEC sp_configure 'cost threshold for parallelism'

If it's less than, say, 40, set it to, say, 40:

EXEC sp_configure 'cost threshold for parallelism', 40

RECONFIGURE

That's not a hard-and-fast number, but it's very reasonable. I think the default was 5 or at most 10, which are way too low and still too low.

EXEC sp_configure 'cost threshold for parallelism'

If it's less than, say, 40, set it to, say, 40:

EXEC sp_configure 'cost threshold for parallelism', 40

RECONFIGURE

That's not a hard-and-fast number, but it's very reasonable. I think the default was 5 or at most 10, which are way too low and still too low.

ASKER

Morning all,

Today my server is very fast and my users are happy :) In Sql Server the Affinities are set to auto and the memory is unrestricted.

The thing I find strangest is that Task Manager is now reporting 5.7G free memory. For years it's been showing less than 1G unless I throttled SQL Server back.

i ran DBCC MEMORYSTATUS. It reports VM Reserved 12,689,400 and VM Committed 2,328,820. Task manager is reporting sqlserver.exe using 2,593,728K

There's been a lot of useful comments, but i'm going to award the points to Ste5an because it was he who initially suggested that I disabled Hyperthreading, and that's all that I have changed really.. Thank you everyone for your input.

Today my server is very fast and my users are happy :) In Sql Server the Affinities are set to auto and the memory is unrestricted.

The thing I find strangest is that Task Manager is now reporting 5.7G free memory. For years it's been showing less than 1G unless I throttled SQL Server back.

i ran DBCC MEMORYSTATUS. It reports VM Reserved 12,689,400 and VM Committed 2,328,820. Task manager is reporting sqlserver.exe using 2,593,728K

There's been a lot of useful comments, but i'm going to award the points to Ste5an because it was he who initially suggested that I disabled Hyperthreading, and that's all that I have changed really.. Thank you everyone for your input.

Then having 8 cores / 16 threads with only 12 GB RAM is a waste of CPU. You need definitely more RAM.

And cause it's pretty old software: I'm not sure, right now, but your configuration maybe hurt by using hyper-threading. Deactivating it can help, but requires testing.

The overall SQL Server performance depends on the IO (HDD speed and IOPS) and the amount of RAM in comparison to the total database size. SQL Server buffers read data pages in memory as long as possible. Thus more RAM, better SQL Server performance. Thus when the total database size is lesse then RAM, then this is good.

Now for rebooting helps:

Check the min. and max. memory settings of SQL Server (right-click on the server node in SSMS, options). I would use 2GB min. memory and 8GB max. memory. Important is the max memory to avoid SQL Server grabbing all, cause Exchange requires also some memory.

And depending on your databases and processes create a maintenance plan to run a daily job to clear the buffers and the plan cache.

Open in new window