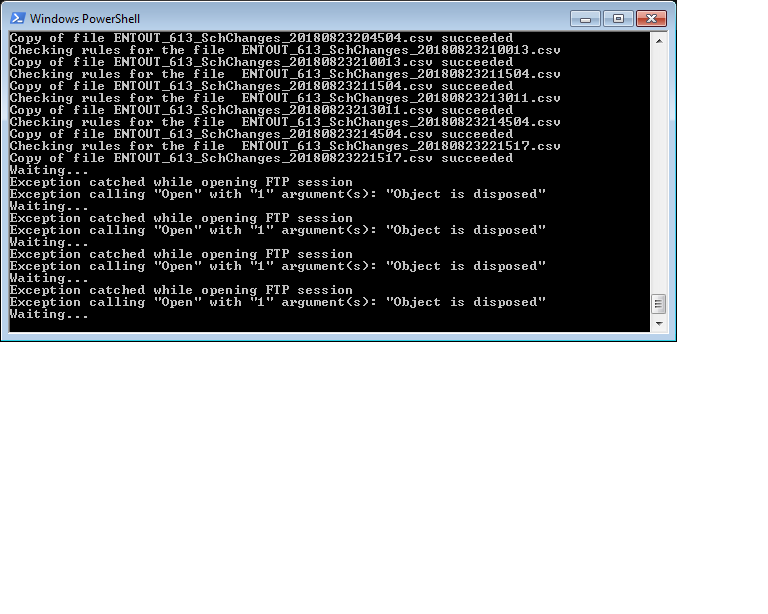

Script causing an error after running for a long time

Hi Experts,

I have the script below to download all new files from my FTP server to my local PC.

However after running it for a while I get the attached error.

Can someone help me fix this problem?

See the following for reference:

https://www.experts-exchange.com/questions/29112257/How-to-copy-only-new-files-from-FTP-site.html?anchor=a42647509&notificationFollowed=210720838&anchorAnswerId=42647509#a42647509

Thanks in advance.


```powershell
# Load WinSCP .NET assembly
Add-Type -Path "C:\Program Files (x86)\WinSCP\WinSCPnet.dll"

# Set up session options
$sessionOptions = New-Object WinSCP.SessionOptions -Property @{
    Protocol = [WinSCP.Protocol]::Sftp
    HostName = "sftp.MySite.com"
    UserName = "MyUserName"
    Password = "MyPWD"
    SshHostKeyFingerprint = "1234567890="
}

$session = New-Object WinSCP.Session

try
{
    # Connect
    $session.Open($sessionOptions)

    # Transfer files
    $sourcePath = "/Outbox/"   # don't add *, will be added where necessary
    $wildcard = "*.*"          # mask used for listing (also shown in the "no files" message)
    $destPath = "H:\FTP\"
    $destPathNew = "H:\FTP\Caspio\"
    $transferOptions = New-Object WinSCP.TransferOptions

    while ($True)
    {
        try {
            # Get list of matching files in the directory.
            # @() runs the lazy enumeration here, so .Count is the real number of files
            $remoteFiles = @($session.EnumerateRemoteFiles($sourcePath, $wildcard, [WinSCP.EnumerationOptions]::None))

            # Any file matched?
            if ($remoteFiles.Count -gt 0) {
                foreach ($fileInfo in $remoteFiles) {
                    try {
                        Write-Host "Checking rules for the file" $fileInfo.Name

                        # check the filename for matching the mask
                        if ($fileInfo.Name -like "*PAT*.*" -or $fileInfo.Name -like "*Sch*.*" -or $fileInfo.Name -like "*Full*.*") {

                            # check if the file exists in the local download folder
                            if (![System.IO.File]::Exists($destPath + $fileInfo.Name)) {

                                # download the file
                                $transferResult = $session.GetFiles($sourcePath + $fileInfo.Name, $destPath, $False, $transferOptions)
                                $transferResult.Check()

                                $destFile = $destPathNew + $fileInfo.Name   # local path where to copy files
                                $sourceFile = $destPath + $fileInfo.Name    # local path where new files were downloaded

                                # check if the file exists in the destination folder
                                if (![System.IO.File]::Exists($destFile)) {
                                    # copy the file to the destination folder
                                    Copy-Item -LiteralPath $sourceFile -Destination $destFile
                                    Write-Host "Copy of file $($fileInfo.Name) succeeded"
                                }
                            }
                        }
                    }
                    catch {
                        Write-Host "Exception caught on file $($fileInfo.Name)"
                        Write-Host $_.Exception.Message
                    }
                }
            }
            else {
                Write-Host "No files matching $wildcard found"
            }
        }
        catch {
            Write-Host "Exception caught while downloading new files"
            Write-Host $_.Exception.Message
        }

        # Wait before polling again (inside the loop, so it runs on every cycle)
        Write-Host "Waiting..."
        Start-Sleep -Seconds 5
    }
}
finally
{
    $session.Dispose()
}
```
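The `Start-Sleep` call only throttles the loop if it sits inside `while ($True)`; placed after the loop's closing brace it is never reached, and the server is listed again immediately with no pause. A minimal, self-contained sketch of the intended structure follows, assuming the per-cycle work can be passed in as a script block (`Invoke-PollingLoop`, `$PollOnce` and `-MaxIterations` are hypothetical names for illustration, not part of WinSCP or the original script):

```powershell
# Minimal sketch of the polling structure (not the original script):
# - the wait is INSIDE the loop, so every cycle pauses before polling again;
# - each cycle has its own try/catch, so one failed cycle does not end the loop.
# $PollOnce stands in for the "list and download new files" step.
# -MaxIterations is a hypothetical parameter so the sketch can stop; 0 = run forever.
function Invoke-PollingLoop {
    param(
        [Parameter(Mandatory)] [scriptblock] $PollOnce,
        [int] $IntervalSeconds = 5,
        [int] $MaxIterations = 0
    )
    $iteration = 0
    while ($MaxIterations -eq 0 -or $iteration -lt $MaxIterations) {
        $iteration++
        try {
            $null = & $PollOnce   # discard output so only the count is returned
        }
        catch {
            Write-Host "Exception caught while downloading new files"
            Write-Host $_.Exception.Message
        }
        Write-Host "Waiting..."
        Start-Sleep -Seconds $IntervalSeconds
    }
    return $iteration
}
```

With `-MaxIterations 0` (the default) it behaves like the original `while ($True)` loop.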


ASKER

Hi,

> ...so script runtime duration can be a very long time.

So far this has not been the case here: we have a scheduler that creates small files on the FTP server every 15 minutes, and downloading them takes just a minute.

In my opinion, it looks like writing messages to the log is the source of the failure, as the error message states.

Perhaps we can remove the success/failure messages from the script?

Thanks,

Ben
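If you would rather keep the messages for troubleshooting than delete them, one option is to write them with `Write-Verbose` instead of `Write-Host`: they are then suppressed unless the caller asks for them. The sketch below assumes a function wrapper; `Copy-NewFileDemo` is a hypothetical name and the download itself is omitted:

```powershell
# Sketch: send progress messages to the verbose stream instead of Write-Host.
# They stay silent by default and only appear when -Verbose is passed.
# Copy-NewFileDemo is a hypothetical stand-in for the per-file download step.
function Copy-NewFileDemo {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string] $Name
    )
    Write-Verbose "Checking rules for the file $Name"
    # ... mask check, download and copy would go here ...
    Write-Verbose "Copy of file $Name succeeded"
    return $Name
}
```

For the whole script, the same effect should come from putting `[CmdletBinding()] param()` at the top of the .ps1 (before `Add-Type`) and running it with `-Verbose` when you want the output.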

ASKER CERTIFIED SOLUTION


ASKER

Hi,

It only worked initially: after it copied all the files, I deleted all the copied files and let it keep running, but nothing more got copied over.

See attached results.

FYI, we're planning to let this run constantly, and another program will also constantly check for files created under `$destPathNew = "H:\FTP\Caspio\"`, process those files and delete them, which is why I tested it that way.

Thanks,

Ben


Looks like you have to move line 13 before line 25, as we need to recreate the session object each time.
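A sketch of David's suggestion, assuming the goal is simply a new session per polling cycle: the object is created, used and disposed inside each iteration, so a connection that has gone bad cannot outlive one cycle. To keep it testable without WinSCP, session creation and the work are passed in as script blocks; `Invoke-CycleWithFreshSession` is a hypothetical helper:

```powershell
# Sketch of "recreate the session object each cycle": the session is created,
# used and disposed inside every iteration, so a dead connection cannot
# outlive a single cycle. In the real script $NewSession would be something like
# { $s = New-Object WinSCP.Session; $s.Open($sessionOptions); $s } and $Work the
# list/download step; here both are passed in so the pattern can be tested alone.
function Invoke-CycleWithFreshSession {
    param(
        [Parameter(Mandatory)] [scriptblock] $NewSession,
        [Parameter(Mandatory)] [scriptblock] $Work,
        [int] $Cycles = 1
    )
    for ($i = 1; $i -le $Cycles; $i++) {
        $session = $null
        try {
            $session = & $NewSession
            $null = & $Work $session
        }
        catch {
            Write-Host "Exception caught in cycle ${i}: $($_.Exception.Message)"
        }
        finally {
            # Always release the session, even when the cycle failed
            if ($null -ne $session) { $session.Dispose() }
        }
    }
}
```

The trade-off is one connect/authenticate round trip per cycle, which is likely acceptable with a 5-second sleep and files arriving every 15 minutes.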

Also, you may prefer using plain sftp (as I recall, Windows has this installed by default).

If you use sftp, your code becomes a single line (`user@host` is a placeholder for your server):

```sh
printf 'commands\nexit\n' | sftp -i ~/path-to-empty-passphrase-key-file user@host
```

David, though the script is not perfect, it has an important check: files are only copied over if they do not yet exist in the destination folder. You can't do that (well) with FTP directly.
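The check Qlemo refers to can be isolated as a plain function. This sketch mirrors the original script's rule, assuming only file names matter: a remote file counts as new if it matches one of the three masks and no file of that name exists yet in the download folder (`$destPath`, `H:\FTP\`). `Get-NewFileName` is a hypothetical helper that works on names so it can run without a WinSCP session:

```powershell
# Sketch of the "only new files" rule: a remote file is downloaded only if its
# name matches one of the masks AND no file with that name exists yet in the
# local download folder. Get-NewFileName is a hypothetical helper.
function Get-NewFileName {
    param(
        [string[]] $RemoteNames,
        [Parameter(Mandatory)] [string] $DownloadFolder,
        [string[]] $Masks = @('*PAT*.*', '*Sch*.*', '*Full*.*')
    )
    foreach ($name in $RemoteNames) {
        $matchesMask = $false
        foreach ($mask in $Masks) {
            if ($name -like $mask) { $matchesMask = $true; break }   # leaves the mask loop only
        }
        if (-not $matchesMask) { continue }

        $localPath = Join-Path $DownloadFolder $name
        if (-not (Test-Path -LiteralPath $localPath)) {
            $name   # emit: this file would be downloaded
        }
    }
}
```

In the script as originally posted the test is against the download folder, so deleting a file only from `H:\FTP\Caspio\` does not make it new again.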

ASKER

Hi,

> though the script is not perfect

I'm in the middle of testing, but do you foresee any issues, or are you just referring to possible improvements? What improvements would you suggest?

Thanks,

Ben

Minor improvement: line 47 in my code snippet above is superfluous, because the existence of the destination file has already been checked (negatively) in line 37.

I would also set `$destFile` prior to line 37 and use this variable in the `if`.
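A minimal sketch of that suggestion, assuming a single existence check is all that is needed before copying: the destination path is built once and the same variable is used in both the `if` and `Copy-Item`. `Copy-IfMissing` is a hypothetical helper, and `-LiteralPath` is used so names containing `[` or `]` are not treated as wildcards:

```powershell
# Sketch: compute the destination path once, before the existence check,
# and reuse that same variable in the if and in Copy-Item.
# Copy-IfMissing is a hypothetical helper name, not part of the original script.
function Copy-IfMissing {
    param(
        [Parameter(Mandatory)] [string] $SourceFile,
        [Parameter(Mandatory)] [string] $DestFolder
    )
    $destFile = Join-Path $DestFolder (Split-Path -Path $SourceFile -Leaf)
    if (-not (Test-Path -LiteralPath $destFile)) {
        Copy-Item -LiteralPath $SourceFile -Destination $destFile
        return $true    # copied
    }
    return $false       # already there, nothing to do
}
```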

ASKER

Thank you Qlemo!

ASKER

BTW, when you have a chance, please look at the following, which is my next step after this script.

https://www.experts-exchange.com/questions/29115337/Cannot-read-file-permission-denied.html?anchor=a42664113&notificationFollowed=212034968&anchorAnswerId=42664113#a42664113

Thanks,

Ben


ASKER

Hi,

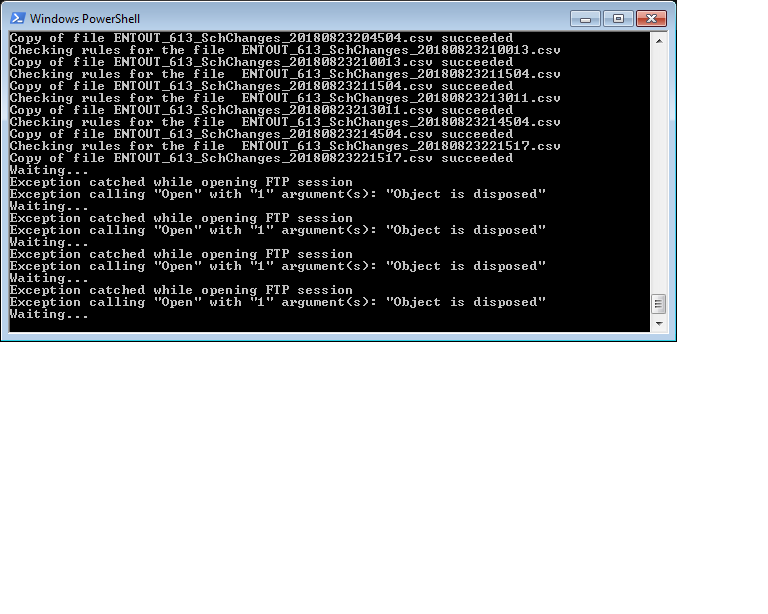

See the error message I got. Perhaps this happens while the file is in the middle of being saved on the FTP server.

How should this be handled?

Thanks,

Ben


You have to live with such temporary failures on a live system. You only need to care if you get an error repeatedly.
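One way to put "only care if it repeats" into code, as a sketch rather than anything from the thread: count consecutive failures per file name, reset on success, and only report once a threshold is reached. The helper name and the threshold of 3 are arbitrary assumptions:

```powershell
# Sketch of "only care when an error repeats": keep a per-file count of
# consecutive failures; a success resets it, and only reaching the threshold
# is reported. Register-Result and the threshold of 3 are illustrative choices.
function Register-Result {
    param(
        [hashtable] $State,
        [Parameter(Mandatory)] [string] $Name,
        [bool] $Succeeded = $true,
        [int] $Threshold = 3
    )
    if ($Succeeded) {
        $State.Remove($Name)       # success clears any earlier failures
        return $false
    }
    $State[$Name] = 1 + [int]$State[$Name]
    return ($State[$Name] -ge $Threshold)   # $true = failed $Threshold times in a row
}
```

In the script's per-file `catch` block you would call it with `-Succeeded $false` and only alert when it returns `$true`; after a successful `GetFiles`/`Check()`, call it with `-Succeeded $true`. The state hashtable has to be created once, before `while ($True)`.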

ASKER

Hi Qlemo,

When you have time, see below.

https://www.experts-exchange.com/questions/29154477/PS-Script-suddenly-stopped-to-work.html#questionAdd

Thanks,

Ben


ASKER

Hi Qlemo,

This script ran fine for a long time, but this week it started (sometimes) to give the error below:

```
PS E:\AppDev> E:\AppDev\DownloadfromHHAExchangeQlemo.ps1
Exception catched while downloading new files
An error occurred while enumerating through a collection: Timeout waiting for WinSCP to respond.
```

Thanks

SOLUTION


ASKER

Hi Qlemo,

I'm waiting for whoever manages that SFTP site to clean up the folder, and will see if this solves the problem.

Thanks

Likely there's some way in PowerShell to set the runtime duration to infinite, which will fix your problem.

Note: keep in mind that this will likely affect all scripts, so no runaway script will ever exit. This may or may not be appropriate.