snyderkv

vSphere NFS: one or two port groups?

I have two target IP addresses on my NetApp filer configured with NFS.

I noticed some sites have a single NFS VMkernel port and others have two port groups. I understand you need to do this with iSCSI for multipathing, but is it necessary for NFS? How should I set it up?

Thanks

ASKER

I just looked at this particular site and see four NFS storage IPs on the SVM and a single share, so the hosts mount that share (e.g. /voice or whatever it may be). I'm not sure if that answers your question. I didn't think NFS would be set up the same way as vMotion. By the way, we have two 10GbE uplinks.

It really depends on whether you want any load balancing or resilience.

What happens if a network link gets removed?

ASKER

Well, our two 10GbE uplinks on the host are active/active, so even a single NFS VMkernel port would fail over to the second uplink. The NFS port group teaming policy will be set to load balancing based on physical NIC load.

Since clients access the share and connect to a random storage target, I figured a single NFS VMkernel port is all we need, unless traffic can be shared between two NFS VMkernel ports?

It seems different with vMotion because there aren't any targets with vMotion; it communicates between VMkernel ports. So I figured that since NFS has multiple storage targets, you need just one NFS port group. But I don't understand how traffic flows between client and target with NFS.

That's fine, then, because iSCSI doesn't work like that; it requires multipathing.

But having more than one VMkernel port distributes traffic, although you then need to use different IP addresses on the ESXi host and for the exports.

You need to check your bandwidth and utilisation to see whether you need to do that.
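As a rough illustration of why the separate IP addresses matter: with an NFS v3 mount, ESXi generally reaches a target through the VMkernel port on the same subnet, so two VMkernel ports only share the load if different exports are mounted through target IPs in different subnets. The sketch below models that choice as a plain subnet match. The addresses, vmk names and datastore names are hypothetical, and real ESXi routing (routing table, default gateway) is more involved than this.

```python
import ipaddress

# Hypothetical VMkernel ports, each on its own storage subnet (assumed addressing).
VMKERNELS = {
    "vmk1": ipaddress.ip_interface("192.168.10.21/24"),
    "vmk2": ipaddress.ip_interface("192.168.20.21/24"),
}


def vmk_for_target(target_ip, vmkernels=VMKERNELS):
    """Return the VMkernel port whose directly connected subnet contains target_ip.

    Simplified model: ignores routes and the default gateway and returns
    None when no VMkernel port shares a subnet with the target.
    """
    addr = ipaddress.ip_address(target_ip)
    for name, iface in sorted(vmkernels.items()):
        if addr in iface.network:
            return name
    return None


# Hypothetical exports: each datastore mounted via a target IP in a different subnet.
EXPORTS = {
    "datastore_a": "192.168.10.50",
    "datastore_b": "192.168.20.50",
}

if __name__ == "__main__":
    for datastore, target in EXPORTS.items():
        print(datastore, target, "->", vmk_for_target(target))
```

If every export used a target IP in one subnet, every mount would resolve to the same VMkernel port and the second one would carry no NFS traffic.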

ASKER

Is there an NFS with NetApp best-practices document or blog?

I'm migrating to a vDS, so I'll control traffic via NIOC and don't think bandwidth will be an issue. I'm not sure it could be with 2 x 10GbE NICs, but I may still use it.

It will be on the NetApp NOW site.

Do you not use the NetApp appliance to create exports? It configures and mounts NFS.

ASKER

I'm not sure what you're talking about. The export is the share path /Voice, right? We also have exports for security. I'll try to send screenshots later, once I get my lab up and running again, so you know what I'm talking about.

There is an appliance to assist with the configuration and management of the NetApp filer with ESXi!

ASKER

Are you talking about the VSA (VMware storage appliance)? I've used that before, and I know it applies best practices for storage creation on the NetApp side, but I'm talking about setting up NFS on the vSphere side.

ASKER

OK, roger that. I'll research what the best configuration would be in our environment.

ASKER

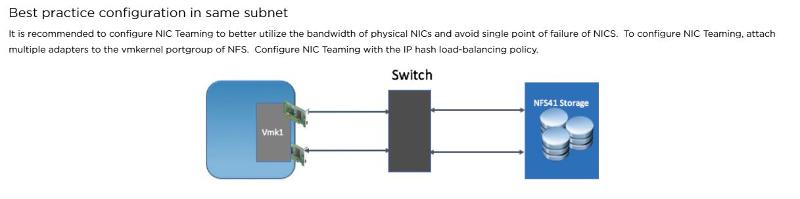

Ah, OK, I found an old document that answers my question, so I'm posting it here in case it helps others. This is the correct answer if anyone is confused.

https://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/partners/netapp/vmware-netapp-and-vmware-vsphere-storage-best-practices.pdf

I checked our network and storage, and everything is set up with EtherChannel, which means I do in fact need only one NFS VMkernel port; the storage network determines the multipathing to targets. For multipathing, you mount the NFS share with at least two targets.
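As far as I know, giving several targets to a single mount applies to NFS 4.1 datastores (vSphere 6.0 and later), where `esxcli storage nfs41 add` accepts a comma-separated list of server addresses in `--hosts`; an NFS v3 mount (`esxcli storage nfs add`) takes a single `--host`. The helper below is a hypothetical sketch that only builds the command line and runs nothing. The target IPs are assumed, and `/voice` is the example share from the thread.

```python
import shlex


def nfs41_mount_command(targets, share, volume_name):
    """Build an esxcli command that mounts one NFS 4.1 datastore via several server IPs.

    Hypothetical helper: it only assembles the argument list. NFS v3 mounts
    ("esxcli storage nfs add") accept a single --host instead of a list.
    """
    if len(targets) < 2:
        raise ValueError("multipathing needs at least two target addresses")
    return [
        "esxcli", "storage", "nfs41", "add",
        "--hosts=" + ",".join(targets),
        "--share=" + share,
        "--volume-name=" + volume_name,
    ]


if __name__ == "__main__":
    cmd = nfs41_mount_command(["192.168.10.50", "192.168.10.51"], "/voice", "voice")
    print(shlex.join(cmd))  # shlex.join needs Python 3.8+
```

Whether this fits depends on the NFS version the SVM exports and the hosts mount; check the NetApp and VMware documentation for your versions before relying on it.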

"Requires only one VMkernel port for IP storage to make use of multiple physical paths"
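The quoted point can be illustrated with a simplified model of IP-hash teaming, the policy that pairs with a static EtherChannel on the physical switch. The uplink is chosen per source/destination IP pair, so a single VMkernel port talking to two storage target IPs can end up on both physical NICs. The XOR-then-modulo formula below is an assumption for illustration, not a transcription of ESXi's implementation, and the addresses are hypothetical.

```python
import ipaddress


def ip_hash_uplink(src_ip, dst_ip, num_uplinks):
    """Simplified IP hash: XOR the two addresses, then take the result modulo the uplink count.

    Assumption: ESXi's real "Route based on IP hash" calculation may differ in
    detail; this only shows that one source talking to several destinations
    can be spread across different uplinks.
    """
    src = int(ipaddress.ip_address(src_ip))
    dst = int(ipaddress.ip_address(dst_ip))
    return (src ^ dst) % num_uplinks


if __name__ == "__main__":
    vmk_ip = "192.168.10.21"  # hypothetical single NFS VMkernel port
    for target in ["192.168.10.50", "192.168.10.51"]:  # hypothetical storage target IPs
        print(target, "-> uplink", ip_hash_uplink(vmk_ip, target, 2))
```

In this model the two targets map to uplinks 1 and 0, so one VMkernel port uses both 10GbE links; a single target IP would always hash to the same uplink.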

How is the NetApp configured, and do you have exports on different IP addresses for your volumes?

If you use the NetApp appliance to configure and present exports, it alternates between IP addresses for mounts/exports, so it "load balances" the exports across host IP addresses and VMkernel ports, and not all your traffic is on a single IP address.
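The alternating behaviour can be pictured as a simple round-robin of datastores over the storage target IPs. This is a hypothetical sketch, not the NetApp tool's actual logic; the datastore names and the four target IPs (matching the four NFS storage IPs mentioned earlier in the thread) are assumed.

```python
from itertools import cycle


def assign_exports(datastores, target_ips):
    """Round-robin each datastore onto the next storage target IP.

    Hypothetical helper illustrating "alternate between IP addresses";
    it is not how the NetApp appliance actually decides.
    """
    if not target_ips:
        raise ValueError("need at least one target IP")
    # zip stops at the shorter input, so every datastore gets exactly one IP.
    return dict(zip(datastores, cycle(target_ips)))


if __name__ == "__main__":
    ips = ["192.168.10.50", "192.168.10.51", "192.168.10.52", "192.168.10.53"]
    stores = ["ds01", "ds02", "ds03", "ds04", "ds05"]
    for ds, ip in assign_exports(stores, ips).items():
        print(ds, "->", ip)
```

With five datastores and four IPs, the fifth datastore wraps back to the first address, so no single target IP carries all the mounts.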