Windows Batch & Powershell: keep lines that contain certain string.

Hello experts,

I have html file attached.

I am looking for a Windows batch or Powershell script to extract all lines that contains

https://www.experts-exchange.com/questions

I attached dummy input file and dummy expected result file.

For Powershell I have the following function but I don't know how to adapt it to get the expected result file.

If you have questions, please contact me.

Regards,

Luis.

DummyInputFile.html

DummyExpectedResultFile.txt

I have html file attached.

I am looking for a Windows batch or Powershell script to extract all lines that contains

https://www.experts-exchange.com/questions

I attached dummy input file and dummy expected result file.

For Powershell I have the following function but I don't know how to adapt it to get the expected result file.

Function Select-MyString {

[CmdletBinding(DefaultParameterSetName='ToPipeline')]

Param(

[Parameter(Position=0, Mandatory=$true)]

[String]$Pattern,

[Parameter(Position=1, Mandatory=$true)]

[String]$InputFile,

[Parameter()]

[Switch]$NotMatch,

[Parameter(Mandatory=$true, ParameterSetName='ToFile')]

[ValidateScript({Test-Path -Path ([IO.Path]::GetDirectoryName($_)) -PathType Container})]

[String]$OutputFile,

[Parameter(Mandatory=$true, ParameterSetName='ToDirectory')]

[ValidateScript({Test-Path -Path $_ -PathType Container})]

[String]$OutputDirectory,

[Parameter()]

[Microsoft.PowerShell.Commands.FileSystemCmdletProviderEncoding]$Encoding = 'ASCII'

)

$splat = @{

Path = $InputFile

Pattern = $Pattern

NotMatch = $NotMatch.ToBool()

Encoding = $Encoding

}

If ($PSCmdlet.ParameterSetName -eq 'ToPipeline') {

Select-String @splat | Select-Object -ExpandProperty Line

} Else {

If ($PSCmdlet.ParameterSetName -eq 'ToDirectory') {

$OutputFile = Join-Path -Path $OutputDirectory -ChildPath "$(Get-Date -Format 'yyyyMMdd_HHmmss')_output$([IO.Path]::GetExtension($InputFile))"

}

Select-String @splat | Select-Object -ExpandProperty Line | Set-Content -Path $OutputFile -Encoding $Encoding

}

}If you have questions, please contact me.

Regards,

Luis.

DummyInputFile.html

DummyExpectedResultFile.txt

ASKER

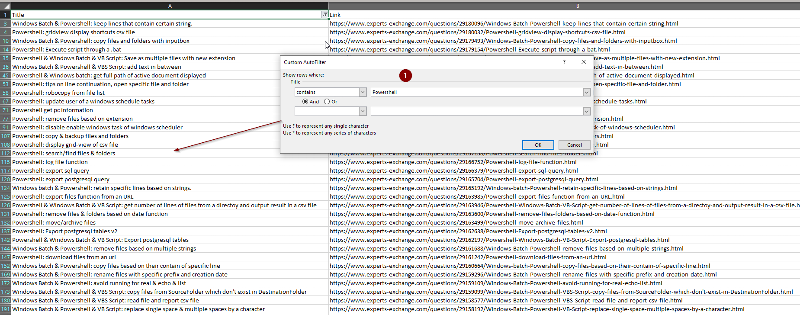

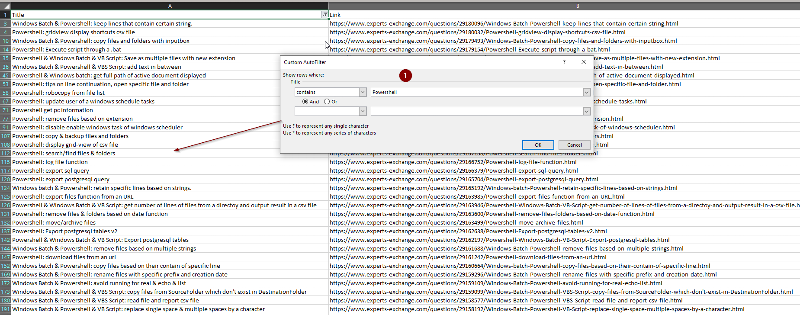

I think there is a problem with the filter as with the following I got the attached file.

# ===========================================================================

# Author:

# Creation date: 2020/04/26 09:46:05

# Description: Download HTML file and filter on specific string

# ===========================================================================

$CurrentDir = Split-Path $script:MyInvocation.MyCommand.Path

$domain = "https://www.experts-exchange.com/members/LD16.html?headerLink=account_profile"

$saveTo = $CurrentDir + '\File.txt'

#Example: $Root = $CurrentDir + '\Root2'

#Example: $FileVariable = Get-Content -Path "$($CurrentDir)\File.txt"

$WebResponse = Invoke-WebRequest $domain

$links = ($WebResponse.Links | Where-Object {$_.href -like "/*"}).href

if (Test-Path $saveTo) {

Remove-Item $saveTo

}

foreach ($link in $links)

{

$result = $domain + $link

$result | Out-File $saveTo -Append

}ASKER

Possible to have a proposal with HTML and txt file to see if HTML file downloaded is the right one?

Do you mean to download the questions that you have asked before?

ASKER

Yes, download I want to have HTML file, ref $domain = "https://www.experts-exchange.com/members/LD16.html?headerLink=account_profile"

and Txt file to debug and compare if the filter is properly applied.

and Txt file to debug and compare if the filter is properly applied.

think you probably can use this URL instead.

https://www.experts-exchange.com/searchResults.jsp?alertTypeId=10&nextSearchRow=3&searchSubmit=&rtid=10&sort=20_-1&searchDisplayTypeID=1&epp=1000&searchTitle=&searchRow=1&clauseTypeId1=20&searchInclude1=true&searchMATID1=10&searchMember1=LD16

but it only return the first 200 questions and the URLs of the questions listed may still need to be parsed.

https://www.experts-exchange.com/searchResults.jsp?alertTypeId=10&nextSearchRow=3&searchSubmit=&rtid=10&sort=20_-1&searchDisplayTypeID=1&epp=1000&searchTitle=&searchRow=1&clauseTypeId1=20&searchInclude1=true&searchMATID1=10&searchMember1=LD16

but it only return the first 200 questions and the URLs of the questions listed may still need to be parsed.

ASKER

In that case I prefer to save full HTML file manually.

From the original profile page and I made a Inspect check, when we select "questions", it actually make a post request to URL below:

https://www.experts-exchange.com/members/<MemberID>.html/profileCon

this was the API developed by EE to grab the question list.

https://www.experts-exchange.com/members/<MemberID>.html/profileCon

this was the API developed by EE to grab the question list.

ASKER

Sorry but I am getting lost here. If I am not able to download full html file I will do it manually. The final aim is just have to clean html file and have a simple list with my questions.

I just try to provide more details on how the current page is working, and hope someone else will provide you with a working solution

ASKER

Don't worry no issues. We go back to initial question to output txt file based on html file that I have and I will download manually.

The html you posted seems to have been manipulated already.

Can you please post the original, unaltered html? It's probably easier to work once with the original instead of introducing intermediary steps.

Can you please post the original, unaltered html? It's probably easier to work once with the original instead of introducing intermediary steps.

ASKER

Of course. Please find attached html file.

Can we try the following two approaches:

1.Extract all lines that contains: https://www.experts-exchange.com/questions/XXX from an SourceFile.html and generate a txt file.

SourceFile.html will be located in same location of Powershell script.

2.Download the file from a reference link, Extract all lines that contains: https://www.experts-exchange.com/questions/XXX from file downloaded and generate a txt file with all lines extracted from downloaded file.

SourceFile.html

Can we try the following two approaches:

1.Extract all lines that contains: https://www.experts-exchange.com/questions/XXX from an SourceFile.html and generate a txt file.

SourceFile.html will be located in same location of Powershell script.

2.Download the file from a reference link, Extract all lines that contains: https://www.experts-exchange.com/questions/XXX from file downloaded and generate a txt file with all lines extracted from downloaded file.

SourceFile.html

Here's something for starters; it'll extract the link and the title from the line and create a csv as output.

This could easily be changed to process a directory with input files and create one output file.

This could easily be changed to process a directory with input files and create one output file.

$inFile = '.\SourceFile.html'

$outName = 'Questions.csv'

$scriptDir = Split-Path $MyInvocation.MyCommand.Path -Parent

$outFile = "$($scriptDir)\$($outName)"

# Sample line:

# <a href="https://www.experts-exchange.com/questions/29176165/Excel-VBA-keep-remove-text-based-on-character-add-in.html" class="result-not-top-ten" title="Excel VBA: keep & remove text based on character & add-in"><span>Excel VBA: keep & remove text based on character & add-in</span></a>

Get-Content -Path $inFile -Encoding UTF8 -ErrorAction Stop | Where-Object {$_ -match '<a\s+href="https://www\.experts-exchange\.com/questions/\d+/'} | ForEach-Object {

[xml]($_.Replace(' ', ' ')) | Select-Object -Property @{n='Title'; e={$_.a.Title}}, @{n='Link'; e={$_.a.href}}

} | Export-Csv -NoTypeInformation -Encoding UTF8 -Path $outFileASKER

Great news it works!

Know that we have the right mechanism can we download the html file as proposed by Ryan: Lines commented. Thank you in advance for your help.

Know that we have the right mechanism can we download the html file as proposed by Ryan: Lines commented. Thank you in advance for your help.

# ===========================================================================

# Author:

# Creation date: 2020/04/26 09:46:05

# Description: Download EE questions and get Title + URL

# ===========================================================================

#$CurrentDir = Split-Path $script:MyInvocation.MyCommand.Path

#$domain = "https://www.experts-exchange.com/members/LD16.html?headerLink=account_profile"

#$saveTo = $CurrentDir + '\File.txt'

#

##Example: $Root = $CurrentDir + '\Root2'

##Example: $FileVariable = Get-Content -Path "$($CurrentDir)\File.txt"

#$WebResponse = Invoke-WebRequest $domain

#

#$links = ($WebResponse.Links | Where-Object {$_.href -like "/*"}).href

#

#if (Test-Path $saveTo) {

# Remove-Item $saveTo

#}

#

#foreach ($link in $links)

#{

# $result = $domain + $link

# $result | Out-File $saveTo -Append

#}

$inFile = '.\SourceFile.html'

$outName = 'Questions.csv'

$scriptDir = Split-Path $MyInvocation.MyCommand.Path -Parent

$outFile = "$($scriptDir)\$($outName)"

# Sample line:

# <a href="https://www.experts-exchange.com/questions/29176165/Excel-VBA-keep-remove-text-based-on-character-add-in.html" class="result-not-top-ten" title="Excel VBA: keep & remove text based on character & add-in"><span>Excel VBA: keep & remove text based on character & add-in</span></a>

Get-Content -Path $inFile -Encoding UTF8 -ErrorAction Stop | Where-Object {$_ -match '<a\s+href="https://www\.experts-exchange\.com/questions/\d+/'} | ForEach-Object {

[xml]($_.Replace(' ', ' ')) | Select-Object -Property @{n='Title'; e={$_.a.Title}}, @{n='Link'; e={$_.a.href}}

} | Export-Csv -NoTypeInformation -Encoding UTF8 -Delimiter ";" -Path $outFile

Automatically downloading is probably too much work, sorry. You could ask EE support if they offer a REST API to access your questions, but otherwise, it's not just done with a simple "Invoke-WebRequest", because you need to logon to the site, and send all kinds of forms back until you reach the page you want to download. And that's only good until EE changes the site.

The script could be changed so that it appends to an existing csv, while avoiding duplicates. That way, you can create a baseline with all old questions, and every now and then download the current list and add the new ones?

The script could be changed so that it appends to an existing csv, while avoiding duplicates. That way, you can create a baseline with all old questions, and every now and then download the current list and add the new ones?

ASKER

Yes. I was thinking the same. I will manually save html and execute your script. In that case if I have already a csv files with previous questions how to append new questions to previous CSV file while removing duplicates. Let's imagine I already have 850 lines in my current CSV file. Next week I download html file. How to append to previous CSV file just new questions?

Hi Luis,

You have the file with your 828 questions (that existed at the time) that I posted at a previous question for you. As indicated at that question, it was a manual effort. It is a plain text file (so is a CSV), so all you have to do is add your new questions to it. I know you're a Notepad++ user...it will be easy with that or just about any text editor. Since you've been averaging about one question per day during the last year, it's a not a big effort to add the one URL each day (or occasionally a few and sometimes none)...definitely not worthy of automation, especially since EE doesn't make it easy. :) Regards, Joe

You have the file with your 828 questions (that existed at the time) that I posted at a previous question for you. As indicated at that question, it was a manual effort. It is a plain text file (so is a CSV), so all you have to do is add your new questions to it. I know you're a Notepad++ user...it will be easy with that or just about any text editor. Since you've been averaging about one question per day during the last year, it's a not a big effort to add the one URL each day (or occasionally a few and sometimes none)...definitely not worthy of automation, especially since EE doesn't make it easy. :) Regards, Joe

ASKER

Hi Joe, Indeed, I have already the file that you posted. The problem is that it doesn't contain the title + question url as it is proposed by oBdA through his Powershell script. As a result the idea is to save manually all HTML files save them and execute the script to generate a single CSV file. Then in the future. Once every two weeks. Save manually HTML file and just append new questions to the CSV file that contains all previous questions.

Hi Luis,

I must admit to not reading the whole thread carefully...I was just surprised to see the question, since I thought that the previous question solved the problem for you, so I jumped in...sorry about that. Anyway, the Title is essentially everything after the question ID and before the HTML file extension. I say "essentially" because it contains hyphens instead of spaces and it is missing some punctuation (ampersands, colons, periods, etc.), but are those differences important to you? For example, the file has these links:

https://www.experts-exchange.com/questions/29179154/Powershell-Execute-script-through-a-bat.html

https://www.experts-exchange.com/questions/29159099/Windows-Batch-Powershell-VBS-Script-copy-files-from-SourceFolder-which-don't-exist-in-DestinationFolder.html

The Titles that you can easily extract from them (everything after the last slash and before the .html) are:

Powershell-Execute-script-

Windows-Batch-Powershell-V

The actual titles are:

Powershell: Execute script through a .bat

Windows Batch & Powershell & VBS Script: copy files from SourceFolder which don't exist in DestinationFolder

I don't know what you plan to do with the Title, but I suspect that the difference between the actual Title and the one extracted from the URL is not an issue. But if I'm wrong about that, let me know. Regards, Joe

I must admit to not reading the whole thread carefully...I was just surprised to see the question, since I thought that the previous question solved the problem for you, so I jumped in...sorry about that. Anyway, the Title is essentially everything after the question ID and before the HTML file extension. I say "essentially" because it contains hyphens instead of spaces and it is missing some punctuation (ampersands, colons, periods, etc.), but are those differences important to you? For example, the file has these links:

https://www.experts-exchange.com/questions/29179154/Powershell-Execute-script-through-a-bat.html

https://www.experts-exchange.com/questions/29159099/Windows-Batch-Powershell-VBS-Script-copy-files-from-SourceFolder-which-don't-exist-in-DestinationFolder.html

The Titles that you can easily extract from them (everything after the last slash and before the .html) are:

Powershell-Execute-script-

Windows-Batch-Powershell-V

The actual titles are:

Powershell: Execute script through a .bat

Windows Batch & Powershell & VBS Script: copy files from SourceFolder which don't exist in DestinationFolder

I don't know what you plan to do with the Title, but I suspect that the difference between the actual Title and the one extracted from the URL is not an issue. But if I'm wrong about that, let me know. Regards, Joe

ASKER

Hi Joe,

Don't worry no issue. Your approach is also valid.

I see multiple benefits here having CSV file:

1.Avoid manual operations by using text editor. I am a big fans of MS Office application and CSV format is one of my favorite format.

If I can have this CSV file without having multiple operations, I am highly satisfied.

2.Better look and feel with two columns

3.Use Custom filter swiftly:

Select line: Shift+Space

Activate the filter: Ctrl+Shift+L

And perform a search based on the title that I want:

Shift+Down Arrow+F+A (one of my favorite)

Example: Filter on "Powershell" string.

This is personal vision but I insist, your approach is also valid.

Additionally the action plan here is to call the Powershell through my AutoHotkey script file through a simple key: example <!>q:: and directly have csv file.

If I forgot how to get my questions, I just call the shortcuts mapping file through Ctrl+Shift+F6 and I perform the right search to get the Hotkey. :-)

Don't worry no issue. Your approach is also valid.

I see multiple benefits here having CSV file:

1.Avoid manual operations by using text editor. I am a big fans of MS Office application and CSV format is one of my favorite format.

If I can have this CSV file without having multiple operations, I am highly satisfied.

2.Better look and feel with two columns

3.Use Custom filter swiftly:

Select line: Shift+Space

Activate the filter: Ctrl+Shift+L

And perform a search based on the title that I want:

Shift+Down Arrow+F+A (one of my favorite)

Example: Filter on "Powershell" string.

This is personal vision but I insist, your approach is also valid.

Additionally the action plan here is to call the Powershell through my AutoHotkey script file through a simple key: example <!>q:: and directly have csv file.

If I forgot how to get my questions, I just call the shortcuts mapping file through Ctrl+Shift+F6 and I perform the right search to get the Hotkey. :-)

Sounds good, Luis, thanks for the explanation. Regards, Joe

Try this; it extracts the ID as well (so the format is not compatible to the previous version), so you can sort by ID, which will basically sort it by date.

$inFile = '.\SourceFile.html'

$outName = 'Links.csv'

$scriptDir = Split-Path $MyInvocation.MyCommand.Path -Parent

$outFile = "$($scriptDir)\$($outName)"

$links = @{}

If (Test-Path -Path $outFile) {

Import-Csv -Path $outFile | ForEach-Object {$links[$_.ID] = $_}

}

# Sample line:

# <a href="https://www.experts-exchange.com/questions/29176165/Excel-VBA-keep-remove-text-based-on-character-add-in.html" class="result-not-top-ten" title="Excel VBA: keep & remove text based on character & add-in"><span>Excel VBA: keep & remove text based on character & add-in</span></a>

Get-Content -Path $inFile -Encoding UTF8 -ErrorAction Stop |

Where-Object {$_ -match '<a\s+href="https://www\.experts-exchange\.com/questions/(?<ID>\d+)/'} |

ForEach-Object {

$link = [xml]($_.Replace(' ', ' ')) | Select-Object -Property @{n='Title'; e={$_.a.Title}}, @{n='ID'; e={$Matches['ID']}}, @{n='Link'; e={$_.a.href}}

$links[$link.ID] = $link

}

$links.Keys | Sort-Object -Descending | ForEach-Object {$links[$_]} | Export-Csv -NoTypeInformation -Encoding UTF8 -Path $outFileASKER

Great! Thank you very much for this. I will test it and let you know!

ASKER

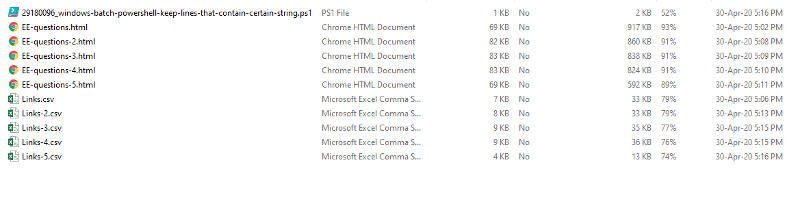

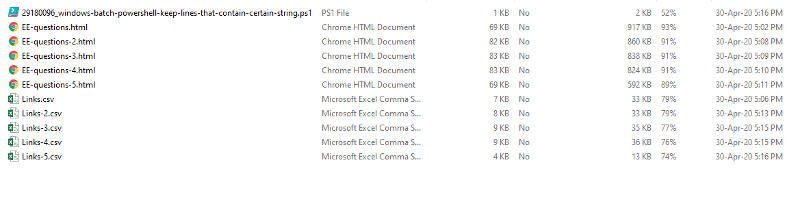

oBdA,

I was able to extract the various questions 5 files for each views:

However I start having error message as of export 3. Let me know if this is normal.

I send you attached csv files + html files.

Example of error message for export 5:

Regards,

Luis.

20200430171753_MultipleFiles.zip

I was able to extract the various questions 5 files for each views:

However I start having error message as of export 3. Let me know if this is normal.

I send you attached csv files + html files.

Example of error message for export 5:

15 char:46

+ Import-Csv -Path $outFile | ForEach-Object {$links[$_.ID] = $_}

+ ~~~~~~~~~~~~~~~~~~

+ CategoryInfo : InvalidOperation: (:) [], RuntimeException

+ FullyQualifiedErrorId : NullArrayIndexRegards,

Luis.

20200430171753_MultipleFiles.zip

Can't reproduce, but I suspect you already have an older file "Links-5.csv" there that doesn't have the ID column, or it uses a different delimiter than the ";" you added to the Export-Csv.

The Import-Csv in line 9 (when the script adds to an existing file) must use the same format as the Export-Csv in line 20.

You're aware that you don't need to change the $outName for each $inFile? They can all go into the same target csv.

Anyway, but I found another error - some titles in the new files contain unclosed <wbr> tags which HTML accepts, but XML complains about; this should fix it, and it will as well use the same delim for input and output csv:

The Import-Csv in line 9 (when the script adds to an existing file) must use the same format as the Export-Csv in line 20.

You're aware that you don't need to change the $outName for each $inFile? They can all go into the same target csv.

Anyway, but I found another error - some titles in the new files contain unclosed <wbr> tags which HTML accepts, but XML complains about; this should fix it, and it will as well use the same delim for input and output csv:

$inFile = '.\SourceFile.html'

$outName = 'Links.csv'

$delimiter = ';'

$scriptDir = Split-Path $MyInvocation.MyCommand.Path -Parent

$outFile = "$($scriptDir)\$($outName)"

$links = @{}

If (Test-Path -Path $outFile) {

Import-Csv -Path $outFile -Delimiter $delimiter | ForEach-Object {$links[$_.ID] = $_}

}

# Sample line:

# <a href="https://www.experts-exchange.com/questions/29176165/Excel-VBA-keep-remove-text-based-on-character-add-in.html" class="result-not-top-ten" title="Excel VBA: keep & remove text based on character & add-in"><span>Excel VBA: keep & remove text based on character & add-in</span></a>

Get-Content -Path $inFile -Encoding UTF8 -ErrorAction Stop |

Where-Object {$_ -match '<a\s+href="https://www\.experts-exchange\.com/questions/(?<ID>\d+)/'} |

ForEach-Object {

$link = [xml]($_.Replace(' ', ' ').Replace('<wbr>', '')) | Select-Object -Property @{n='Title'; e={$_.a.Title}}, @{n='ID'; e={$Matches['ID']}}, @{n='Link'; e={$_.a.href}}

$links[$link.ID] = $link

}

$links.Keys | Sort-Object -Descending | ForEach-Object {$links[$_]} | Export-Csv -NoTypeInformation -Encoding UTF8 -Path $outFile -Delimiter $delimiterASKER

Hi OBdA,

I didn't know that! Very good news.

With this in mind, is there a way to have a nice function with your last script and an example for calling single and multiple files. This function will be very useful as I am sure that I will re-use it if I encounter in the future a similar case.

Thank you for your help.

Regards,

Luis.

You're aware that you don't need to change the $outName for each $inFile? They can all go into the same target csv.

I didn't know that! Very good news.

With this in mind, is there a way to have a nice function with your last script and an example for calling single and multiple files. This function will be very useful as I am sure that I will re-use it if I encounter in the future a similar case.

Thank you for your help.

Regards,

Luis.

ASKER CERTIFIED SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.

ASKER

Thank you very much for this.

For avoiding having error messages, how should I set up the following line and get csv file with ";"?

Can I put at the end of the line -Delimiter $delimiter?

For avoiding having error messages, how should I set up the following line and get csv file with ";"?

$links.Keys | Sort-Object -Descending | ForEach-Object {$links[$_]} | Export-Csv -NoTypeInformation -Encoding UTF8 -Path $outFileCan I put at the end of the line -Delimiter $delimiter?

ASKER

For the record the following works nicely and I get the same number as shows on EE advanced search:

$inFiles = 'EE-questions-1.html'

#$inFiles = 'EE-questions-1.html','EE-questions-2.html','EE-questions-3.html','EE-questions-4.html','EE-questions-5.html'

$outName = 'EEQuestions.csv'

$delimiter = ';'

$scriptDir = Split-Path $MyInvocation.MyCommand.Path -Parent

$outFile = "$($scriptDir)\$($outName)"

$links = @{}

If (Test-Path -Path $outFile) {

Import-Csv -Path $outFile | ForEach-Object {$links[$_.ID] = $_}

}

ForEach ($inFile in $inFiles) {

Write-Host "Processing '$($inFile)'"

# Sample line:

# <a href="https://www.experts-exchange.com/questions/29176165/Excel-VBA-keep-remove-text-based-on-character-add-in.html" class="result-not-top-ten" title="Excel VBA: keep & remove text based on character & add-in"><span>Excel VBA: keep & remove text based on character & add-in</span></a>

Get-Content -Path $inFile -Encoding UTF8 -ErrorAction Stop |

Where-Object {$_ -match '<a\s+href="https://www\.experts-exchange\.com/questions/(?<ID>\d+)/'} |

ForEach-Object {

$link = [xml]($_.Replace(' ', ' ').Replace('<wbr>', '')) | Select-Object -Property @{n='Title'; e={$_.a.Title}}, @{n='ID'; e={$Matches['ID']}}, @{n='Link'; e={$_.a.href}}

$links[$link.ID] = $link

}

}

$links.Keys | Sort-Object -Descending | ForEach-Object {$links[$_]} | Export-Csv -NoTypeInformation -Encoding UTF8 -Path $outFile

Regression, sorry; edited above, just download again from https://www.experts-exchange.com/questions/29180096/Windows-Batch-Powershell-keep-lines-that-contain-certain-string.html?anchorAnswerId=43078012#a43078012

The delimiter must be present in two locations, one for Import-Csv, one for Export-Csv.

In the version you just posted, the delimiter would need to be added at line 10 (Import-Csv) and line 24 (Export-Csv)

In the version you just posted, the delimiter would need to be added at line 10 (Import-Csv) and line 24 (Export-Csv)

# Line 10:

Import-Csv -Path $outFile -Delimiter $delimiter | ForEach-Object {$links[$_.ID] = $_}

# Line 24:

$links.Keys | Sort-Object -Descending | ForEach-Object {$links[$_]} | Export-Csv -NoTypeInformation -Encoding UTF8 -Path $outFile -Delimiter $delimiterASKER

Ok, it wors! Thank you for your help!

Open in new window