Power BI append tables

So I have two queries, exactly the same columns plus a custom column that I use to tell what office the data belongs to.

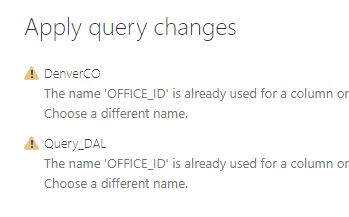

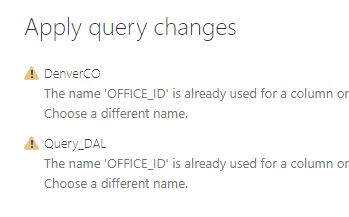

I am downloading sharepoint data from each office and the downloads are working. But when I try to append Denver data to the DAL data I get this.

That column is a custom column that has a number in it to identify where the data is from. The custom columns are exactly the same except for the value. Denver is 1 and DAL is 2.

Do I have to append to a new record set? From what I have read I did not think I needed to. I want to use the DAL table to be the main because all the graphs and such are built off of it and I did now want to have to reconnect them all to a new table.

Any suggestions?

Thanks!!!!

Jim

I am downloading sharepoint data from each office and the downloads are working. But when I try to append Denver data to the DAL data I get this.

That column is a custom column that has a number in it to identify where the data is from. The custom columns are exactly the same except for the value. Denver is 1 and DAL is 2.

Do I have to append to a new record set? From what I have read I did not think I needed to. I want to use the DAL table to be the main because all the graphs and such are built off of it and I did now want to have to reconnect them all to a new table.

Any suggestions?

Thanks!!!!

Jim

Is there two Office_ID columns in each of the files? The custom one and another?

ASKER

No, just one column in each file. It is a custom column that just has a number in it. In SQL I would use a UNION ALL to all the data from both tables. That is what I thought append did.

There is some minor problem that we are not seeing. You should be able to add the custom column with the same name to the two tables. Data types the same in the two custom columns?

There might be a solution without the custom columns if the source data file signifies the different regions. Is there a way you can share some of the data from the original files as a sample?

There might be a solution without the custom columns if the source data file signifies the different regions. Is there a way you can share some of the data from the original files as a sample?

You might try renaming your custom column OfficeID

ASKER

I can get the office for it's URL. That might be better. Let me try that and see what happens. And I will try taking the underscore out also Thanks!

You know there is functionality of combining Excel files (with standard format) from one folder, right? Then as new files are added to the folder it includes them in the consolidation. For instance if the folder had Jan and Feb, and the Mar was saved in the folder, Power Query would pick up all three. Not sure if that is applicable to you.

ASKER

No, I am reporting on SharePoint sites for the doc librarys so all my sources will be SharePoint List Online. Thanks for the tip though. Not sure if it shows but I am kind of new to Power BI. Lots of DBA experience but not BI.

Most of us are all new to PowerBI, but it is a great tool.

This might be helpful for your SharePoint connection:

https://www.google.com/search?q=PowerBI+from+sharepoint+folder&rlz=1C1GCEA_enUS887US891&oq=PowerBI+from+sharepoint+folder&aqs=chrome..69i57j0l7.9763j0j4&sourceid=chrome&ie=UTF-8#kpvalbx=_DfgaX-TsOYewtQX96ou4BQ18

https://www.google.com/search?q=PowerBI+from+sharepoint+folder&rlz=1C1GCEA_enUS887US891&oq=PowerBI+from+sharepoint+folder&aqs=chrome..69i57j0l7.9763j0j4&sourceid=chrome&ie=UTF-8#kpvalbx=_DfgaX-TsOYewtQX96ou4BQ18

ASKER

Right now my main issue is that I am having to pull data from production sites and they all contain 100's GB of data. I tried to refresh the DAL office and let it run overnight and still had not completed.

Is there a way to work with the data set already loaded and not let it refresh?

Is there a way to work with the data set already loaded and not let it refresh?

Do you know you connection type? Think the two main ones are import and direct connection.

ASKER

It is SharePoint List Online. I think I solved that small issue though. I am filtering on just the last few months of data and that seems to have cut it down quite a bit.

(EDIT: No this did not work it is still trying to go through the SharePoint site which is super super slow).

Now I just have to get the tables joined.

Thank you so much!

(EDIT: No this did not work it is still trying to go through the SharePoint site which is super super slow).

Now I just have to get the tables joined.

Thank you so much!

Hope that works! Post if you have problems. I have been recruiting others here on the site who have Power BI/DAX skills to participate on answering questions.

ASKER

Once I know what I am doing I will be glad to help as well.

So I fixed my issue of load time by selecting Not Include In Refresh for the query. Now it just works with the data in the data set and does not try to refresh.

I have a second table I created in excel and load a Office2. Same columns and values but a small subset of DAL. When I try to append or merge them it tells me that name is already used in DAL and in Office 2. So now I am back to not being able to join them.

I am going to be bald before this is over.

So I fixed my issue of load time by selecting Not Include In Refresh for the query. Now it just works with the data in the data set and does not try to refresh.

I have a second table I created in excel and load a Office2. Same columns and values but a small subset of DAL. When I try to append or merge them it tells me that name is already used in DAL and in Office 2. So now I am back to not being able to join them.

I am going to be bald before this is over.

Is it possible to share a subset of the two files, or some version close to see if I have the same issue? I would be doing it in Excel and not Power BI as I don't have that downloaded on this machine. But it should have the same issue I would think.

I am assuming you are appending similar to this video (which is very basic).

https://www.youtube.com/watch?v=jcHqWNAGV1w

The other thing to consider is the M code that brought the data in from SharePoint. When you are in the query mode if you go to View>AdvancedEditor you can see the M code for the query. This code is a good thing to know, though I am not good at it yet. But it is readable, and is the code for each of the steps in the query. Perhaps there is some issue with the naming of the OfficeID field.

https://www.youtube.com/watch?v=jcHqWNAGV1w

The other thing to consider is the M code that brought the data in from SharePoint. When you are in the query mode if you go to View>AdvancedEditor you can see the M code for the query. This code is a good thing to know, though I am not good at it yet. But it is readable, and is the code for each of the steps in the query. Perhaps there is some issue with the naming of the OfficeID field.

Jim, attached is a quick sample append for Dallas and Houston regions. Append is on the APPENDED TAB.

EE-2.xlsx

EE-2.xlsx

ASKER

Thank you, that looks to be exactly what I am trying to do.

I am trying a new method now. I just have the one table for DAL which is 750K rows. I mark it "not include in refresh " and then add a calculated column. The column is OfficeID and I just mark some records 1,2, or 3 based on the modified date.

Seems to work great and that would give me a give me a demo for my boss. Don't care right now if it is the right data or not.

Even though I mark it not included in refresh when I apply my changes it still tries to go the the original sharepoint site and I let it run for an hour and it had does not complete. I am thinking that it is trying to verifying all the rows and that will take days.

I even unhooked my PC from the network and internet and then when I apply changes it tells me it can't reach the sharepoint site and fails.

This should not be so hard. I just need to get the office selector working for my demo and it is killing me. So that means I just need to add an officeID column.

Like I said, if I have some test sites with a few thousand rows this would be a breeze or at least a mild wind. But I don't and the smallest site is 20 GB which I couldn't download in over 8 hours last night.

Any suggestions?

Thank you.

I am trying a new method now. I just have the one table for DAL which is 750K rows. I mark it "not include in refresh " and then add a calculated column. The column is OfficeID and I just mark some records 1,2, or 3 based on the modified date.

Seems to work great and that would give me a give me a demo for my boss. Don't care right now if it is the right data or not.

Even though I mark it not included in refresh when I apply my changes it still tries to go the the original sharepoint site and I let it run for an hour and it had does not complete. I am thinking that it is trying to verifying all the rows and that will take days.

I even unhooked my PC from the network and internet and then when I apply changes it tells me it can't reach the sharepoint site and fails.

This should not be so hard. I just need to get the office selector working for my demo and it is killing me. So that means I just need to add an officeID column.

Like I said, if I have some test sites with a few thousand rows this would be a breeze or at least a mild wind. But I don't and the smallest site is 20 GB which I couldn't download in over 8 hours last night.

Any suggestions?

Thank you.

After you put your SharePoint URL in you should have the ability to filter which files you need from the list. Are you doing that?

ASKER

No I am grabbing them all, I did try to use the date field to only get files past a certain time but it still ran for longer than I could wait (at least an hour).

ASKER

Here is the code for it minus the url

let

Source = SharePoint.Files("http://xxx/DAL/"),

#"Removed Columns" = Table.RemoveColumns(Source,{"Content", "Date accessed"}),

#"Inserted Text Between Delimiters" = Table.AddColumn(#"Removed Columns", "Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 6, 0), type text),

#"Renamed Columns" = Table.RenameColumns(#"Inserted Text Between Delimiters",{{"Text Between Delimiters", "Sub Folder"}}),

#"Expanded Attributes" = Table.ExpandRecordColumn(#"Renamed Columns", "Attributes", {"Size"}, {"Attributes.Size"}),

#"Renamed Columns1" = Table.RenameColumns(#"Expanded Attributes",{{"Attributes.Size", "Size"}}),

#"Changed Type" = Table.TransformColumnTypes(#"Renamed Columns1",{{"Size", Int64.Type}}),

#"Renamed Columns2" = Table.RenameColumns(#"Changed Type",{{"Size", "Bytes"}}),

#"Removed Columns1" = Table.RemoveColumns(#"Renamed Columns2",{"Date created"}),

#"Added Index" = Table.AddIndexColumn(#"Removed Columns1", "Index", 1, 1),

#"Inserted Text Between Delimiters1" = Table.AddColumn(#"Added Index", "Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 5, 0), type text),

#"Renamed Columns3" = Table.RenameColumns(#"Inserted Text Between Delimiters1",{{"Text Between Delimiters", "Sub Folder 2"}}),

#"Added Conditional Column" = Table.AddColumn(#"Renamed Columns3", "OfficeID", each if [Date modified] < #datetime(2017, 12, 31, 0, 0, 0) then 1 else if [Date modified] > #datetime(2019, 1, 1, 0, 0, 0) then 2 else 3)

in

#"Added Conditional Column"

What we are doing is work in process.

Below are the step performed in the query yu provided me. Each beginning with =....

Did you make all those changes on the SharePoint file? Do you know what each of these did?

"let

Source = SharePoint.Files("http://xxx/DAL/"),

= Table.RemoveColumns(Source,{"Content", "Date accessed"}),

= Table.AddColumn("Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 6, 0), type text),

= Table.RenameColumns({{"Text Between Delimiters", "Sub Folder"}}),

= Table.ExpandRecordColumn("Attributes", {"Size"},{"Attributes.Size"}),

= Table.RenameColumns({{"Attributes.Size", "Size"}}),

= Table.TransformColumnTypes({{"Size", Int64.Type}}),

= Table.RenameColumns({"Size", "Bytes"}}),

= Table.RemoveColumns({"Date created"}),

= Table.AddIndexColumn("Index", 1, 1),

= Table.AddColumn(Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 5, 0), type text),

= Table.RenameColumns({{"Text Between Delimiters", "Sub Folder 2"}}),

= Table.AddColumn("OfficeID", each if [Date modified] < #datetime(2017, 12, 31, 0, 0, 0) then 1 else if [Date modified] > #datetime(2019, 1, 1, 0, 0, 0) then 2 else 3)

in

#"Added Conditional Column"

Below are the step performed in the query yu provided me. Each beginning with =....

Did you make all those changes on the SharePoint file? Do you know what each of these did?

"let

Source = SharePoint.Files("http://xxx/DAL/"),

= Table.RemoveColumns(Source,{"Content", "Date accessed"}),

= Table.AddColumn("Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 6, 0), type text),

= Table.RenameColumns({{"Text Between Delimiters", "Sub Folder"}}),

= Table.ExpandRecordColumn("Attributes", {"Size"},{"Attributes.Size"}),

= Table.RenameColumns({{"Attributes.Size", "Size"}}),

= Table.TransformColumnTypes({{"Size", Int64.Type}}),

= Table.RenameColumns({"Size", "Bytes"}}),

= Table.RemoveColumns({"Date created"}),

= Table.AddIndexColumn("Index", 1, 1),

= Table.AddColumn(Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 5, 0), type text),

= Table.RenameColumns({{"Text Between Delimiters", "Sub Folder 2"}}),

= Table.AddColumn("OfficeID", each if [Date modified] < #datetime(2017, 12, 31, 0, 0, 0) then 1 else if [Date modified] > #datetime(2019, 1, 1, 0, 0, 0) then 2 else 3)

in

#"Added Conditional Column"

ASKER CERTIFIED SOLUTION

membership

This solution is only available to members.

To access this solution, you must be a member of Experts Exchange.

ASKER

I inherited this but I do understand the process. The SHAREPOINT.FILES returns a table of contents which is what I am reporting on. The steps below are all a sequence so the step above is passed to the next step and then that step works on the data from first step.

Thanks!!!!

Connection parameters. Uses your Microsoft Account to grant access.

Source = SharePoint.Files("http://xxx/DAL/"),

Removes the columns Content and Date Accesed. I believe that Content is the actual file in a BLOB and that is why it so slow.

= Table.RemoveColumns(Source,{"Content", "Date accessed"}),

Adds a column based on folder path to break it down by // so tokenizes it to get directory folder

= Table.AddColumn("Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 6, 0), type text),

Renames the resulting column Sub Folder

= Table.RenameColumns({{"Text Between Delimiters", "Sub Folder"}}),

Next four steps get the byte size of the file from Attributes column and changes it to int64 column named Size

= Table.ExpandRecordColumn("Attributes", {"Size"},{"Attributes.Size"}),

= Table.RenameColumns({{"Attributes.Size", "Size"}}),

= Table.TransformColumnTypes({{"Size", Int64.Type}}),

= Table.RenameColumns({"Size", "Bytes"}}),

Remove date created column

= Table.RemoveColumns({"Date created"}),

This is a row number auto filled so first is 1 an second is 2 and so on. Unique Id for row

= Table.AddIndexColumn("Index", 1, 1),

Parses the data in path again to get sub folder 2

= Table.AddColumn(Text Between Delimiters", each Text.BetweenDelimiters([Folder Path], "/", "/", 5, 0), type text),

= Table.RenameColumns({{"Text Between Delimiters", "Sub Folder 2"}}),

This is my conditional column to put in the office number 1, 2, or 3 based on date range. This is to simulate different office branches even though all the records are from one office

= Table.AddColumn("OfficeID", each if [Date modified] < #datetime(2017, 12, 31, 0, 0, 0) then 1 else if [Date modified] > #datetime(2019, 1, 1, 0, 0, 0) then 2 else 3)

Thanks!!!!

ASKER

I finally got it working. Thank you so much your help. The adding the office number to the existing dataset finally finished and works.

Again thanks for you help and if I can return the favor just ask!

Again thanks for you help and if I can return the favor just ask!

Sure, Jim. Not sure we got all the way, but this is a learning for all of us. The PowerBI is relatively new to most of us. Keep the questions coming..

Jim - I found this online. If you have time to read through the email string it might add to your knowledge.

https://social.technet.microsoft.com/Forums/Lync/en-US/37765819-f2b8-48f6-aaa9-3b9a8716a947/sharepoint-online-list-power-bi-slow-query-update?forum=powerquery

https://social.technet.microsoft.com/Forums/Lync/en-US/37765819-f2b8-48f6-aaa9-3b9a8716a947/sharepoint-online-list-power-bi-slow-query-update?forum=powerquery