VMware disks need consolidation after Veeam failed to remove a snapshot

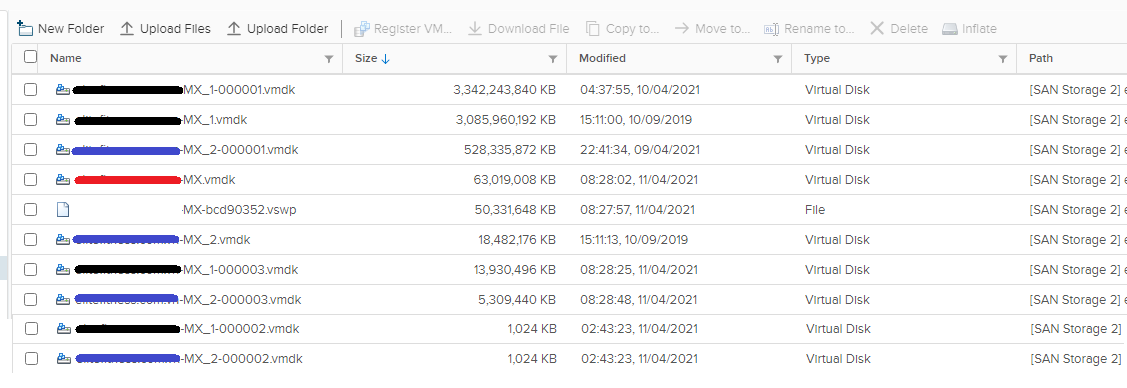

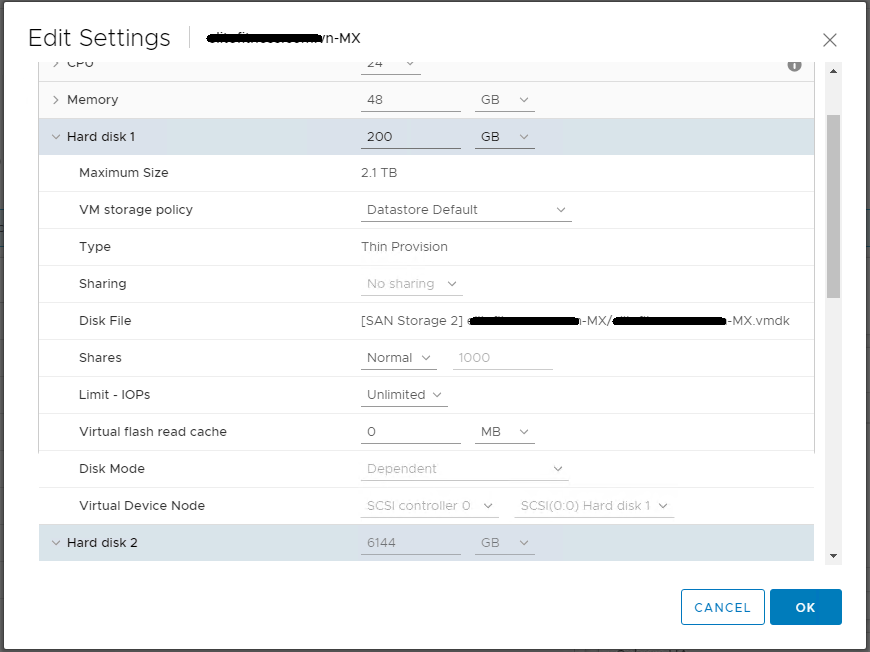

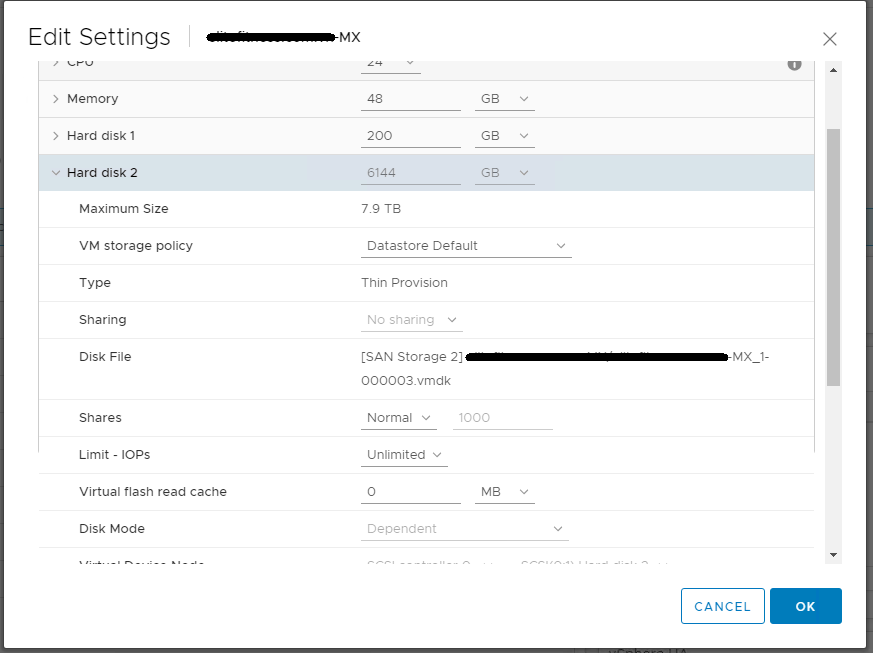

Dear Experts, after Veeam failed to remove a snapshot of a VM, vSphere said that its disks need to be consolidated. I let consolidation run through the night, but it does not seem to have worked. The VM is still running on a snapshot, because I can see "...MX-000001.vmdk" in Settings.

I guess the cause is low storage space. I deleted some junk data on the SAN, but I'm not sure whether that frees enough space for the new snapshot that will be taken tonight.

Should I still follow these steps?

1. Power off the VM

2. Take a snapshot

3. Delete all snapshots

4. Power on the VM

Is there anything to consider? When the snapshots are removed at step 3, we will have to wait for the disks to be consolidated. Am I right?

Many thanks!

ASKER

Hi Andy, I'm not sure why, but the VM has still been running on a snapshot since yesterday.

Is there any problem with that? Should we let Veeam do its job again tonight, or remove the snapshot manually?

If Veeam failed to remove the snapshot once, it is likely to fail again, making the situation worse. Also, the snapshot grows by the minute, VM performance will be affected, and there is a chance of snapshot corruption, so you should not ignore it; deal with it!

ASKER

Okay, so what should I do in this case? I just checked the Veeam logs: the job completed with a green check, and it said that the VM snapshot is stuck and it will attempt to consolidate periodically. The VM has been running in production for 12 hours.

Do you think this script will work: https://pelegit.co.il/delete-stuck-veeam-snapshot-on-virtual-machine/

You've got two options: power off the VM and remove the snapshot correctly, or remove it whilst powered on.

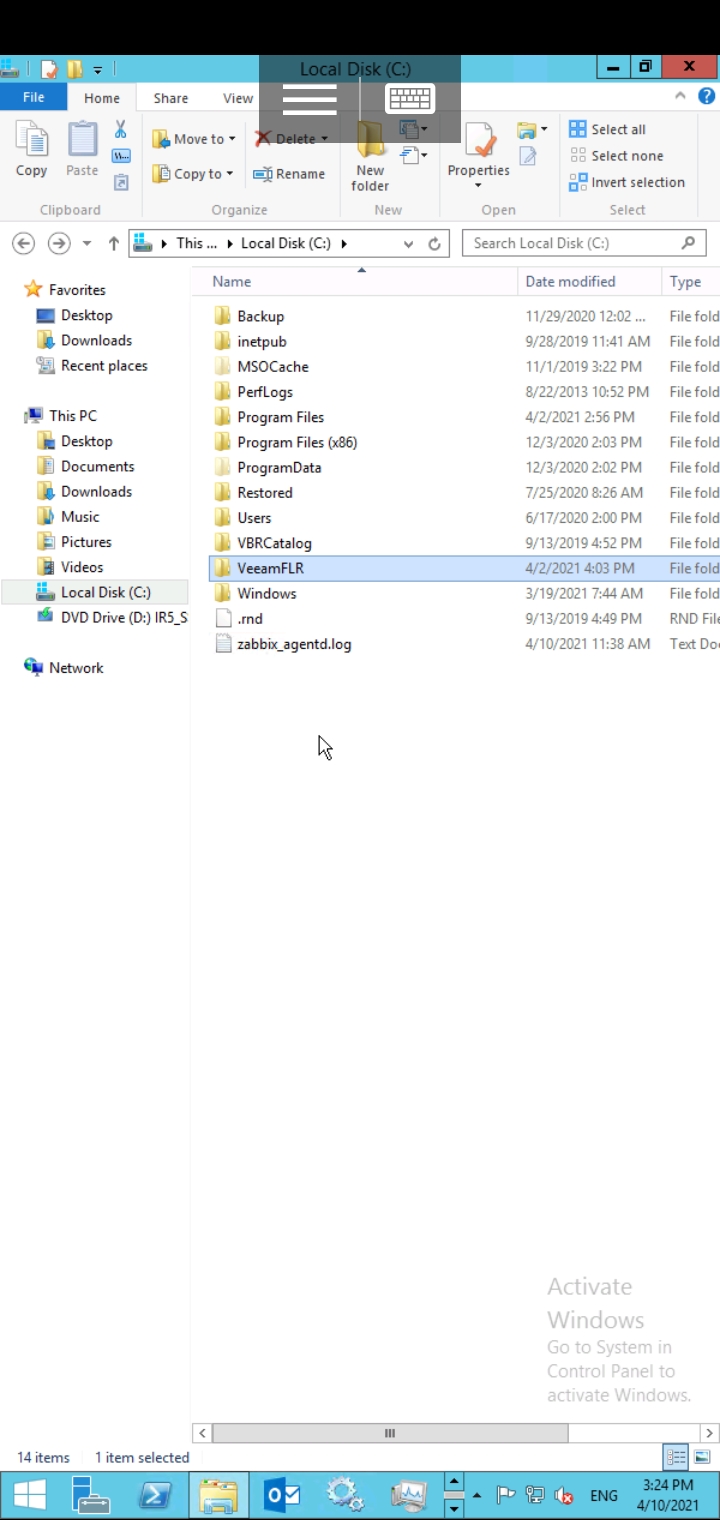

Check that the parent disk is not attached to the Veeam VM.

Don't use the Consolidate option.

Take a new snapshot.

Wait 120 seconds.

Then select DELETE ALL.

Wait and be patient.
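For anyone who prefers to script the sequence above, here is a minimal sketch in Python. It assumes the pyVmomi SDK: `vm` is taken to be a `vim.VirtualMachine`, whose `CreateSnapshot_Task` and `RemoveAllSnapshots_Task` methods are called, and `wait_for_task` is any function that blocks until a vSphere task finishes (pyVmomi ships `pyVim.task.WaitForTask` for this). The snapshot name is hypothetical, and the check that no parent disk is attached to the Veeam VM is left as a manual step.

```python
import time
from typing import Any, Callable


def snapshot_then_delete_all(
    vm: Any,
    wait_for_task: Callable[[Any], Any],
    settle_seconds: float = 120,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Take a fresh snapshot, pause, then DELETE ALL (no separate Consolidate).

    `vm` is assumed to be a pyVmomi vim.VirtualMachine; `wait_for_task`
    blocks until a vSphere task completes (e.g. pyVim.task.WaitForTask).
    """
    # Step 1: take a new snapshot, without memory and without quiescing.
    task = vm.CreateSnapshot_Task(
        name="pre-delete-all",  # hypothetical snapshot name
        description="Temporary snapshot before DELETE ALL",
        memory=False,
        quiesce=False,
    )
    wait_for_task(task)

    # Step 2: wait 120 seconds, as advised in the thread.
    sleep(settle_seconds)

    # Step 3: DELETE ALL, which merges every delta into the parent disks.
    # This can run for hours: do not cancel it, reboot the host or stop services.
    wait_for_task(vm.RemoveAllSnapshots_Task())
```

Injecting `wait_for_task` and `sleep` keeps the sketch testable without a vCenter connection; in real use you would pass `WaitForTask` and leave `sleep` at its default.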

ASKER

If you have no disks connected to the Veeam VM, then you can proceed.

ASKER

It has been running for half an hour and progress is at 2%. How fast does it run? Can it run faster? I really want it finished before Sunday night.

How long is a piece of string? 30 minutes is nothing. My advice: go away and come back in 4-5 hours.

It could take seconds, minutes, hours or days, but it will complete. The important thing is that you do not mess with the process (stop it, cancel it, restart the host or stop the services); just wait and be patient. The speed of the merge depends on two things:

1. size of snapshot.

2. speed of datastore.

Please read my EE article:

HOW TO: VMware Snapshots :- Be Patient

ASKER

Hi, it seems to be done, but only for 1 of the 3 disks; the others are still running on a snapshot. Should I repeat it?

I repeated it. Let's wait some time.

That's rather odd, as all disks should be done at the same time.

Have you checked to confirm the VM is actually running on them? See my article above for how to check.

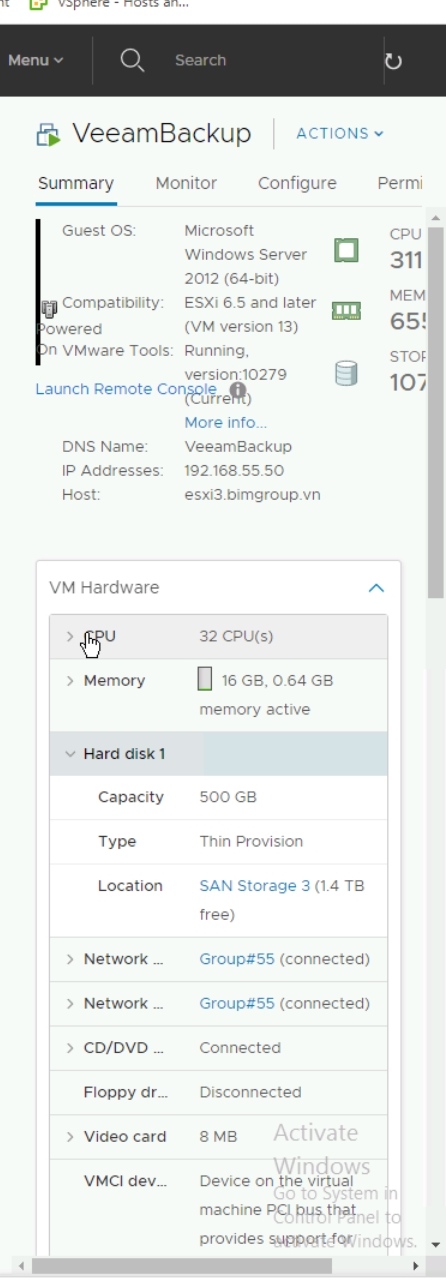

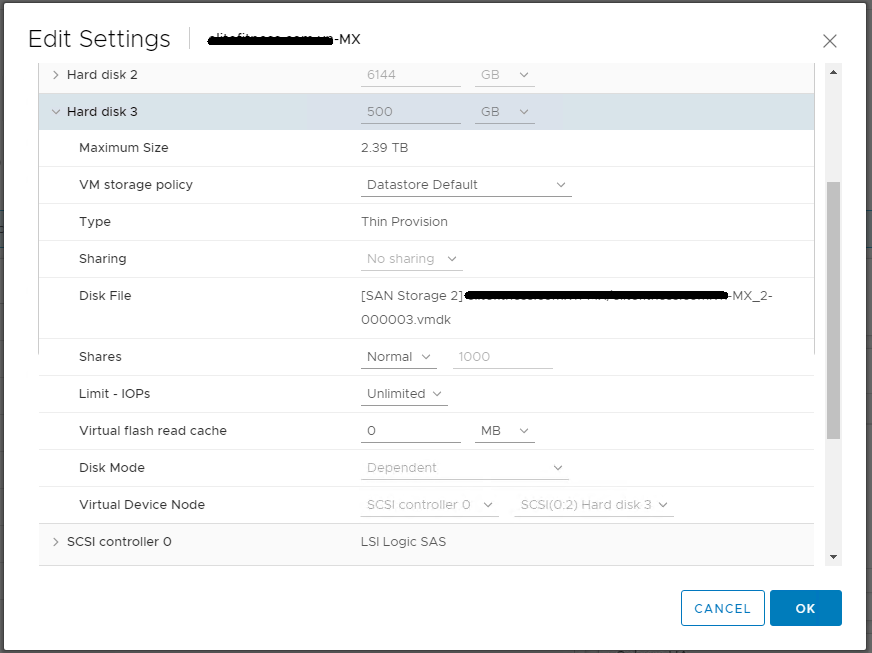

The screenshot above just shows a single 500 GB disk?

The snapshot number has not increased, e.g. to -000002.vmdk.

ASKER

Yes, but that picture is of the Veeam VM, not the problematic one. I checked in Edit Settings, and only the C drive is running on its parent disk. It also shows a consolidation warning on the screen.

ASKER

Okay, that's the Veeam VM.

If you select DELETE ALL, do they disappear?

ASKER

Just before posting this update, I checked and did not see any snapshot in Snapshot Manager (there should be one now, because I created it). The task history showed that last night's remove process completed OK after 4 hours. I'm not sure why 2 of the 3 disks are still running on a snapshot. The new remove process is now at 33%.

I guess there is not much I can do while it deletes, so I will come back in a few hours. What should I do after it finishes?

1. Check Snapshot Manager for any snapshots listed.

2. Check the VM folder for any snapshot files (-00000x.vmdk).

3. Check the disk properties to see whether the VM is writing to a snapshot file (-00000x.vmdk).
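Step 2 can be done from SSH with `ls` in the VM's folder. As a sketch, assuming you paste the file names into Python, the helper below groups snapshot descriptors (`<disk>-00000x.vmdk`) by base disk. It ignores `-flat`, `-ctk`, `-delta` and `-sesparse` files, which hold data or change-tracking information rather than being descriptors. The file names in the example are hypothetical, modeled on the MX disks in this thread.

```python
import re
from collections import defaultdict

# A snapshot descriptor is "<disk>-<6 digits>.vmdk". Its data file adds
# "-delta" or "-sesparse", and "-flat"/"-ctk" files belong to the base disk
# or to change tracking, so none of those match this pattern.
SNAPSHOT_DESCRIPTOR = re.compile(r"^(?P<disk>.+)-(?P<num>\d{6})\.vmdk$")


def find_snapshot_descriptors(filenames):
    """Map each base disk name to the sorted snapshot numbers found for it."""
    chains = defaultdict(list)
    for name in filenames:
        match = SNAPSHOT_DESCRIPTOR.match(name)
        if match:
            chains[match.group("disk")].append(int(match.group("num")))
    return {disk: sorted(nums) for disk, nums in chains.items()}
```

Any disk that appears in the result still has snapshot files in the folder, even if Snapshot Manager shows nothing; step 3 then tells you whether the VM is actually writing to them.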

ASKER

Hi,

1. No, I see no snapshot in Snapshot Manager.

2. Yes, it still has the delta files.

3. Yes, it still has 2 disks running on a snapshot.

What should we do?

Take a new snapshot.

Wait 120 seconds.

Then select DELETE ALL.

Wait and be patient.

ASKER

Is there any other option?

I did it twice, but nothing changed.

Can you send me screenshots of the disk properties?

And a listing of all the folder contents from SSH/console.

ASKER

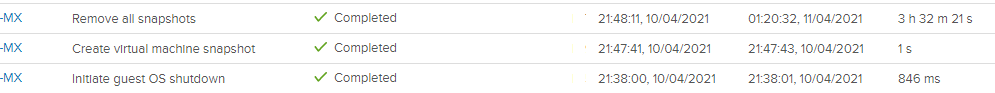

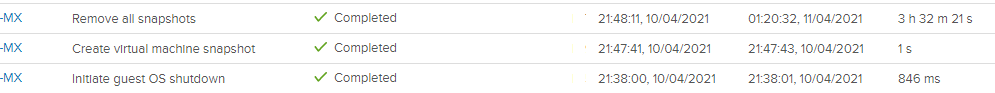

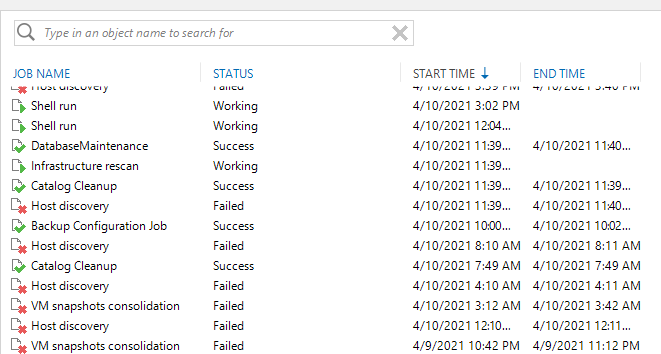

To recap: it happened on 9 April, when my backup completed OK but said the VM snapshot was stuck with "operation timeout". This is the log file (history) in Veeam:

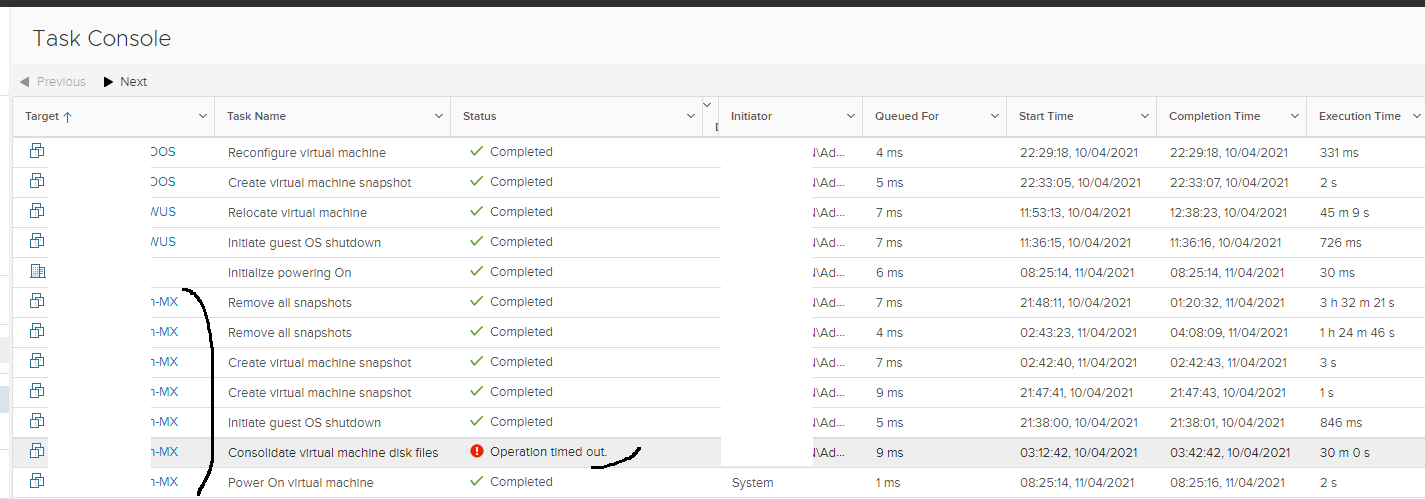

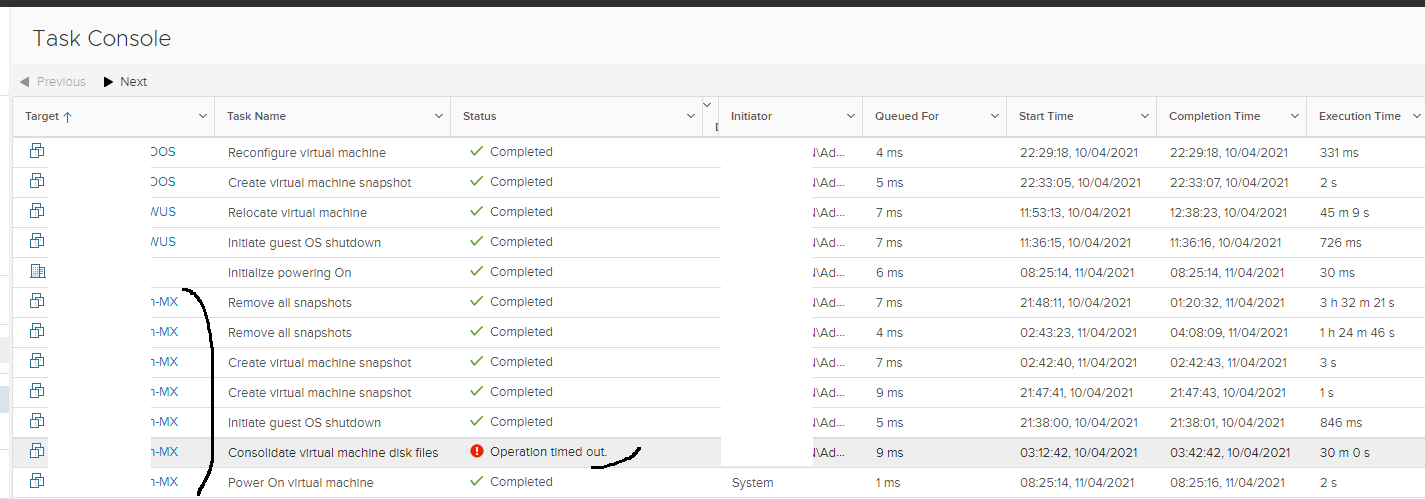

This is the task console in vCenter.

That was the only time disk consolidation ran automatically, and it failed. Since then we have created a snapshot and run DELETE ALL twice, but only 1 of the 3 disks came off the snapshot; the other 2 remain.

For now I have had to start the VM, and it is running from snapshots. Is that OK?

ASKER

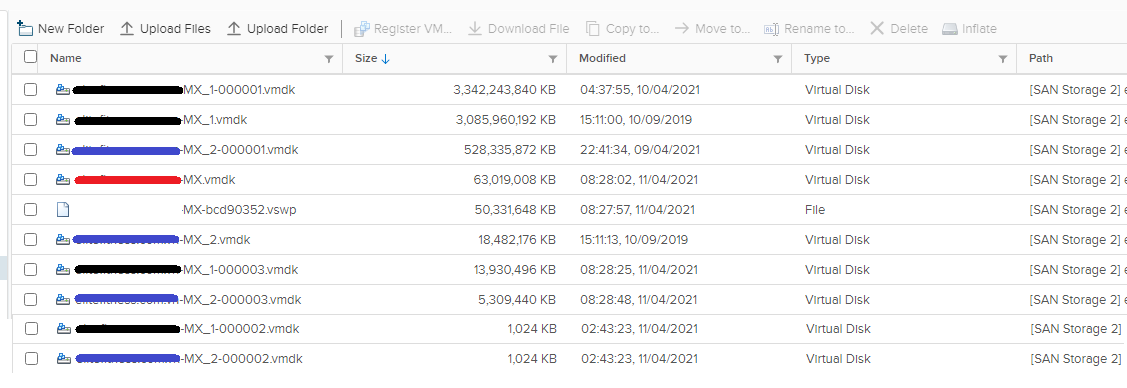

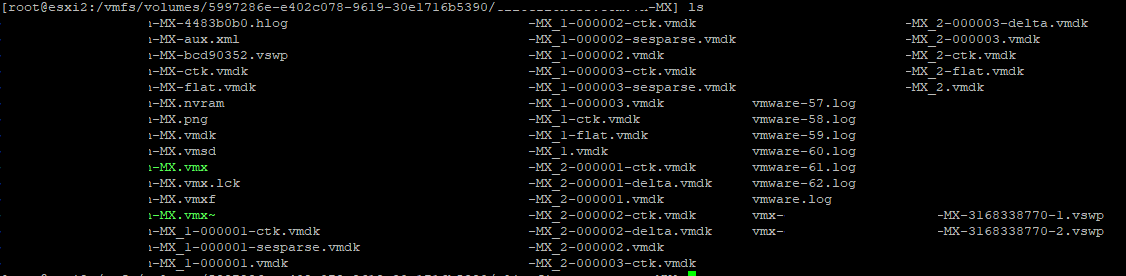

Is there any way to check the creation date of the snapshot files? I can see that the child disk is even larger than the base.

So if I understand correctly, 000001.vmdk is now the base, not the .vmdk (maybe its snapshot was deleted?).

The order is .vmdk => 000001.vmdk => 000002.vmdk => 000003.vmdk.

What if we delete the .vmdk and consolidate the disks so that they commit to 000001.vmdk? Is that possible?

Yes, it does confirm the VM is running on a snapshot.

Please just check that Veeam does not have any of these disks attached.

Is there space on this datastore?

ASKER

Hi, no, the Veeam VM does not have any of those disks attached.

We have about 1.9 TB on this SAN datastore (storage 2).

I created a folder in that VM's datastore and moved the MX_2.vmdk file into it, but a "flat" file appeared with almost the same size as MX_2_000001.vmdk, so I moved it back.

Okay, there is another process we can do to try and get rid of the snapshot, and that is to CLONE the entire VM.

The resulting VM (CLONE) will have no snapshots; then use the CLONE.

(So power off the VM, then CLONE it.)

It seems that something has locked the parent VM disks, which is preventing the snapshots from merging.

ASKER

Yup, but the clone will need about 5 TB, whereas the datastore has only 1.9 TB.

We intend to map an iSCSI disk (from a Synology NAS) to the ESXi host as a new datastore and clone the VM to it.

What do you think?

Any datastore with sufficient space will do.

I'm wondering whether it cannot merge because of a space issue. Did you try with the VM powered off?

It should not matter whether you use ESXi or vCenter Server to DELETE ALL snapshots.

ASKER

Yes, the VM was turned off every time I tried. OK, but if we clone the VM, which disk will it use as the base?

.vmdk or 00001.vmdk?

When the CLONE operation runs, it will merge all the changes:

0003.vmdk > 0002.vmdk > 0001.vmdk > parent.vmdk

So the final CLONE VM will just have a single parent.vmdk file with zero snapshots.

If the CLONE fails to complete or gives an error, something else is wrong.
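To see why the CLONE ends up with a single disk, here is a toy model in Python (an illustration only, not VMware's on-disk format). Each delta is assumed to hold only the blocks written while it was the active disk, and the clone writes out the newest version of every block along the chain 0003 > 0002 > 0001 > parent. Applying the deltas oldest first, so that newer writes overwrite older ones, gives the same result as reading newest first.

```python
def flatten_chain(parent, deltas):
    """Toy model of what CLONE writes: one flat disk with no snapshots.

    parent: dict of block number -> contents (the base .vmdk)
    deltas: list of dicts, oldest first (-000001, -000002, -000003);
            each holds only the blocks written while it was the active disk.
    """
    flat = dict(parent)  # start from a copy of the parent; the source is not modified
    for delta in deltas:  # apply oldest to newest, so newer writes win
        flat.update(delta)
    return flat


parent = {0: "boot", 1: "a", 2: "b"}
chain = [{1: "a1"}, {2: "b2", 3: "new"}, {1: "a3"}]
print(flatten_chain(parent, chain))
```

In this model, block 0 was never rewritten and exists only in the parent, which suggests why the parent disk is still needed even when the deltas are larger than it.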

ASKER

Hi, should we leave the VM on while cloning it?

In that case, if we start the clone on Monday and it completes on Friday, will the clone contain all the data up to completion, or just Monday's data?

To ensure the data is intact with no issues, power off the VM.

And then CLONE.

You will know in a matter of minutes if there are issues with the VM, you'll get an error.

ASKER

Yes, I just tried cloning another, smaller VM to the iSCSI LUN datastore; it took 40 minutes to copy 160 GB of data (with the VM still online).

Data written during those 40 minutes is not included in the clone. For 6 TB of data, if I estimate correctly, it will take about 25 hours to complete ;(
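The 25-hour figure is a straight linear extrapolation, sketched below: 160 GB in 40 minutes is 4 GB per minute, so 6,000 GB takes 1,500 minutes, or 25 hours (about 25.6 hours if 6 TB is counted as 6,144 GB). It assumes the big VM copies at the same rate as the small test, which may not hold, since copy and merge speed depend on the snapshot size and the datastore speed.

```python
def estimate_hours(sample_gb, sample_minutes, target_gb):
    """Linearly extrapolate copy time (in hours) from a measured test clone."""
    if sample_gb <= 0 or sample_minutes <= 0:
        raise ValueError("sample size and duration must be positive")
    gb_per_minute = sample_gb / sample_minutes
    return target_gb / gb_per_minute / 60


print(round(estimate_hours(160, 40, 6000), 1))      # 25.0
print(round(estimate_hours(160, 40, 6 * 1024), 1))  # 25.6
```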

Otherwise, you will need to reach out to VMware Support for assistance, because they can get remote, hands-on access to whatever is preventing the snapshot from merging.

Hi,

Just a shot in the dark: you could check the VMDKs' IDs.

Sometimes this goes wrong with backup or replication software.

You could check this for each virtual disk.

For example, for Disk 1, named disk1.vmdk, if you have 2 snapshots you will have these files in the VM's folder (in SSH/console):

disk1.vmdk

disk1-000001.vmdk

disk1-000002.vmdk

These are only descriptors.

If you read these text files (cat file_name), you'll see the ID of the VMDK that file describes, and its parent ID.

In the example, you should have:

disk1's ID = disk1-000001's parent ID

disk1-000001's ID = disk1-000002's parent ID

I hope you see the logic. Check whether the chain looks right.

Sometimes, when this is all messed up, you have ID=ffffffff, which means no ID. The exception is the first file's parent ID: it has no parent, so that's normal.
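A minimal sketch of that check in Python. It assumes the descriptor text contains `CID=` and `parentCID=` lines, which is how VMDK descriptors record the ID and parent ID described above, and that you supply the descriptors in chain order, base first (for example, pasted from `cat`). The file names and CID values in the test are made up.

```python
import re

NO_PARENT = "ffffffff"  # parentCID value used when a disk has no parent
_FIELD = re.compile(r"^\s*(CID|parentCID)\s*=\s*([0-9a-fA-F]+)\s*$")


def parse_descriptor(text):
    """Return the CID and parentCID values found in a VMDK descriptor."""
    fields = {}
    for line in text.splitlines():
        match = _FIELD.match(line)
        if match:
            fields[match.group(1)] = match.group(2).lower()
    return fields


def check_chain(descriptors):
    """Check a disk chain given as (filename, descriptor text), base first.

    Returns a list of problems; an empty list means every child's parentCID
    equals its parent's CID and the base disk has no parent.
    """
    if not descriptors:
        return ["no descriptors given"]
    parsed = [(name, parse_descriptor(text)) for name, text in descriptors]
    problems = []
    base_name, base = parsed[0]
    if base.get("parentCID") != NO_PARENT:
        problems.append(f"{base_name}: expected parentCID={NO_PARENT} on the base disk")
    # Compare each link: child parentCID must equal the CID of the file before it.
    for (parent_name, parent), (child_name, child) in zip(parsed, parsed[1:]):
        if child.get("parentCID") != parent.get("CID"):
            problems.append(
                f"{child_name}: parentCID={child.get('parentCID')} "
                f"but {parent_name} has CID={parent.get('CID')}"
            )
    return problems
```

An empty result only says the IDs line up; it does not prove the data in the deltas is intact.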

If all your files are still there in VM folder you could probably get it back.

But yes, this could be a support call to VMware, as Andrew has stated.

Also what is your Veeam version?

ASKER

My Veeam version is 9.5.

Last Sunday I turned off the VM and started the clone, but about 12 hours later we had to stop the process because users were screaming. Is there any way to start the VM during the cloning process?

No. If the VM is powered off and you start the CLONE, you cannot power it back on.

I'm sure you'll understand that if you try to CLONE whilst users are using the server and changing data, you may end up with an inconsistent state and risk corrupt or lost data.

Hi,

Veeam 9.5 should be fine. You could update to v10, but v9.5 is not that old and is not specifically known for big issues of this type.

As for starting the VM during the cloning process: no. You have to cancel/stop the clone first; then you will have the option to start the VM.

ASKER

Hi, should we follow this article? https://www.experts-exchange.com/articles/29387/Veeam-Proxy-issue-Removing-Veeam-ghost-snapshots.html

1. Move all ctk files to a folder

2. Turn off the VM

3. Create a new VM snapshot, then Delete All

4. Turn on the VM

5. Delete the folder of ctk files

Our VM is running on its 33rd snapshot :(((

Does the Veeam Backup VM have any disk of this VM attached?

I would still CLONE the VM.

ASKER

Hi, I checked, and no disks are attached to the Veeam VM.

So you'll need to CLONE and create a new VM.

ASKER

Hi, we just added 20 TB more to the datastore. I want to try disk consolidation at least one more time before cloning. How much space does disk consolidation require? The VM's size is about 7 TB.

The issue is that you possibly have too many snapshots.

Do you get errors, when you try to delete the snapshot ?

ASKER

Yes, in the Veeam console result, it said that "... has stuck VM snapshot, will attempt to consolidate periodically"

It also shows "operation time out" in vCenter.

Have you manually tried to delete the snapshot? I'm not talking about Veeam.

1. Take a manual snapshot.

2. Wait 120 seconds.

3. Select DELETE ALL, then wait and be patient; it will take a long time.

If this does not work, you have no option but to try CLONE (re-read all of the above):

1. Power off the VM.

2. CLONE.

3. WAIT.

Yes, it's going to take a long time, and the VM and service are going to be down, so schedule it out of hours.

ASKER

OK, how can I check the consolidation and cloning from the ESXi CLI? It has been running since Friday night.

You can either just use CLONE from the GUI, which is much easier and less work, or use CLONE from the ESXi CLI (more work).

I would recommend just powering off the VM, then right-click and CLONE.

Do you have vCenter Server?

ASKER

Yes, I have vCenter, but I really want to try consolidation before cloning. Its status is 1% now :((

Perhaps I will let it run for a few more days.

If you have selected DELETE ALL, you cannot cancel now.

ASKER

Actually, I clicked Consolidate, and I stopped it when the SAN storage warned about an increased size of 9 TB. I checked, and it still has 33 snapshots.

Be warned: you risk corrupting the virtual machine!

You cannot avoid using storage to fix this snapshot mess!

And with every hour, the snapshot will get larger, causing more performance issues in the guest VM.

What is the total size of the snapshots now, and how many snapshots are there?

What is this VM? Is it Exchange?

Have a read of my EE article:

HOW TO: VMware Snapshots :- Be Patient

ASKER

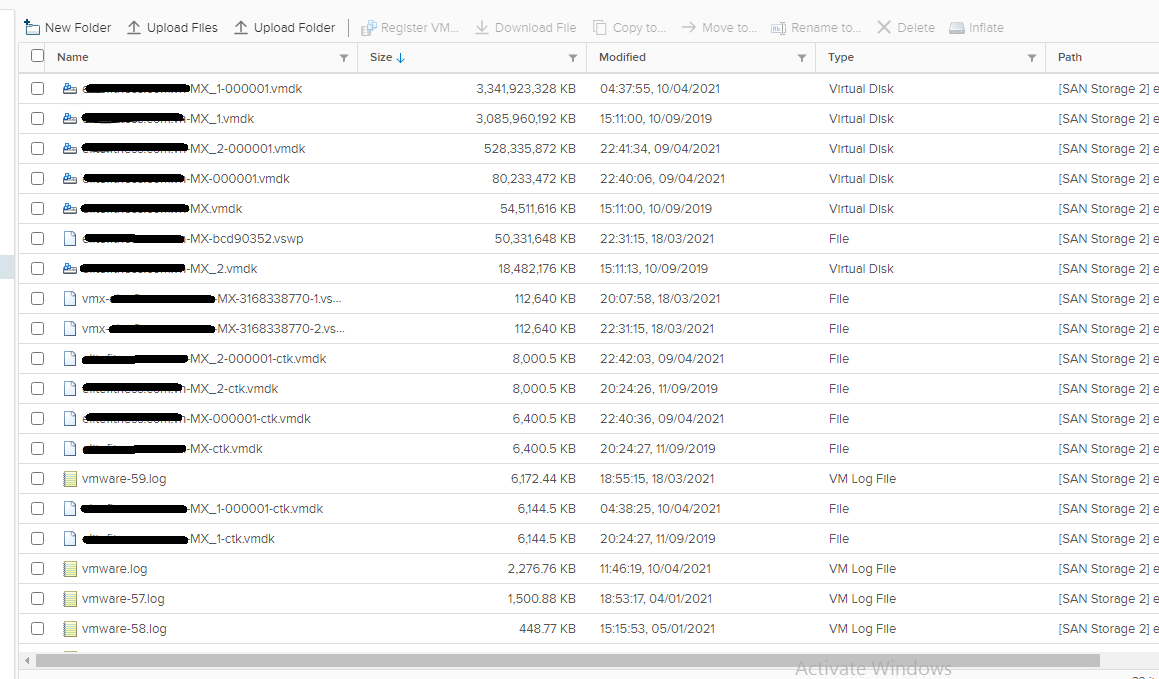

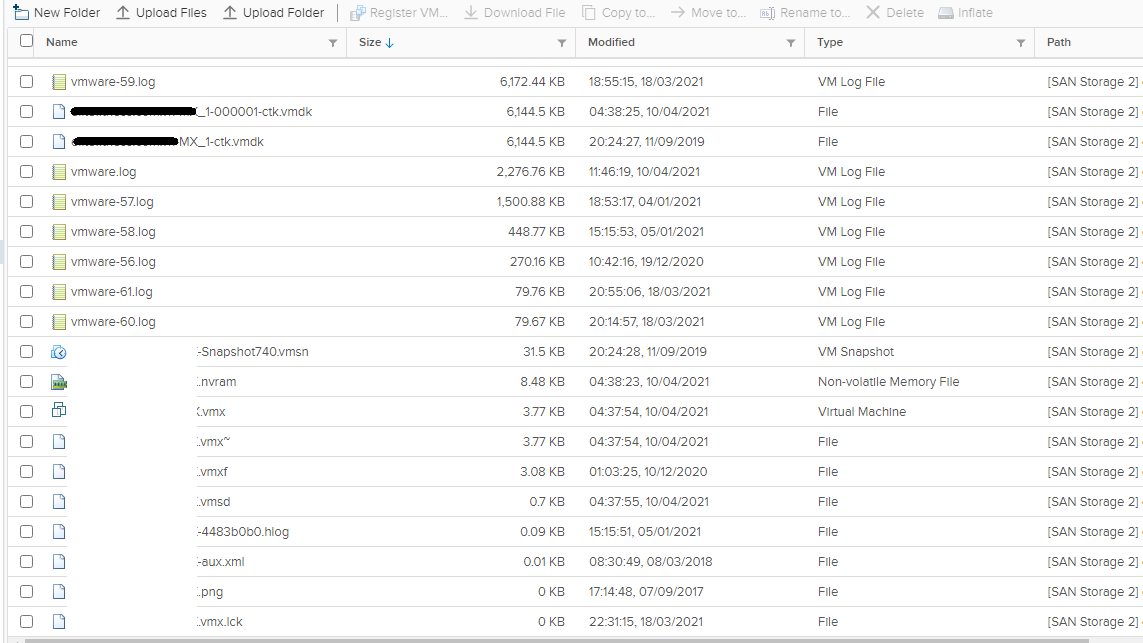

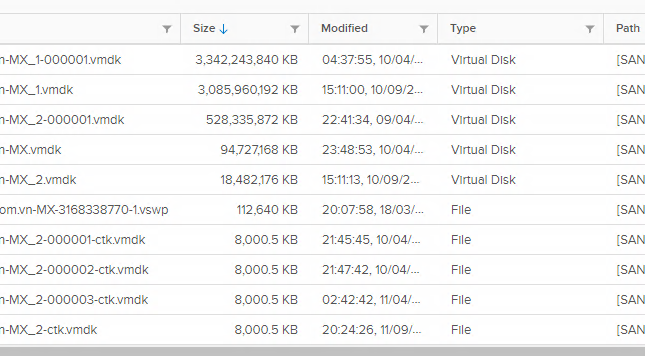

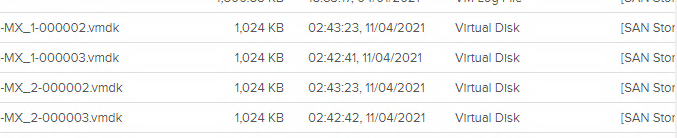

@Andrew, yes, that is an Exchange 2016 server with 5 EDBs, and we saw lots of delta files in the datastore. Here is a list of the delta and VMDK files in that VM (the red ones are the parent disks):

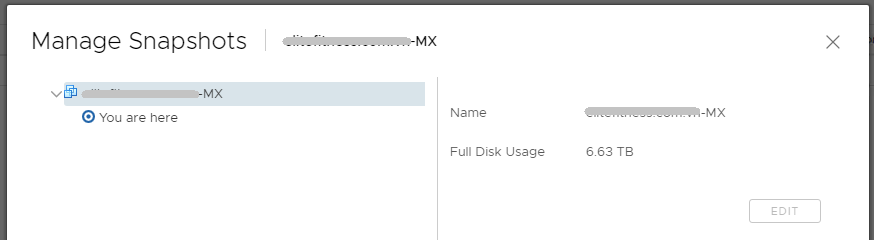

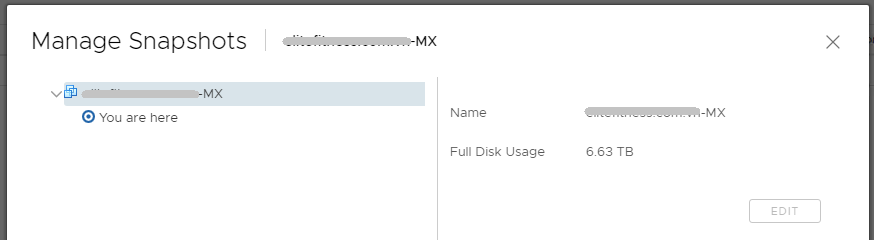

Yes, the total size of those files is about 9 TB, as expected. I also saw some "sesparse" files in the datastore (via SSH) but could not see them in the vCenter/ESXi GUI. In Snapshot Manager I saw only 1 snapshot, from Veeam.

I have read your articles many times, but I could not work out exactly how long the clone (or consolidation?) would take. That VM serves about 2500 users, so it's difficult for us to schedule the downtime :((

And that VM has been running for a few days without a backup (because of the disk consolidation process). Is it possible to run a full backup tonight?

ASKER CERTIFIED SOLUTION

ASKER

About the first 2 options, which one do you recommend based on your experience? I'd prefer the one with less downtime, please.

About the 3rd option: if I restore the whole VM, will the disks be consolidated? I ask because the last backup ran last week, and at that time the VM already had those delta files.

None of these options have less downtime!

You should have been regularly checking backups to avoid 33 snapshots on a VM! That's at least 1 month of not checking!

I would try 1 and check whether it works, or if you are concerned, move on to 2.

Both are going to require:

1. Emergency Downtime.

2. Power Off server.

3. Prepare a new datastore of correct size.

4. CLONE VM.

5. Wait and Be Patient.

ASKER

"Process of CLONING will create a new cloned VM with no snapshots, but it will require the same size as the current VM, without snapshots "

Should I increase the capacity of the VM_1 disk on the current VM before cloning? Currently it is a 6 TB disk with just over 4 TB used. I'm afraid that after cloning, the disk will be filled with 9 TB. Is that correct?

No, the guest OS disk size has nothing to do with snapshots.

Just ensure that the ESXi datastore you use for the CLONE has enough space for the VM; check the disk sizes without snapshots.

ASKER

OK, based on my experiments with a smaller VM, it will take about 12-16 hours to complete the clone. Wish me luck tonight 🙈

Should be fine. Shut it down so no one can access it and the clone is identical. Check your mailbox and record the last email received.

If you need to use the same MAC address, record the existing MAC address, and manually change the MAC address later.

CLONE, then after 12-16 hours, change the MAC address of the CLONE and start up the VM. At that point I would disable networking and check over the VM before it enters production again.

ASKER

I'm not sure about the MAC address. In which cases do I need to change it to match the old one?

Should I run the clone from vCenter or the ESXi host?

Only if you have a requirement to use the old MAC address, because a CLONE will give the new VM a different one.

If unsure, make it the same.

Oh dear! Too many snapshots!

You may now need to use the shell and execute some commands?

Happy to do this?

ASKER

Yes, I can run commands. Actually, I had to turn that VM off using esxcli kill.

When I checked the VM tasks in ESXi, it said that disk consolidation was running, but I did not see it in the GUI.

I rebooted the host and ran the clone again. If it happens again, what should I do? Is it better to switch to plan 1, "Delete All Snapshots"?

CLONE would have been greyed out if there was a task in progress.

You need to make a decision:

1. DELETE ALL SNAPSHOTS

or

2. CLONE.

I would recommend CLONE now.

ASKER

The clone is running and I think it is slower than expected.

If I have a full backup of the old VM, can Veeam run incremental backups of the new VM after cloning?

A new VM needs a new full backup; it's a different object.

ASKER

Hi, the clone completed successfully after more than 23 hours. I'm going to back up this new one with Veeam, but to prevent this problem from happening in the future, what options would you recommend in vCenter, ESXi or Veeam?

In the new VM's datastore, I see the VMDK files MX_3, MX_5 and MX_7, with corresponding -ctk and -flat files. Are they OK? I'm not sure why it named my disks like this, because the originals are MX, MX_1 and MX_2. And where are _4 and _6?

Set an alarm to warn you of snapshots, and do daily checks after backups!
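As a starting point for those daily checks, here is a sketch of a per-VM check in Python. It assumes pyVmomi-style objects: `vm.snapshot` (an object with `rootSnapshotList`, or None when there are no snapshots), tree nodes with `name`, `createTime` and `childSnapshotList`, and the `vm.runtime.consolidationNeeded` flag. The 24-hour and one-snapshot thresholds are assumptions to tune to your backup window. The consolidation flag matters because, as this thread shows, Snapshot Manager can list nothing while the disks still run on delta files.

```python
from datetime import datetime, timedelta, timezone


def iter_snapshots(nodes):
    """Yield every node of a snapshot tree, depth-first."""
    for node in nodes:
        yield node
        yield from iter_snapshots(node.childSnapshotList)


def snapshot_warnings(vm_name, snapshot_info, consolidation_needed, now=None,
                      max_age=timedelta(hours=24), max_count=1):
    """Return warnings for one VM; an empty list means nothing to report.

    snapshot_info: assumed to be vm.snapshot (None when there are no snapshots)
    consolidation_needed: assumed to be vm.runtime.consolidationNeeded
    now: timezone-aware datetime, so it compares with createTime values
    """
    now = now or datetime.now(timezone.utc)
    warnings = []
    if consolidation_needed:
        # Disks can still run on deltas even when the snapshot tree is empty.
        warnings.append(f"{vm_name}: disk consolidation needed")
    nodes = list(iter_snapshots(snapshot_info.rootSnapshotList)) if snapshot_info else []
    if len(nodes) > max_count:
        warnings.append(f"{vm_name}: {len(nodes)} snapshots")
    for node in nodes:
        if now - node.createTime > max_age:
            warnings.append(f"{vm_name}: snapshot '{node.name}' is older than {max_age}")
    return warnings
```

Run against every VM after the nightly backup window, a non-empty result would flag a stuck Veeam snapshot long before it reaches 33 deltas.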

That is a little odd; check that the disks are all present in the VM settings.

ASKER

Yes, I checked and all 3 disks are present, but they are named 3, 5 and 7 respectively. I guess that should not cause any problem?

That's very odd. If it concerns you and you would like the names to be cosmetically correct, you could try migrating to another datastore using Storage Migration, which may rename them correctly.

You will not be able to power on until the snapshot has been removed.