Elasticsearch cuts strings at a forward slash

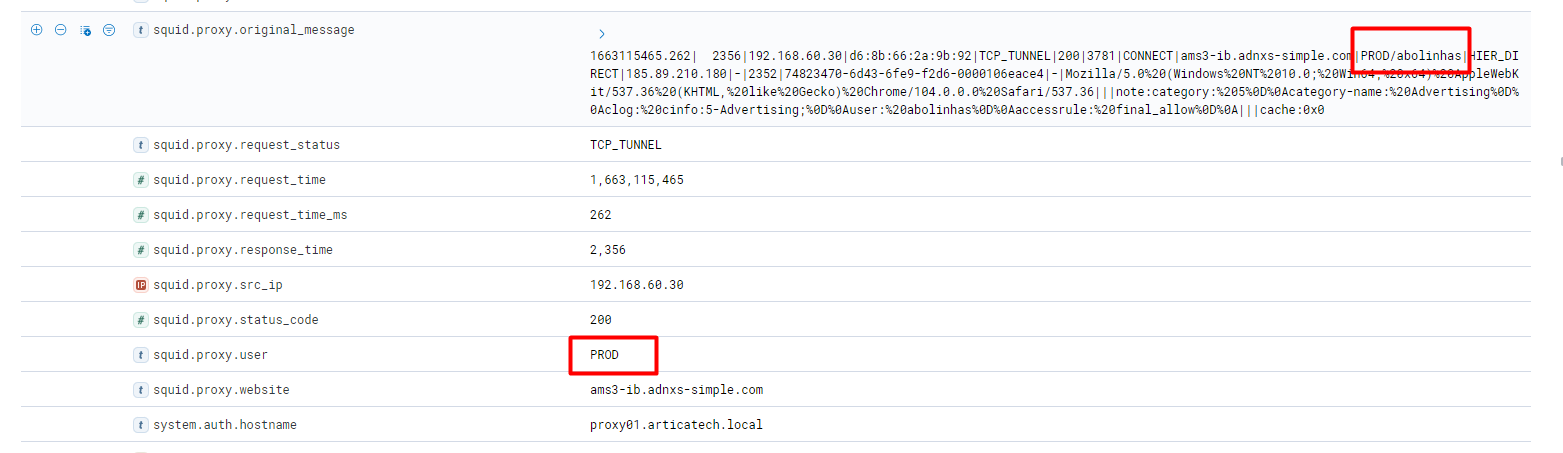

I have configured my Squid proxy to send its logs to Elasticsearch 7.17.6. Everything works fine except the USER mapping.

The expected string is DOMAIN/USER. ELK receives it correctly in the original message, but in the mapped field I only see DOMAIN; the /USER part is missing.

The field type is configured as keyword in mapping and index patterns.

filebeat pipeline.json

{
  "description": "Pipeline for parsing squid elasticsearch.log",
  "processors": [{
    "grok": {
      "field": "message",
      "patterns": [
        "%{POSINT:squid.proxy.request_time}.%{POSINT:squid.proxy.request_time_ms}\\|.*?%{NUMBER:squid.proxy.response_time}\\|%{IP:squid.proxy.src_ip}\\|(%{MAC:squid.proxy.mac}|-)\\|%{DATA:squid.proxy.request_status}\\|%{DATA:squid.proxy.status_code}\\|%{NUMBER:squid.proxy.http_size}\\|%{WORD:squid.proxy.http_method}\\|(%{IP:squid.proxy.server_ip}|%{HOSTNAME:squid.proxy.website})\\|(%{USER:squid.proxy.user})|(%{USER:{WORD}/{WORD}}|-)\\|%{WORD:squid.proxy.hierarchy}\\|(%{IP:squid.proxy.server_ip}|-)\\|(%{DATA:squid.proxy.ct}|-)\\|(%{NUMBER:squid.proxy.total_time}|-)\\|%{UUID:squid.proxy.uuid}\\|(%{HOSTNAME:squid.proxy.website}|-)\\|(%{DATA:squid.proxy.useragent}|-)\\|\\|\\|.*?(category:.*?cinfo:%{NUMBER:squid.proxy.category_id}-%{WORD:squid.proxy.category_name};|-).*"
      ],
      "ignore_missing": false,
      "ignore_failure": false
    }
  },
  {
    "rename": {
      "field": "message",
      "target_field": "squid.proxy.original_message"
    }
  },
  {
    "date": {
      "field": "squid.proxy.request_time",
      "formats": ["UNIX", "dd MMM H:m:s"],
      "ignore_failure": true
    }
  },
  {
    "geoip": {
      "field": "squid.proxy.server_ip",
      "target_field": "squid.proxy.geo",
      "ignore_missing": true
    }
  },
  {
    "remove": {
      "field": "message",
      "ignore_missing": true,
      "ignore_failure": true
    }
  }],
  "on_failure": [{
    "drop": {
      "ignore_failure": true
    }
  }]
}

Can anyone help me solve this issue?

Thanks in advance

Best regards

ASKER

Hi

Using DATA stops Filebeat from sending data to ELK:

{
  "description": "Pipeline for parsing squid elasticsearch.log.",
  "processors": [{
    "grok": {
      "field": "message",
      "patterns": [
        "%{POSINT:squid.proxy.request_time}.%{POSINT:squid.proxy.request_time_ms}\\|.*?%{NUMBER:squid.proxy.response_time}\\|%{IP:squid.proxy.src_ip}\\|(%{MAC:squid.proxy.mac}|-)\\|%{DATA:squid.proxy.request_status}\\|%{DATA:squid.proxy.status_code}\\|%{NUMBER:squid.proxy.http_size}\\|%{WORD:squid.proxy.http_method}\\|(%{IP:squid.proxy.server_ip}|%{HOSTNAME:squid.proxy.website})\\|(%{USER:{DATA}}|-)\\|%{WORD:squid.proxy.hierarchy}\\|(%{IP:squid.proxy.server_ip}|-)\\|(%{DATA:squid.proxy.ct}|-)\\|(%{NUMBER:squid.proxy.total_time}|-)\\|%{UUID:squid.proxy.uuid}\\|(%{HOSTNAME:squid.proxy.website}|-)\\|(%{DATA:squid.proxy.useragent}|-)\\|\\|\\|.*?(category:.*?cinfo:%{NUMBER:squid.proxy.category_id}-%{WORD:squid.proxy.category_name};|-).*"
      ],
      "ignore_missing": false,
      "ignore_failure": false
    }
  },
  {
    "rename": {
      "field": "message",
      "target_field": "squid.proxy.original_message"
    }
  },
  {
    "date": {
      "field": "squid.proxy.request_time",
      "formats": ["UNIX", "dd MMM H:m:s"],
      "ignore_failure": true
    }
  },
  {
    "geoip": {
      "field": "squid.proxy.server_ip",
      "target_field": "squid.proxy.geo",
      "ignore_missing": true
    }
  },
  {
    "remove": {
      "field": "message",
      "ignore_missing": true,
      "ignore_failure": true
    }
  }],
  "on_failure": [{
    "drop": {
      "ignore_failure": true
    }
  }]
}
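A possible explanation, offered as a guess rather than a confirmed diagnosis: a grok reference has the form %{SYNTAX:SEMANTIC}, where SEMANTIC is the target field name. In %{USER:{DATA}} the text {DATA} sits in the field-name slot, so it is probably not read as a pattern at all. Because the pipeline's on_failure block drops any event that fails, a grok that never matches would look exactly like Filebeat no longer sending data. One way to see the real error is the simulate endpoint (POST _ingest/pipeline/_simulate) with the on_failure block left out. The Python sketch below only builds such a request body; the shortened sample message is made up for illustration and is not a real Squid log line.

```python
import json

# Hypothetical, shortened message: only the user column between two pipes.
sample_doc = {"_source": {"message": "|DOMAIN/USER|"}}

simulate_body = {
    "pipeline": {
        "processors": [
            {
                "grok": {
                    "field": "message",
                    # The user fragment exactly as posted in the pipeline.
                    "patterns": [r"\|(%{USER:{DATA}}|-)\|"],
                }
            }
        ]
        # Deliberately no "on_failure" block, so a failure is reported
        # in the response instead of the document being dropped.
    },
    "docs": [sample_doc],
}

# Body to send with: POST _ingest/pipeline/_simulate
print(json.dumps(simulate_body, indent=2))
```

Swapping other fragments into "patterns" and comparing the responses should show which variant actually parses the user column.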

I do like regular expression type things so, figured I'd give this a try.

Using:

http://grokdebug.herokuapp.com/patterns#

WORD is defined as "\b\w+\b" so the / won't be returned. Don't know how to escape it so your pattern will find it. I'm sure it can be done but never having used the tools, no idea how. Maybe someone will be along later that can tell you how if you still need it.

Did see DATA defined as ".*?"

Instead of:

%{USER:{WORD}/{WORD}}

Try:

%{USER:{DATA}}
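An alternative to DATA that may be worth trying is a custom pattern that allows the slash. The stock USER pattern is an alias for USERNAME, defined as [a-zA-Z0-9._-]+, so like WORD it stops at the /. Separately, the | between (%{USER:squid.proxy.user}) and the following group in the original pattern is not escaped, so the regex engine treats it as alternation rather than a literal pipe, which may also be contributing. The sketch below uses Python's re module to show where each pattern stops, then builds a grok processor that uses the pattern_definitions option. The name DOMAINUSER and the shortened one-column pattern are hypothetical, and the real pattern would need the user fragment swapped in place.

```python
import json
import re

# Regex bodies of the stock grok patterns discussed above.
WORD = r"\b\w+\b"
USERNAME = r"[a-zA-Z0-9._-]+"  # %{USER} is an alias for %{USERNAME}

# Hypothetical custom pattern: a user name with an optional "DOMAIN/" prefix.
DOMAINUSER = rf"(?:{USERNAME}/)?{USERNAME}"

sample = "DOMAIN/USER"  # made-up value in the format from the question

word_match = re.match(WORD, sample).group(0)
user_match = re.match(USERNAME, sample).group(0)
domain_user_match = re.fullmatch(DOMAINUSER, sample).group(0)

print(word_match)         # DOMAIN
print(user_match)         # DOMAIN
print(domain_user_match)  # DOMAIN/USER

# Shortened grok processor showing only the user column (assumption: the
# rest of the original pattern stays as it is around this fragment).
grok_processor = {
    "grok": {
        "field": "message",
        "patterns": [r"\|%{DOMAINUSER:squid.proxy.user}\|"],
        "pattern_definitions": {"DOMAINUSER": DOMAINUSER},
    }
}
print(json.dumps(grok_processor, indent=2))
```

Since the hyphen is part of the character class, a bare - in the user column would still match, so no separate |- alternative should be needed for that field.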

That said: It appears Logstash has CSV parsing built in and allows you to change the separator. I might look into using that:

https://coralogix.com/blog/logstash-csv-import-parse-your-data-hands-on-examples/
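To illustrate why separator-based parsing avoids the problem: splitting on | never inspects the characters inside a column, so DOMAIN/USER comes through whole. The Python sketch below uses a made-up four-column line and illustrative column names, not the real Squid log layout. Elasticsearch ingest pipelines also have a csv processor with a separator option, which could play the same role without Logstash; the processor at the end is an untested assumption about how that might look here.

```python
import csv
import io

# Hypothetical, shortened log line: four pipe-separated columns only.
line = "10.0.0.5|GET|DOMAIN/USER|HIER_DIRECT"
columns = ["src_ip", "http_method", "user", "hierarchy"]  # illustrative names

# Split on the pipe; the slash inside the user column is left untouched.
row = next(csv.reader(io.StringIO(line), delimiter="|"))
record = dict(zip(columns, row))
print(record["user"])  # DOMAIN/USER

# Assumed ingest-pipeline equivalent using the csv processor.
csv_processor = {
    "csv": {
        "field": "message",
        "target_fields": [f"squid.proxy.{name}" for name in columns],
        "separator": "|",
    }
}
```

The trade-off is that every column in the line has to be listed in order, whereas grok can skip over parts of the message.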